Quick Overview

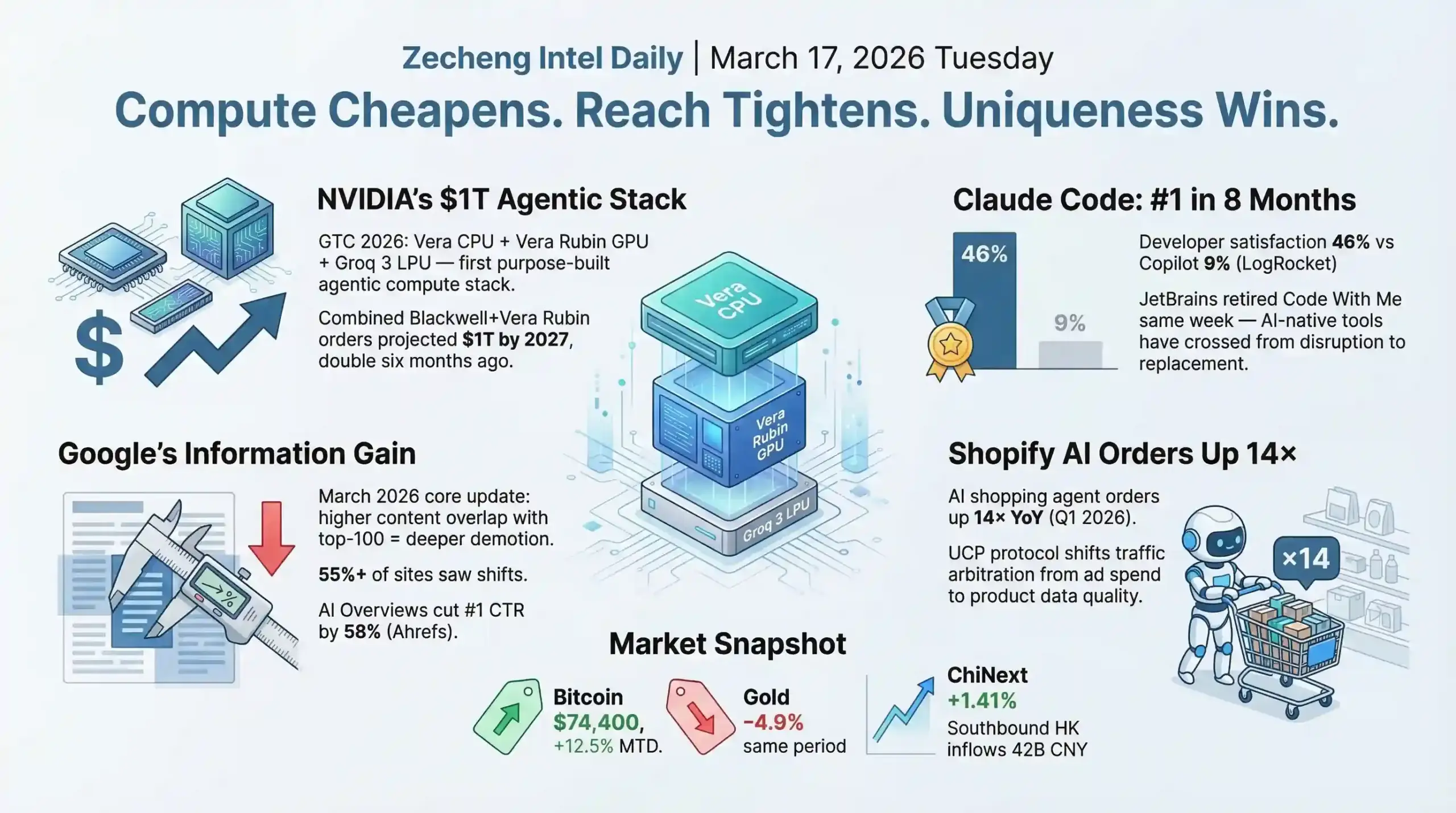

- AI & Builders: NVIDIA GTC 2026 raised the Blackwell+Vera Rubin combined order projection to $1 trillion by 2027 — double the estimate from six months ago — with Vera CPU and Groq 3 LPU forming a three-layer compute architecture purpose-built for agentic AI (source: CNBC/NVIDIA Blog); Claude Code hit #1 among AI coding tools in 8 months with 46% developer satisfaction vs GitHub Copilot's 9% (source: LogRocket).

- SEO & Search: Google's March 2026 core update introduces the Information Gain Metric, scoring content based on informational overlap with the existing top-100 results — 55%+ of sites saw ranking changes, YMYL recovery timelines stretch 6–12 months, and AI Overviews now cut the #1-ranked page's CTR by 58% (source: Ahrefs/SEMrush).

- Startups & Reddit: Shopify AI agent-driven orders grew 14x year-over-year as the Universal Commerce Protocol (UCP) — co-built with Google, Walmart, and Target — shifts e-commerce discovery from ad spend to product data quality; the US 15% universal tariff under Section 122 took effect in March 2026, structurally breaking Shein and Temu's small-parcel duty-exemption model (source: TechCrunch/Tax Foundation).

AI & Technology

Headline: Jensen Huang's $1 Trillion Bet — GTC 2026 Full Breakdown

Jensen Huang stood on stage at GTC San Jose on March 16 and doubled his own projection. NVIDIA (NVDA) now expects combined Blackwell and Vera Rubin purchase orders to exceed $1 trillion through 2027 — that's twice the $500B figure from six months ago (source: CNBC / NVIDIA Blog). Numbers like that don't land unless there's something structural supporting them, so let's pull apart what NVIDIA actually announced.

The centerpiece is Vera CPU, which NVIDIA calls "the world's first processor purpose-built for the age of agentic AI and reinforcement learning." Specs: 88 custom Olympus cores with Spatial Multithreading (each core runs two tasks simultaneously), LPDDR5X memory, 1.2 TB/s bandwidth — twice traditional rack-scale CPUs — at half the power draw. The interconnect to GPUs via NVLink-C2C delivers 1.8 TB/s coherent bandwidth, 7x PCIe Gen 6 (source: NVIDIA Newsroom). A Vera CPU rack holds 256 liquid-cooled units supporting 22,500+ concurrent CPU environments at full performance.
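The rack figures above can be cross-checked with a little arithmetic. The sketch below uses only numbers quoted in this section; the variable names are mine, and the PCIe baseline is derived from the "7x" claim rather than taken from a spec sheet.

```python
# Sanity-check the Vera CPU figures quoted above.
CORES_PER_CPU = 88        # custom Olympus cores per Vera CPU
CPUS_PER_RACK = 256       # liquid-cooled units per rack
NVLINK_C2C_TB_S = 1.8     # coherent CPU-GPU bandwidth in TB/s
PCIE_MULTIPLE = 7         # "7x PCIe Gen 6"

cores_per_rack = CORES_PER_CPU * CPUS_PER_RACK
implied_pcie_gb_s = NVLINK_C2C_TB_S * 1000 / PCIE_MULTIPLE

print(f"Cores per rack: {cores_per_rack:,}")
print(f"Implied PCIe Gen 6 baseline: {implied_pcie_gb_s:.0f} GB/s")
```

The core count per rack comes out at 22,528, which lines up with the "22,500+ concurrent CPU environments" figure if each environment maps to one core. That one-to-one mapping is my inference, not something stated in the quoted material.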

Already signed up as customers: Alibaba, ByteDance, Meta, Oracle Cloud, CoreWeave, Lambda, Together.AI. Two Chinese hyperscalers on that list despite tariff turbulence and geopolitical noise — compute procurement logic has not yet fragmented along national lines. That's the data point I keep coming back to.

Then there's Groq 3 LPU. NVIDIA acquired Groq for $20B in December and this is their first chip from that deal, shipping Q3 2026. The Groq 3 LPX rack holds 256 LPUs and is designed to sit physically beside the Vera Rubin GPU rack. What NVIDIA is building here is a three-layer compute architecture: Vera CPU for agentic orchestration, Vera Rubin GPU for training and inference, Groq 3 LPU for ultra-fast token generation. This isn't a chip company selling components. It's vertical integration of the entire agentic AI compute stack.

The robotics piece got less coverage but tells a bigger story. A robot playing Olaf from Frozen walked and talked on stage — trained inside NVIDIA's joint simulation environment with Disney. NemoClaw, the open-source agent deployment stack (DGX Spark + DGX Station + NemoClaw), is now enterprise-ready through NemoClaw Reference, with OpenClaw integration making it the enterprise entry point for the OpenClaw ecosystem.

My read: GTC 2026 was NVIDIA announcing that it intends to own the full stack, from silicon to agentic AI deployment to robotics. Whether $1 trillion in orders materializes by 2027 depends on how fast enterprises deploy agents at scale, and that is still an open question.

Builder Insights

Claude Code #1, JetBrains Surrenders — the AI Coding Tool Endgame is Here

Claude Code went from zero to the most-used AI coding tool in 8 months (released May 2025), overtaking GitHub Copilot and Cursor (source: LogRocket / NxCode / DEV Community). Developer satisfaction sits at 46% for Claude Code versus 19% for Cursor and 9% for GitHub Copilot. It defaults to Claude Sonnet 4.6, which benchmarks at 79.6% on SWE-bench.

The same week, JetBrains announced it is sunsetting Code With Me — their collaborative coding feature — with 2026.1 as the last supported release. Public relays shut down Q1 2027. No new features, sales discontinued (source: JetBrains Platform Blog / Neowin). JetBrains had already cancelled Fleet IDE separately. Their statement was candid: they'll keep investing in remote development capabilities, but collaborative coding as they knew it has been made obsolete.

This is the pattern playing out in slow motion: AI-native tools like Claude Code and Cursor didn't just add features on top of existing IDE workflows, they redefined what "collaborative coding" means. When an AI agent can work alongside you asynchronously across multiple files, synchronous real-time collaboration features become a niche edge case. JetBrains isn't failing — they're just updating their product map to acknowledge where the world actually is.

Windsurf Wave 13 added Arena Mode: side-by-side model comparison with hidden identities and voting. This is genuinely clever product design — the IDE itself becomes a live model evaluation platform, and Windsurf gets better signal on which models work best for which tasks. Cursor 2.0 shipped a 4x faster Composer model, multi-agent interface supporting up to 8 parallel agents, and Plan Mode with editable Markdown. The whole ecosystem is converging on the same architecture: AI plans, AI executes in parallel, human reviews.
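The "AI plans, AI executes in parallel, human reviews" shape can be sketched in a few lines. This is a toy under stated assumptions, not how Cursor or Windsurf implement it: `run_agent` and `execute_plan` are hypothetical names, and the agent body is a stand-in for real model and tool calls. The only number taken from the text is the cap of 8 parallel agents.

```python
import asyncio

MAX_PARALLEL_AGENTS = 8  # the cap the text cites for Cursor 2.0


async def run_agent(step: str, limit: asyncio.Semaphore) -> str:
    """Hypothetical agent: waits for a free slot, then 'executes' one plan step."""
    async with limit:
        await asyncio.sleep(0)  # stand-in for real model and tool calls
        return f"done: {step}"


async def execute_plan(plan: list[str]) -> list[str]:
    """Run every plan step concurrently, never exceeding the agent cap."""
    limit = asyncio.Semaphore(MAX_PARALLEL_AGENTS)
    return await asyncio.gather(*(run_agent(step, limit) for step in plan))


# The plan is plain text a human could edit before execution.
plan = ["update schema", "migrate callers", "add tests"]
results = asyncio.run(execute_plan(plan))

# Results come back in plan order, ready for human review.
for line in results:
    print(line)
```

The semaphore is the design point: the plan can be arbitrarily long, but concurrency stays bounded, and `asyncio.gather` returns results in plan order so the review step reads top to bottom.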

OpenAI Codex Security: 1.2 Million Commits Scanned, 10,561 High-Severity Findings

OpenAI launched Codex Security on March 6, entering the application security space exactly 14 days after Anthropic did the same with Claude Code Security. Beta results: scanned 1.2 million commits, surfaced 792 critical findings and 10,561 high-severity issues, with false positive rates falling 50%+ across all repositories (source: The Hacker News / OpenAI Blog).
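To put the beta numbers in proportion, it helps to normalize them per commit. A minimal sketch using only the figures quoted above (the helper name `per_10k` is mine):

```python
# Beta figures quoted above.
COMMITS_SCANNED = 1_200_000
CRITICAL_FINDINGS = 792
HIGH_FINDINGS = 10_561


def per_10k(findings: int, commits: int) -> float:
    """Findings per 10,000 scanned commits."""
    return findings / commits * 10_000


critical_rate = per_10k(CRITICAL_FINDINGS, COMMITS_SCANNED)
high_rate = per_10k(HIGH_FINDINGS, COMMITS_SCANNED)
commits_per_finding = COMMITS_SCANNED / (CRITICAL_FINDINGS + HIGH_FINDINGS)

print(f"Critical: {critical_rate:.1f} per 10k commits")
print(f"High:     {high_rate:.1f} per 10k commits")
print(f"Roughly one critical-or-high finding per {commits_per_finding:.0f} commits")
```

This treats every finding as belonging to a distinct commit, which the source does not confirm, so read the last line as an order-of-magnitude figure.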

The technical approach matters here. Traditional SAST tools use pattern matching and rule libraries — they're "structurally blind" to entire vulnerability classes that require understanding code intent and project context. Codex Security builds a deep contextual model of the entire project before reasoning about potential vulnerabilities. OpenAI published a detailed explainer on why their approach doesn't include a SAST report — a useful case study in how LLM reasoning enables entirely different product categories rather than just incrementally better versions of existing tools.

Available now in research preview for ChatGPT Pro, Enterprise, Business, and Edu customers — free for one month. The competitive dynamic here is clear: both Anthropic and OpenAI see enterprise security as a beachhead for deeper developer platform adoption.

Other AI Updates

Mistral Leanstral: $36 Beats $549 — Specialized Models Are Cutting Into General-Purpose Territory

Mistral released Leanstral on March 16 — "the first open-source code agent designed for Lean 4," their description (source: Mistral AI Official Blog). The model has 6B active parameters, sparse architecture, Apache 2.0 license. On the ProofNet benchmark: pass@2 score of 26.3, beating Claude Sonnet by 2.6 points, at $36 per run versus Sonnet's $549 — roughly a 15x cost difference. At pass@16 it reaches 31.9, 8 points ahead of Sonnet. It also outperforms Qwen3.5 397B (score 25.4 at ~$100 for 4 passes) and GLM5 744B (score 16.6).
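The cost gap is easier to judge as cost per benchmark point. The sketch below uses only the pass@2 numbers quoted above; Sonnet's score is derived from the stated 2.6-point gap, and I am assuming the $549 figure corresponds to that same comparison run.

```python
# Figures quoted above; Sonnet's score is derived from the 2.6-point gap.
leanstral = {"score": 26.3, "cost_usd": 36}
sonnet = {"score": 26.3 - 2.6, "cost_usd": 549}

cost_ratio = sonnet["cost_usd"] / leanstral["cost_usd"]


def usd_per_point(run: dict) -> float:
    """Dollars spent per benchmark point."""
    return run["cost_usd"] / run["score"]


print(f"Cost ratio: {cost_ratio:.2f}x")
print(f"Leanstral: ${usd_per_point(leanstral):.2f} per point")
print(f"Sonnet:    ${usd_per_point(sonnet):.2f} per point")
```

Because Leanstral also scores higher, the per-point gap is slightly wider than the headline 15x cost ratio.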

Lean 4 is a formal theorem prover used in frontier mathematics research and mission-critical software verification. The bottleneck isn't computation — it's human verification time. Leanstral targets that bottleneck directly. The market is small but defensible: if you can do formal proof completion reliably, there's no real alternative. Available via free API endpoint (labs-leanstral-2603), integrated into Mistral Vibe for zero-setup use, self-hosting supported.

The pattern Leanstral represents is worth sitting with: task-specific models are taking individual use cases away from general-purpose frontier models one by one. The cost structure of narrow, highly specialized models makes previously uneconomical AI workflows viable — and that changes the build calculus for anyone putting together an AI product stack.

xAI Rebuild: Musk Says "Not Built Right the First Time," 9 of 11 Co-founders Gone

On March 12, Elon Musk publicly acknowledged that "xAI was not built right first time around, so is being rebuilt from the foundations up" (source: Fortune / CNBC / Electrek). Nine of the original 11 co-founders have departed — two last week, four the month before, plus roughly 12 senior engineers. Macrohard, the flagship white-collar AI agent project, is paused after its just-appointed leader Toby Pohlen left shortly after taking the role.

Musk is now recruiting from Cursor — he hired Andrew Milich and Jason Ginsberg — and publicly apologized to previously rejected candidates. SpaceX acquired xAI in February at a combined valuation of ~$1.25 trillion. The $230B E-round investors include NVIDIA, Cisco, Valor Equity, Fidelity, the Qatar Investment Authority, and MGX.

The interesting thing here isn't that a well-funded company admitted architectural problems — it's where Musk is recruiting to fix it. Going to Cursor specifically signals what xAI thinks it needs: people who know how to build AI agent tooling that developers actually want to use. The Macrohard project — AI for white-collar work — is paused but not cancelled. That category is where Anthropic, OpenAI, and now xAI all want to be. Whether xAI can rebuild fast enough while competitors ship daily is the real question.

Anthropic vs. Pentagon: Refusing Weaponization Made Claude Go Viral

Anthropic filed a lawsuit on March 9 in the U.S. District Court for the Northern District of California against the Trump administration (source: CNBC / TechRadar / Axios). The sequence: Anthropic refused to let Claude be used for autonomous weapons systems or mass domestic surveillance. Defense Secretary Pete Hegseth designated Anthropic a "supply chain risk to national security." Trump directed federal agencies to immediately stop using Anthropic technology. Agencies that dropped Claude: State Department (switching to OpenAI GPT-4.1), Treasury, HHS, Secret Service, and several defense contractors.

Anthropic's lawsuit calls the actions "unprecedented and unlawful" and argues irreparable harm.

The outcome so far has been the opposite of what the blacklist intended. Claude hit #1 on the U.S. App Store on March 1. Free users have grown 60%+ since January. Run-rate revenue now exceeds $14B annually. Customers spending $100K+/year grew 7x in the past year.

The mechanism here is instructive. Anthropic's refusal to weaponize Claude wasn't framed as a political statement — it was a product safety position. That framing turned a government contract dispute into a consumer values story, which played enormously well with the developer and general public audience that actually drives Claude's growth. Meanwhile, OpenAI accepted the government business Anthropic left on the table. Whether that turns out to be the right call commercially depends on how the lawsuit resolves and whether federal AI procurement practices shift under political pressure.

Scientists Are Asking Whether to Hire AI Instead of Graduate Students

Associate Professor Ariel Rosenfeld of Bar-Ilan University wrote in Science AAAS that he is seriously considering routing new research tasks — literature synthesis, code writing, model training, statistical analysis — to AI rather than bringing on graduate students (source: Science / AAAS). His most uncomfortable admission: he probably wouldn't recruit his younger self today.

His concern isn't that AI replaces grad students wholesale. It's that "the default assumption of taking on students will quietly erode." The piece sparked extensive Hacker News discussion about systematic implications for academic pipelines — who enters research if the apprenticeship model at the base of the pipeline shrinks?

The structural point extends well beyond academia. Graduate student onboarding was always partly about absorbing high-value but time-intensive work that professors couldn't justify doing themselves. AI has now made that trade-off calculation explicit in a way it wasn't before. The same calculation is playing out in law firms, consulting practices, and software teams — not "will AI replace everyone" but "will it change who we hire at the entry level, and what happens to the people who would have filled those roles."

Analysis

Three developments covered above connect.

NVIDIA bet $1 trillion on agentic AI infrastructure. OpenAI and Anthropic both shipped AI security tools within two weeks of each other. Claude Code took #1 in developer tooling while JetBrains retired its collaborative feature. These aren't separate stories — they're the same story at different layers of the stack.

The infrastructure layer (NVIDIA's Vera CPU, Groq 3 LPU) is betting that agentic AI deployments will scale to the point where existing compute architectures become a bottleneck. The tooling layer (Claude Code, Cursor 2.0, Codex Security) is already proving that AI agents running complex multi-step tasks are the real use case, not chatbots. The application layer is figuring out where agents get deployed first — and security code review and white-collar knowledge work are the current answers.

Mistral's Leanstral is a signal that the economics of specialized models are getting interesting. A model with 6B active parameters outscoring a frontier model at roughly one-fifteenth the cost in a narrow domain is not a fluke; it's the result of focused training data and task-specific evaluation. For anyone building products on AI, this shifts the question from "which foundation model do I use" to "which model is actually calibrated for this exact task."

The xAI situation is the outlier that makes the trend legible. Musk admitting architectural failure and recruiting from Cursor tells you what the builders who got the agentic AI tooling right are worth right now. The talent flowing from Cursor to xAI is mapping the competitive battlefield more clearly than any press release.

Business & Startups

AI Shopping Agents Are Replacing the Pay-to-Win Model of E-Commerce Discovery

Shopify's AI agent-driven orders grew 14x year-over-year as of Q1 2026 — and Shopify is actively building the infrastructure layer to make that permanent (source: TechCrunch/Retail Brew, March 16, Harley Finkelstein).

The Universal Commerce Protocol (UCP), co-developed by Shopify, Google, Walmart, and Target, is the structural piece. It lets AI shopping agents read a merchant's full commerce layer — product catalog, loyalty programs, discount codes, subscription terms — and complete purchases autonomously. Finkelstein's key framing: this creates "merit-based shopping." Agents rank products on actual attributes, not who paid more for ad placement. A small brand with clean data, genuine reviews, and strong product detail pages can compete against a major retailer without outspending them.
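A toy model makes the "merit-based" idea concrete. This is not the UCP schema or any real agent's ranking logic: the `Listing` fields, the weights, and the sample merchants are all hypothetical, chosen only to show a score that reads product signals and ignores ad spend.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Listing:
    merchant: str
    rating: float             # average review score, 0 to 5
    data_completeness: float  # share of structured fields populated, 0 to 1
    ad_spend: float           # present in the record, deliberately unused


def merit_score(listing: Listing) -> float:
    """Toy score built only from product signals; ad_spend is ignored."""
    return 0.6 * (listing.rating / 5) + 0.4 * listing.data_completeness


listings = [
    Listing("big_retailer", rating=4.1, data_completeness=0.55, ad_spend=50_000),
    Listing("small_brand", rating=4.6, data_completeness=0.95, ad_spend=0),
]

ranked = sorted(listings, key=merit_score, reverse=True)
for item in ranked:
    print(f"{item.merchant}: {merit_score(item):.3f}")
```

With these made-up inputs the small brand ranks first despite spending nothing on ads, which is the dynamic Finkelstein is describing.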

"You can't just scrape information and expect a great experience," Finkelstein said. The merchants who show up well in agent-driven discovery are the ones who've invested in structured, machine-readable product data. That's not a marketing task — it's an infrastructure task.

Simultaneously, Google is testing "Sponsored Shops" in Shopping results: blocks that group multiple products from a single retailer into one branded unit, with store name, ratings, and product assortment all visible before a user clicks (source: Search Engine Land, PPC specialist Arpan Banerjee, LinkedIn). Instead of competing SKU-by-SKU on bid price, brands compete on catalog depth and brand trust signals.

Put these two together: the traditional e-commerce funnel — search keyword → compare product listings → click cheapest option — is being replaced by two new entry points. AI agents that know preferences and make contextual recommendations. Store-level branded placements that evaluate merchants holistically. Both reward the same things: product data quality, brand signal clarity, ratings authenticity.

The tariff backdrop makes this transition feel urgent rather than gradual. The Trump administration's 15% universal tariff under Section 122 took effect in March 2026 (Treasury Secretary Scott Bessent confirmed; source: Tax Foundation/Avalara). The de minimis exemption ($800 threshold) remains suspended. The EU adds a €3 duty on sub-€150 parcels starting July 2026. Shein and Temu's entire model was built on small-parcel duty exemptions, and that model is now structurally broken. For US-based DTC brands sourcing from China, cost structures have shifted materially. Apparel brands are getting hit hardest.
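A rough calculation shows why low-value parcels feel this most. The sketch assumes the 15% rate applies to declared value and that the EU's €3 is a flat charge per parcel; neither detail is spelled out in the sources cited here, and real customs math has more moving parts. Function names are mine.

```python
US_TARIFF_RATE = 0.15      # 15% universal tariff quoted above
EU_FLAT_DUTY_EUR = 3.0     # assumed to be a flat charge per parcel
EU_THRESHOLD_EUR = 150.0   # flat duty applies to parcels under this value


def us_duty(declared_usd: float) -> float:
    """Duty on a US-bound parcel that de minimis no longer exempts."""
    return declared_usd * US_TARIFF_RATE


def eu_effective_rate(declared_eur: float) -> float:
    """Flat EU duty expressed as a share of the parcel's value."""
    if not 0 < declared_eur < EU_THRESHOLD_EUR:
        raise ValueError("sketch only covers parcels between 0 and 150 EUR")
    return EU_FLAT_DUTY_EUR / declared_eur


# The same face values are used for USD and EUR purely for illustration.
for value in (10, 25, 100):
    print(f"value {value:>3}: US duty ${us_duty(value):.2f}, "
          f"EU effective rate {eu_effective_rate(value):.0%}")
```

Under these assumptions a flat per-parcel charge weighs heaviest on the cheapest shipments, which is exactly the segment the small-parcel model depends on.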

The businesses positioned well for 2026 aren't the ones with the lowest landed cost. They're the ones with the cleanest product data, most genuine brand signals, and supply chains that aren't entirely China-dependent. What Finkelstein calls merit-based shopping, the tariff regime is forcing by necessity.

Reddit Pain Point Analysis

Two recurring tensions are surfacing in builder communities this week, and they both point at the same underlying anxiety.

The vibe coding credibility problem. A builder shared a browser flight simulator with real-world scenery on r/SideProject — shipped in a weekend using AI tools. The most-voted response: "Almost every impressive-looking vibe-coded demo is simply open source code being parroted by the LLM, then the slop author pats themselves on the back for putting together a half-assed spaghetti code prototype without understanding that all the hard work was done by other people." This captures something real. The tools are powerful enough to produce demos that look built. The community increasingly asks: what does "I built this" actually mean when an AI wrote 90% of the code? Attribution, genuine understanding, and craftsmanship are the open questions.

The AI wrapper problem. Google and Accel reviewed over 4,000 startup pitches for their India Atoms accelerator and found approximately 70% were "AI wrappers" — thin products with no proprietary moat beyond an API call (source: TechCrunch). They selected 5 companies, none fitting that pattern. This mirrors what's being discussed on r/SaaS and r/startups constantly: building something with AI is easy now. Building something defensible is not. The gap between "we use AI" and "AI is our competitive advantage" is wide, and the startup community is learning to see through the former.

Both tensions converge on the same question: as the cost of building something drops toward zero, what actually creates value? The answer the data keeps pointing to — domain expertise, real distribution, genuine user problems — hasn't changed. Only the surface layer has.

Builder Updates

@Jeremy_Horowitz (Shopify ecosystem analyst) posted a breakdown of the current Shopify landscape: roughly 3.6 million stores worldwide (+24% YoY), with approximately 125,000 generating over $1M in annual revenue (+26%) and about 69,000 on Shopify Plus (+81%). That last number is the signal — Plus growing at 81% means merchants with real revenue are accelerating their consolidation on Shopify's platform. When you layer in the 14x AI agent order growth, Shopify's infrastructure bet starts looking like a strong platform play with deep merchant lock-in. The 2024 verified numbers confirm the scale: $292.3B GMV (+24%), $8.88B revenue (+26%), 875M+ consumers transacting on-platform.

Chamber (YC W26) launched with a focused thesis: 30–60% of enterprise GPU capacity sits completely idle despite billions in hardware investment. Their platform acts as an autonomous infrastructure team — monitoring clusters, predicting demand, detecting bad nodes, reallocating in real time. Average outcome: companies run 50% more workloads on existing hardware without adding headcount. Founded by ex-Amazon infrastructure veterans who delivered hundreds of millions in cost savings at scale. The GPU waste problem is real and well-documented; Chamber's bet is that the solution is agentic orchestration, not hardware procurement.
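The two Chamber figures can be checked against each other. The sketch assumes workloads scale linearly with utilized capacity, which is a simplification of mine rather than anything Chamber claims; `headroom` is a hypothetical helper.

```python
def headroom(idle_fraction: float) -> float:
    """Extra workload, as a share of current load, that fits into idle capacity."""
    busy = 1.0 - idle_fraction
    return idle_fraction / busy


for idle in (0.30, 0.60):
    print(f"{idle:.0%} idle -> up to {headroom(idle):.0%} more workloads")

# Idle share at which 50% more work would exactly fill the cluster.
break_even_idle = 0.5 / 1.5
print(f"50% more workloads needs at least {break_even_idle:.0%} idle capacity")
```

Under that linear assumption, a 50% average uplift is plausible across most of the quoted 30–60% idle range, though not quite at its low end.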

ProductHunt & Indie Highlights

Donely launched this week with a notable pricing hook: "Your own OpenClaw instance for $0/month + free AI usage." For indie hackers building agent workflows, the no-cost entry point directly challenges the assumption that AI infrastructure has to be expensive. The OpenClaw ecosystem is accelerating faster than most expected — ByteDance, Tencent, and Alibaba have all launched enterprise variants within weeks of each other. MiniMax's stock ran 488% after being named an official OpenClaw model provider, pushing its market cap past Baidu. The open-source layer and the enterprise layer are converging faster than most predicted — Donely is betting the independent builder layer follows the same curve.

Key Takeaway

The e-commerce playbook is being rewritten from two directions at once. On the demand side, AI shopping agents and store-level Google placements are replacing keyword-driven product discovery — and both systems reward data quality and brand trust over ad spend. On the supply side, the 15% tariff regime and de minimis suspension have permanently changed the unit economics for cross-border supply chains.

Antonio Gracias, early backer of Tesla and SpaceX, called startups built to thrive in chaos "proentropic" at the Upfront Summit on March 16 (source: TechCrunch). The concept maps directly onto what's happening in e-commerce right now: operators who built for stable conditions — cheap tariffs, predictable ad auctions, de minimis loopholes — are under maximum pressure. The ones who built for resilience from the start are watching their competitive position improve by default.

The Shopify AI agent numbers (14x order growth), the Google Sponsored Shops test, and the tariff-driven supply chain restructuring are not three separate stories. They are one structural transition. The merchants who understand this early enough to restructure their product data, brand positioning, and supply chains accordingly are the ones who will look very smart in 12 months. The ones who wait for clarity before acting will find the window already closed.

SEO & Search Ecosystem

Google March 2026 Core Update: Information Gain Metric Is Now Scoring Your Content

Google's March 2026 core update is enforcing a new ranking signal called the Information Gain Metric, powered by the Gemini 4.0 Semantic Filter. The mechanism: Google calculates the informational overlap between your content and the existing top-100 results. The higher the overlap, the deeper the demotion. This is the technical underpinning of the update — not just another E-E-A-T enforcement sweep, but a semantic deduplication layer applied at scale.
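Google has not published how this overlap is computed, so the following is only a self-audit proxy, not the metric itself. It measures what share of a draft's three-word sequences already appear in the pages you are competing with. The function names and sample texts are hypothetical.

```python
import re


def shingles(text: str, n: int = 3) -> set[tuple[str, ...]]:
    """Set of n-word sequences in the text, lowercased."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def overlap_with_corpus(draft: str, ranking_pages: list[str], n: int = 3) -> float:
    """Share of the draft's shingles that already appear in any ranking page."""
    draft_set = shingles(draft, n)
    if not draft_set:
        return 0.0
    seen = set().union(*(shingles(page, n) for page in ranking_pages))
    return len(draft_set & seen) / len(draft_set)


ranking = ["Core Web Vitals measure loading speed and layout stability"]
rehash = "Core Web Vitals measure loading speed and layout stability"
original = "We logged INP on 40 checkout pages for a month"

print(f"rehash overlap:   {overlap_with_corpus(rehash, ranking):.0%}")
print(f"original overlap: {overlap_with_corpus(original, ranking):.0%}")
```

Lexical overlap is a crude stand-in for semantic overlap: a paraphrase would score as novel here while adding nothing, so treat a low score as necessary rather than sufficient.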

Third-party monitoring across SEO Vendor, Search Engine Journal, and Quantifimedia puts the impact at 55%+ of sites seeing visible ranking changes within two weeks of rollout. The biggest losers: sites chasing trending topics outside their established expertise (drops up to 60%), and thin finance affiliate or coupon sites (systematic deindexing in some cases). YMYL verticals — health, insurance, finance, legal — took the concentrated hits. Recovery timelines for YMYL sites are estimated at 6 to 12 months, which makes this cycle unlike typical core updates where recovery can happen in weeks.

Winners are clearly identifiable too. E-E-A-T-strong ecommerce sites gained 23% in visibility. Among current top-ranking content, 73% comes from creators with verifiable professional expertise in their subject area (source: SEMrush / Quantifimedia). Author signals — credentials, bylines, about pages — are now explicit ranking inputs, not background factors.

The technical dimension compounds the pressure. Of the sites with Core Web Vitals issues, 47% saw ranking drops in this cycle, with LCP, INP, and CLS all carrying increased weight. For sites that have been deferring technical work, this update makes the cost visible in rankings.

AI Overviews is adding a second layer of pressure. When an AI Overview appears, the #1-ranked page sees a 58% drop in CTR (Ahrefs). Across all U.S. Google searches, roughly 60% end without any click (Pew Research Center, Ahrefs). The total addressable click pool is shrinking. This isn't a future concern — it's the current baseline that SEO strategies need to price in.

For sites where Google's March 2026 core update caused drops, the diagnostic priority list runs: check Information Gain (are you saying something that isn't already in the top results?), check author credibility signals, check Core Web Vitals, then check content freshness in YMYL areas.

Builder Insights

Adam Enfroy on Pinterest as an AI-Powered Visual Search Engine

Adam Enfroy's latest video reframes how to think about Pinterest for affiliate traffic. His core thesis: Pinterest is not a social platform — it's a visual search engine with purchase-intent queries, structurally similar to Google but with far less competition on high-value terms. The platform has over 500 million monthly active users as of 2026, and Pinterest's own data shows 85% of weekly users have made a purchase based on something they saw on the platform.

The AI automation angle is where it gets practical. He walks through using Google's new tool Clelli to auto-generate Pins at scale, paired with AI-assisted keyword research to target high buyer-intent queries in evergreen niches — home decor, personal finance, health and wellness. His program selection filter: look for affiliate offers paying $50+ per sale, or recurring commissions on subscriptions. Single-digit commission programs don't scale. The broader framework — treating any visual platform with search functionality as an SEO channel — translates beyond Pinterest to YouTube Shorts and TikTok search (source: Adam Enfroy YouTube, March 2026).

Semrush One and Ahrefs Brand Radar Launch the Same Week

Two major SEO platforms shipped AI search visibility tools within days of each other. Semrush launched Semrush One, an integrated platform with Enterprise AIO — tracking brand appearance frequency across ChatGPT, Perplexity, Google Gemini, Copilot, and AI Overviews simultaneously. It also ships with MCP integration, allowing LLMs to query Semrush keyword data directly for automated research workflows. Ahrefs launched Brand Radar tracking 271 million prompts across AI platforms, claiming a larger prompt database than Semrush.

Two market leaders shipping the same feature class in the same week is not a coincidence. GEO tracking has moved from early-adopter tooling to standard infrastructure.

AEO & AI Search Watch

The schema data from March 2026 points in a clear direction: triple-layer structured markup (Article + ItemList + FAQPage combined) delivers 1.8x higher AI citation rates compared to Article Schema alone (source: AIVO, March 2026). And 44.2% of LLM citations pull from the first 30% of a piece of content (Growth Memo, February 2026) — meaning the opening section is doing double duty: ranking signal for Google and citation surface for AI search.
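For readers who have not combined these types before, here is one way the three layers might sit together in a single JSON-LD graph. The schema.org types and properties are real; the helper name, the sample headline, author, list items, and FAQ are placeholders, and this is one reasonable layout rather than the specific markup the AIVO figure was measured on.

```python
import json


def triple_layer_jsonld(headline, author, list_items, faqs):
    """Combine Article, ItemList, and FAQPage nodes in one JSON-LD graph."""
    return {
        "@context": "https://schema.org",
        "@graph": [
            {"@type": "Article", "headline": headline,
             "author": {"@type": "Person", "name": author}},
            {"@type": "ItemList", "itemListElement": [
                {"@type": "ListItem", "position": i, "name": name}
                for i, name in enumerate(list_items, start=1)]},
            {"@type": "FAQPage", "mainEntity": [
                {"@type": "Question", "name": question,
                 "acceptedAnswer": {"@type": "Answer", "text": answer}}
                for question, answer in faqs]},
        ],
    }


# Placeholder content for illustration only.
markup = triple_layer_jsonld(
    headline="Example headline",
    author="Example Author",
    list_items=["First takeaway", "Second takeaway"],
    faqs=[("What changed?", "A short, direct answer.")],
)
print(json.dumps(markup, indent=2))
```

The printed JSON would go inside a `<script type="application/ld+json">` tag on the page.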

For brands without third-party review profiles on Trustpilot, G2, or Capterra: ChatGPT citation rates for verified-profile domains run 3x higher than those without (SE Ranking, November 2025). AI search is using reputation infrastructure as a credibility proxy.

What Actually Matters After This Update

The Information Gain logic demands an audit of your content against the current top results before writing. Repeating what's already there isn't just a missed opportunity — it's an active demotion signal. Write something different. That principle is now baked into the algorithm.

Core Web Vitals are no longer background hygiene. With 47% of slow sites taking ranking hits in this cycle, a full CWV audit is the highest-leverage technical action right now — fix LCP (target under 2.5s), eliminate CLS, prioritize INP for interactive pages.
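A small triage helper can turn field measurements into a to-do list. The 2.5s LCP target comes from the text above; the remaining cutoffs are Google's published Core Web Vitals thresholds as I understand them, so verify them against current documentation before relying on this. The function name and the sample page values are hypothetical.

```python
# (good_max, needs_improvement_max) per metric.
THRESHOLDS = {
    "lcp_s": (2.5, 4.0),    # Largest Contentful Paint, seconds
    "inp_ms": (200, 500),   # Interaction to Next Paint, milliseconds
    "cls": (0.1, 0.25),     # Cumulative Layout Shift, unitless
}


def rate(metric: str, value: float) -> str:
    """Classify one measurement as good, needs improvement, or poor."""
    good_max, needs_improvement_max = THRESHOLDS[metric]
    if value <= good_max:
        return "good"
    if value <= needs_improvement_max:
        return "needs improvement"
    return "poor"


# Hypothetical measurements for a single page.
page = {"lcp_s": 3.1, "inp_ms": 180, "cls": 0.31}
for metric, value in page.items():
    print(f"{metric}: {value} -> {rate(metric, value)}")
```

Sorting pages by how many metrics land in "poor" gives a reasonable first pass at where to spend engineering time.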

The author credibility signal is now explicit. If a site doesn't surface author credentials, professional background, or verifiable expertise in the content itself and in structured author markup, ranking points are being left on the table in YMYL and competitive informational queries. The March 2026 update is a forcing function to do what quality-focused sites should have done already. Honestly, most of us knew this was coming — we just kept putting it off.

Today's Synthesis

NVIDIA expects $1 trillion in agentic AI orders by 2027. Shopify's AI shopping agent orders grew 14x year-over-year. Google's March core update is systematically demoting content that overlaps with what already ranks. These three developments span hardware, commerce, and search — but they share a single structural thesis: every major distribution system is migrating from "who pays more" to "who contributes something unique."

Start with the compute layer. Jensen Huang unveiled Vera CPU, Groq 3 LPU, and a full-stack architecture designed to make agentic AI workloads dramatically cheaper. Alibaba, ByteDance, Meta, and Oracle Cloud all signed on. Meanwhile, Chamber (YC W26) launched with data showing 30-60% of enterprise GPU capacity sits idle — the bottleneck is allocation intelligence, not silicon supply. Connect these two data points and the conclusion is stark: within 12 months, "compute is too expensive" stops being a credible product constraint for nearly any AI application.

When compute becomes abundant, the scarce resource shifts. Google's Information Gain Metric makes this explicit at the content layer. The Gemini 4.0 Semantic Filter now calculates how much your content overlaps with the existing top-100 results. Higher overlap means deeper demotion. Over 55% of sites saw ranking changes within two weeks (SEO Vendor / Search Engine Journal / Quantifimedia). Sites chasing trending topics outside their established expertise dropped up to 60%. E-E-A-T-strong ecommerce sites gained 23% visibility. The algorithm no longer rewards volume. It rewards the delta between what you publish and what already exists.

The commerce layer is running the same logic through a different mechanism. Shopify's Universal Commerce Protocol lets AI shopping agents read a merchant's full data layer — catalog, reviews, loyalty programs, pricing — and complete purchases autonomously. Finkelstein's word for it: "merit-based." Agents don't see ad budgets. They see product data quality, review authenticity, and brand signal clarity. Google's simultaneous test of Sponsored Shops — store-level branded placements evaluated holistically rather than SKU-by-SKU bidding — reinforces the same pattern. A small brand with clean, structured product data can now compete for positions that previously required outspending incumbents.

What makes this moment different from the usual "quality matters" platitude is that three distribution systems are making the shift simultaneously and mechanistically. Google's ranking algorithm literally measures information novelty. Shopify's commerce protocol literally excludes ad spend from agent decision-making. NVIDIA's compute roadmap literally commoditizes the infrastructure that used to be a competitive moat for well-funded AI companies. These aren't aspirational principles. They're engineering decisions already deployed at scale.

The talent market mirrors the same dynamic. Musk admitted xAI "was not built right" and recruited directly from Cursor to rebuild. JetBrains is sunsetting Code With Me just as Claude Code has taken the developer tooling crown in 8 months. An academic at Bar-Ilan wrote in Science that he is seriously considering routing research tasks to AI instead of taking on graduate students. In each case, the selection criterion is the same: who can solve the specific problem right now, regardless of brand, tenure, or institutional affiliation.

There is an uncomfortable edge to merit-based systems, though. The 15% universal tariff and de minimis suspension have structurally broken the cross-border DTC model that Shein and Temu built empires on. Goldman Sachs reports nearly $1 trillion in CTA selling pressure still queued up, with this week's FOMC meeting and $1.3 trillion Triple Witching OPEX creating one of the most technically complex windows in recent memory. China's A-share ChiNext rallied 1.41% independently — institutional money is betting on AI growth even as PMI sits at 49.8, below the expansion threshold. Markets are pricing in the "quality over scale" narrative with real capital, but the macro foundation underneath that bet remains shaky.

Compute abundance, content deduplication, merit-based commerce, skill-specific talent markets. These are projections of the same force across different domains. The playbook that worked for the last decade — outspend on ads, outproduce on content, outscale on headcount — is being algorithmically penalized in every distribution channel simultaneously. What replaces it is harder and less replicable: genuine expertise, unique data, and the ability to create something an AI agent cannot simply regenerate from existing sources. That is the competitive moat for the next 12 months, and the builders who internalize it earliest will compound the advantage fastest.

Comments on "Zecheng Intel Daily | March 17, 2026 Tuesday": 0