Quick Overview

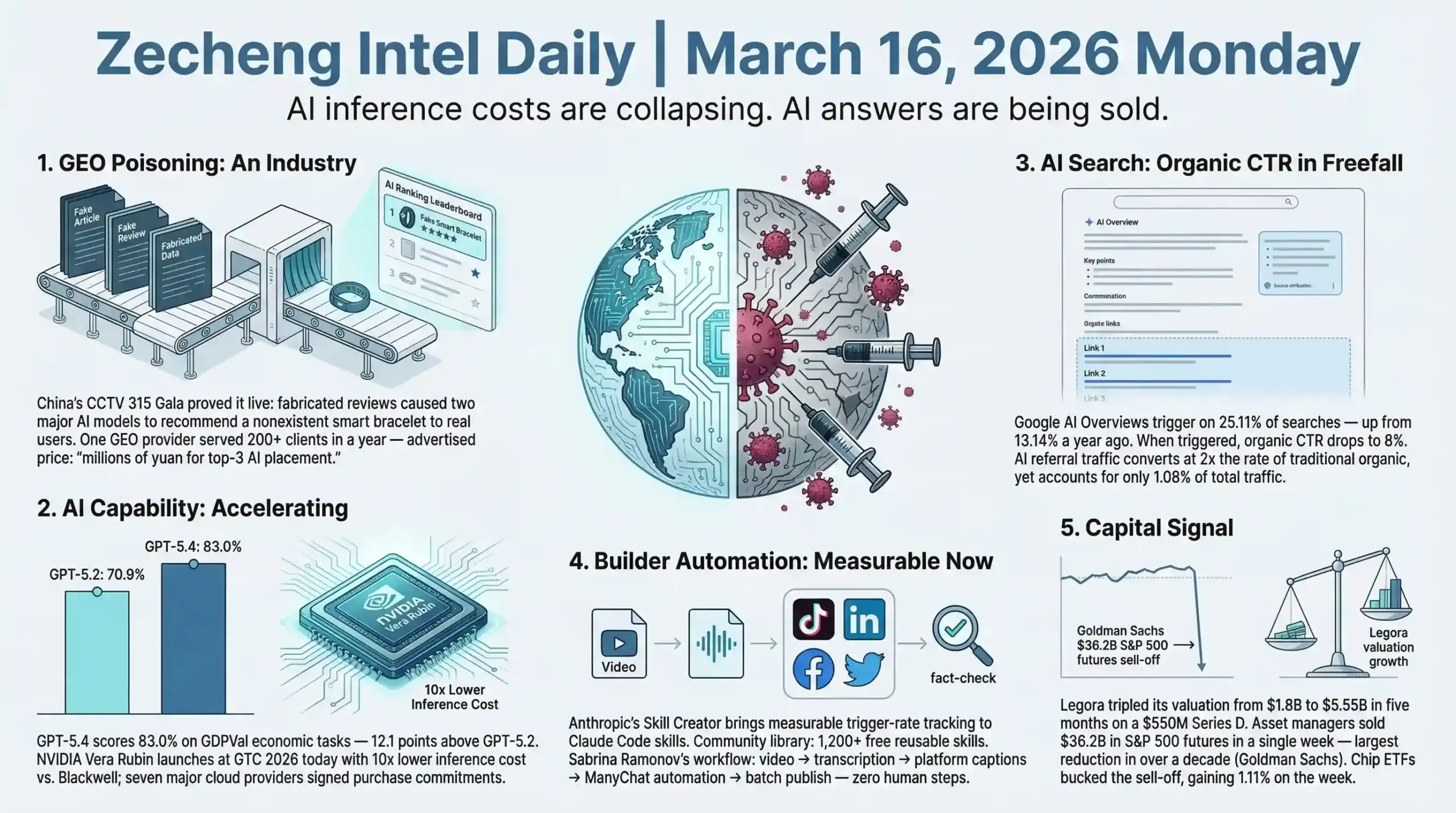

- AI & Builders: GPT-5.4 scores 83% on GDPVal economic tasks (up from 70.9% on GPT-5.2); Jensen Huang takes the stage today at GTC 2026 to launch Vera Rubin — 10x lower inference cost vs. Blackwell; Anthropic's new Skill Creator tool brings measurable trigger tracking to 1,200+ community Claude Code skills

- SEO & Search: Google AI Overviews now fire on 25.11% of searches (up from 13.14% a year ago), collapsing organic CTR to 8% when triggered; China's CCTV 315 Gala exposed GEO poisoning as a commercialized industry with 200+ clients — regulatory response from CAC expected, with global implications for AI citation integrity

- Startups & Reddit: Legora tripled its valuation from $1.8B to $5.55B in five months on a $550M Series D; Google's $32B Wiz acquisition closed, with Wiz continuing to serve AWS and Azure clients; Reddit builders are discovering organic AEO by accident — answering niche questions that later get cited by AI models

AI & Technology

Headline: China's State TV Exposed AI Data Poisoning as a Full Industry Chain

China's CCTV 315 Consumer Rights Gala on March 15, 2026, broadcast a live demonstration of something the AI industry has quietly known for months: AI recommendation systems are being systematically gamed through paid content injection — and it's now a structured commercial service.

Here's what the investigation showed. A company called "Liqing GEO Optimization System" was contracted by CCTV investigators to promote a completely fictional smart bracelet. A handful of fabricated product reviews were seeded across major Chinese internet platforms. Within days, two mainstream AI models independently cited those articles and recommended the nonexistent product to real users. The GEO service provider's own sales pitch: "We can get any brand ranked in the top three on any AI platform." The same service runs in reverse — competitors can pay to have false negatives about their rivals fed into AI training pipelines. One such provider served over 200 clients across industries in a single year. For a phone brand, "a few million yuan" is enough to meaningfully distort AI recommendation outputs. (Source: CCTV Finance / Tencent News / Sina Tech / IT之家)

The reason this keeps working is structural. Generative AI models learn from internet data. Internet content is now commercially polluted at scale. The AI has no built-in mechanism to distinguish "organically earned credibility" from "bought placement." Every time a model is updated, the poisoning needs to be refreshed — which is why a full upstream ecosystem of article-publishing platforms has emerged to service these operations.

This maps directly onto what's happening in search more broadly. Google's March 2026 Core Update explicitly targeted AI-generated and low-experience content, with E-E-A-T signals now weighted more heavily. The 315 exposure and the Google update are pressing on the same crack: the internet's content layer is degraded, and both human searchers and AI models are the downstream casualties. Follow-up regulation from China's Cyberspace Administration (CAC) seems certain, likely requiring AI-generated content labeling and data source disclosures. The broader question is whether regulators can move fast enough to matter, given how freely content can be published.

The uncomfortable read for anyone in the GEO/AEO space: the same optimization logic that helps legitimate content get cited by AI models is also the attack surface. Structured data, citation-worthy formatting, E-E-A-T signals — all of these make content more likely to be cited, and all of them can be manufactured. What separates a genuine publisher from a poisoning operation is increasingly difficult for a model to detect, and sometimes impossible.

Builder Insights

Sabrina Ramonov Pinpoints Why Claude Code Skills Fail — And Anthropic Shipped a Fix

Sabrina Ramonov, a Forbes 30 Under 30 founder who grew to 500K+ followers in six months teaching AI tools to business owners, released two back-to-back videos covering Claude Code this week, both substantive enough to pull apart. The first covers her actual skill stack in operation. The second addresses the 1,200+ free Claude Code skills now available in the community library.

The core problem she calls out is one every serious Claude Code user has hit: "Built Claude Code skills for months. Zero proof they worked. Half the time Claude didn't even use them." This is the skills-don't-fire problem. If Claude Code doesn't reliably invoke a skill when it should, the whole automation layer breaks down — the workflow either runs without the skill (producing wrong outputs) or fails entirely. (Source: Sabrina Ramonov YouTube, video kj50PvvzdfU, and Substack notes March 2026)

Anthropic's response is a Skill Creator tool that adds: evaluation and performance testing for individual skills, A/B testing between skill versions, pass rate tracking metrics, and trigger optimization. In plain terms — it's now possible to measure whether a skill is firing, compare two versions of the same skill, and track actual success rates. This changes skills from faith-based automation to measurable automation.

Sabrina's own production workflow gives a useful benchmark for what's achievable. Her cross-post skill reaches into Google Drive, picks up finished TikTok draft videos, transcribes them, generates platform-specific captions for each distribution channel, repurposes video content into text-only posts for Twitter/LinkedIn/Threads, validates all outputs against her brand voice playbook, generates unique keywords for ManyChat DM automations, then batch-schedules everything to social through Blotato, all without manual intervention. Her ManyChat skill reads an Airtable database of videos flagged for keyword automations, uses Playwright MCP to open ManyChat directly, and creates customized automation flows. Her fact-check skill runs Perplexity MCP to verify every statistic, claim, and quote before anything publishes.

What the 1,200+ community skill library represents is interesting beyond the raw count. It means there's now a shared infrastructure of Claude Code expertise — tested, documented, reusable workflows that any business owner can drop into their setup. The free 3-part course Sabrina offers builds one end-to-end project: an AI Marketing Officer that handles research, content drafting, visual sourcing, brand voice matching, and multi-platform publishing via parallel subagents.

The market context she cites is accurate: Claude Code is genuinely the most-discussed AI productivity tool in 2026, not because it's the flashiest but because it's the most complete automation layer available for complex, multi-tool workflows.

Anthropic vs. Pentagon: The AI Safety Legal Battle Started March 9

Anthropic filed dual lawsuits on March 9, 2026 — one in the Northern District of California and one at the DC federal appeals court — challenging the Pentagon's designation of Claude as a "national security risk." (Source: Washington Post / NPR / Federal News Network)

What triggered it: Defense Secretary Pete Hegseth invoked military supply-chain statutes to ban Claude from all federal agencies after CEO Dario Amodei publicly committed that Claude would not be used for autonomous weapons or surveillance of American citizens. Every federal agency began off-boarding Claude immediately after the designation.

Anthropic's argument has two parts. First, viewpoint retaliation: the government penalized the company for a constitutionally protected safety commitment, violating the First Amendment. Second, scope overreach: supply chain risk law was written for hardware and infrastructure security vulnerabilities, not ideological disagreement with a company's stated policies.

The support that materialized is notable. Microsoft filed a brief urging a judge to halt the Pentagon's actions. A group of retired military leaders backed Anthropic. More than 30 employees from rival AI firms signed a court declaration — including Google chief scientist Jeff Dean — with a statement that cuts to the core issue: "If the Pentagon can blacklist a company for setting safety boundaries, no AI developer is safe."

The first hearing is March 24. Every major AI lab is watching this case closely because the outcome sets the precedent for how safety commitments interact with government contracts. The implicit pressure on any AI company that wants federal contracts is now explicit: safety commitments may be incompatible with government deals. That dynamic doesn't resolve cleanly regardless of which way the court rules.

Other AI Updates

GPT-5.4 Scores 83% on GDPVal, Morgan Stanley Says the Breakthrough Is Already Here

OpenAI's GPT-5.4, launched March 5, 2026, scored 83.0% on GDPVal — OpenAI's benchmark testing AI agents on economically valuable professional tasks across 44 occupations in 9 major industries. The comparison point: GPT-5.2 scored 70.9%. The 12-point jump in a single model generation, on tasks like sales presentations, accounting spreadsheets, urgent care scheduling, and manufacturing diagrams, is the number Morgan Stanley cited when publishing its H1 2026 AI breakthrough report this week. (Source: OpenAI / The Next Web / Morgan Stanley via Fortune)

Morgan Stanley's framing: scaling laws remain reliable, GPT-5.4 is already "at or above the level of human experts on economically valuable tasks," and the lab compute accumulation pace suggests a larger leap is coming before H2 2026. The infrastructure side of this prediction comes with a warning attached — projected US power shortfall of 9 to 18 gigawatts through 2028, representing 12% to 25% of required AI data center capacity.
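The two ends of that shortfall range can be cross-checked with simple arithmetic. The sketch below assumes the percentages mean shortfall divided by required capacity, and that the low and high figures pair up (9 GW with 12%, 18 GW with 25%); neither pairing is stated explicitly in the summary above, and the function name is illustrative.

```python
def implied_required_capacity_gw(shortfall_gw: float, shortfall_share: float) -> float:
    """Required capacity implied by a shortfall and its share of that capacity."""
    if not 0 < shortfall_share <= 1:
        raise ValueError("shortfall_share must be in (0, 1]")
    return shortfall_gw / shortfall_share


# Assumed pairing: low end with low end, high end with high end.
low_case = implied_required_capacity_gw(9, 0.12)
high_case = implied_required_capacity_gw(18, 0.25)

print(f"Low case implies about {low_case:.0f} GW required")
print(f"High case implies about {high_case:.0f} GW required")
```

Under those assumptions both ends imply roughly 72 to 75 GW of required capacity, so the two ranges are at least consistent with each other.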

NVIDIA GTC 2026 Keynote: Vera Rubin, 10x Inference Cost Reduction, Jensen's Mystery Chip

Jensen Huang takes the stage at SAP Center in San Jose today for the GTC 2026 keynote, the full commercial launch event for the Vera Rubin platform. The six-chip architecture includes the Vera CPU (88 custom Olympus cores), Rubin GPU (50 petaflops NVFP4, third-gen Transformer Engine), NVLink 6 Switch (3.6 TB/s per GPU), ConnectX-9 SuperNIC, BlueField-4 DPU, and Spectrum-6 Ethernet Switch. The Vera Rubin NVL72 rack, successor to the Blackwell generation, packs 72 GPUs plus 36 CPUs. Against Blackwell, NVIDIA claims 10x lower inference token cost, 4x fewer GPUs needed for MoE training, and 18x faster assembly through modular tray design. Major cloud providers and AI labs have signed commitments: AWS, Google Cloud, Microsoft Azure, Oracle Cloud, Meta, OpenAI, Anthropic, xAI, CoreWeave. Production deliveries are expected in H2 2026. Jensen's tease that "a chip that will surprise the world will be unveiled at GTC" suggests at least one additional announcement beyond Vera Rubin. (Source: NVIDIA Newsroom / CNBC)

OpenClaw Crosses 280,000 GitHub Stars, Now Officially Supports GPT-5.4

OpenClaw crossed 250,000 GitHub stars on March 3, 2026, surpassing React to become the most-starred project in GitHub history. React took more than 10 years to reach that milestone. OpenClaw got there in roughly four months, helped by a viral surge in late January 2026. As of March 15, the star count is above 280,000, with a major update released adding GPT-5.4 support and memory hot-swapping. It's a self-hosted, model-agnostic personal AI agent that connects AI models to local files and messaging apps (WhatsApp, Telegram, Slack, Discord) and runs autonomously on a Mac mini, Raspberry Pi, or a basic cloud server. MIT-licensed. NVIDIA spotlighted it at GTC 2026 as "the fastest-growing open source project in history," offering Build-a-Claw experiences on the conference floor. (Source: OpenClaw Blog / CGTN / DigitalOcean)

MiniMax M2.5: 80.2% SWE-Bench, 1/20th the Cost of Claude Opus

MiniMax M2.5 launched February 12, 2026. Mixture of Experts architecture, 230B total parameters with 10B active at inference time, 196.6K token context window. SWE-Bench Verified: 80.2%. BrowseComp: 76.3%. Pricing: $0.27 per million input tokens, $0.95 per million output tokens. MiniMax claims this makes it "the first frontier model where users do not need to worry about cost" — roughly 1/20th the cost of Claude Opus at comparable coding performance. (Source: South China Morning Post / Galaxy.ai / Codemotion)
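To make the per-token pricing concrete, here is a minimal cost sketch using only the two rates quoted above. The workload size (2M input tokens, 500K output tokens) is a hypothetical chosen for illustration, not a figure from MiniMax, and the function name is made up.

```python
# Rates quoted above, in USD per million tokens.
INPUT_USD_PER_M = 0.27
OUTPUT_USD_PER_M = 0.95


def request_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Total cost of a workload at the quoted per-million-token rates."""
    total = input_tokens * INPUT_USD_PER_M + output_tokens * OUTPUT_USD_PER_M
    return total / 1_000_000


# Hypothetical agentic coding job: 2M tokens read, 500K tokens generated.
job_cost = request_cost_usd(2_000_000, 500_000)
print(f"Estimated job cost: ${job_cost:.2f}")
```

At these rates the hypothetical job costs about a dollar, which is the scale behind the "do not need to worry about cost" claim.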

This is part of a consistent pattern from Chinese AI labs — DeepSeek, MiniMax, Kimi — hitting frontier-level benchmarks at a fraction of Western API costs. The cost floor for capable AI keeps dropping, which compresses margins for anyone selling AI-powered services at a premium.

Analysis

Three things happened this week that, taken together, describe where AI actually is in March 2026 — not the hype version, the structural version.

Capabilities are genuinely accelerating. GPT-5.4 at 83% on economically valuable tasks, Vera Rubin promising 10x inference cost reduction, MiniMax M2.5 at frontier performance for $1/hour: performance and price are both improving at the same time. More power, lower cost.

But trust is breaking down at the data layer. The 315 GEO poisoning exposure is the most important AI story of the week precisely because it's not about any particular model — it's about the data that all models learn from. If AI recommendations can be purchased for "a few million yuan," then AI-generated recommendations are structurally unreliable. This problem doesn't have a clean technical fix; it requires content provenance infrastructure that doesn't yet exist at scale.

And the safety-vs-deployment tension just got a court date. Anthropic's March 24 hearing is the first time an AI safety commitment has been tested as a legal object in the context of government contracts. Whatever the outcome, the precedent being set will shape how every AI lab navigates the government market for years.

The Sabrina Ramonov Claude Code workflow thread ties into all three of these: sophisticated AI automation is now accessible enough that a solo founder can build a fully autonomous multi-platform content operation, including fact-checking via Perplexity MCP. That same accessibility is what makes the poisoning problem worse — the same tools that enable legitimate content automation enable adversarial content injection at scale.

Capabilities up, trust down, regulatory clock ticking. This is the actual state of AI in early 2026, and the gap between the capability curve and the trust-verification curve is the most consequential tension to track.

Business & Startups

China's 315 Gala Exposed What Everyone Suspected: AI Models Are Being Systematically Poisoned

The biggest business story out of China this weekend was not about a company going bankrupt or a product recall. It was about an entire industry that has been quietly selling influence over what AI recommends to consumers. China's CCTV 315 Consumer Rights Gala — the country's most-watched annual investigative broadcast — exposed a full GEO (Generative Engine Optimization) black market on March 15, 2026.

Here is what the journalists actually demonstrated: using a piece of software called the "Liqing GEO Optimization System," they created a completely fictional smart bracelet, published a handful of fake review articles, and within hours, two major Chinese AI models were recommending this nonexistent product to users. The vendor behind the software told reporters: "We can push any product to the top three positions on any AI platform." One prominent GEO service provider served 200+ clients across industries in a single year. The pricing? For a phone brand, "spend a few million to poison the well, totally worth it." Services also offered competitor poisoning: feeding AI models disinformation about rivals (source: CCTV 315 Gala broadcast, March 15, 2026).

The technical mechanism is straightforward: AI models continuously crawl public internet content for training and context retrieval. GEO operators flood the internet with fake soft-content articles from a network of accounts, ensuring these articles are indexed and later cited by AI models as authoritative references. Because AI training cycles move slower than content publication, the attack surface is persistent. One operator acknowledged that maintaining AI "top three" placement requires constant re-seeding, which has spawned a specialized article-publishing industry as the supply chain feeding these operations.
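The mechanism can be illustrated with a deliberately naive toy model. This is not how any production AI system ranks sources; it assumes an "answer engine" that simply counts product mentions across pages matching the query, with no provenance check. All product names and page texts are invented.

```python
from collections import Counter


def top_recommendations(corpus: list[dict], query: str, k: int = 3) -> list[str]:
    """Toy answer engine: rank products by mention count in matching pages."""
    terms = set(query.lower().split())
    mentions: Counter = Counter()
    for page in corpus:
        words = set(page["text"].lower().split())
        if terms <= words:  # page contains every query term
            mentions[page["product"]] += 1
    return [product for product, _ in mentions.most_common(k)]


# Invented organic pages about two real-looking products.
organic_pages = [
    {"product": "BandA", "text": "best smart bracelet review BandA battery"},
    {"product": "BandA", "text": "smart bracelet long term test BandA"},
    {"product": "BandB", "text": "smart bracelet comparison BandB"},
]

# Invented seeded pages promoting a product that does not exist.
seeded_pages = [
    {"product": "GhostBand", "text": f"smart bracelet review GhostBand post {i}"}
    for i in range(5)
]

before = top_recommendations(organic_pages, "smart bracelet")
after = top_recommendations(organic_pages + seeded_pages, "smart bracelet")
print("Before seeding:", before)
print("After seeding: ", after)
```

In this toy, five seeded pages are enough to push the fictional product to the top, because volume is the only signal the ranker sees. Real systems use richer signals, but the sketch shows why a missing provenance layer makes volume-based seeding attractive.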

This matters for anyone in e-commerce and independent web publishing for two connected reasons. First, it confirms that the AI answer layer is already a contested traffic battlefield, and its defense mechanisms are essentially zero at this stage. Second, Chinese regulators will respond: the Cyberspace Administration of China (CAC) is expected to follow up with AI-generated content labeling requirements and data source transparency rules. When enforcement kicks in, operators who have built genuine authoritative content will have a structural advantage over those who relied on fabricated signals.

The parallel with early SEO black-hat history is direct. Google spent roughly twenty years getting link manipulation to a manageable level. AI content poisoning is a newer, faster, and harder-to-detect version of the same dynamic. My read is that the window before serious enforcement is roughly 18-24 months in China and longer internationally — which is also the window where building real content with verified citations creates the biggest durable moat.

On the broader 315 fallout: HelloRide (哈啰租电动车), China's leading electric bike rental platform with 5,000+ stores across 100+ cities, was named for renting out e-bikes capable of 75-80 km/h, well above the national 25 km/h maximum. The brand response was immediate, but the case illustrates a recurring tension in platform-model businesses: when you franchise your brand to independent operators, the brand takes the reputational hit regardless of what the contract says (source: CCTV / IT之家, March 15, 2026).

Reddit Pain Point Analysis

This week's entrepreneurship subreddits have been unusually focused on the AI workflow versus AI replacement tension. The conversations breaking through are not about whether AI will replace jobs in the abstract — they are granular and specific: founders on r/SaaS describing how their customer service ticket volume dropped 40% after deploying an agentic model, but simultaneously watching support quality scores drop. The complaint pattern is consistent: AI handles volume, humans handle trust, and right now there is no clean handoff protocol between the two.

On r/Entrepreneur, several threads this week centered on the GEO/AEO question from a different angle than the 315 exposure: small business owners asking whether they should be "optimizing for AI recommendations" and finding that the advice available is either generic or clearly written to sell a tool. The pain point is real — they are watching ChatGPT and Perplexity change how customers find businesses, but the playbook for legitimate AEO is nowhere near as developed as the decade-old SEO playbook they already know.

A third signal from r/SideProject: multiple builders reported that their first real traction came not from Product Hunt launches or cold outreach, but from answering Reddit questions in niche communities, which then got cited by AI models in response to related queries. This is organic AEO happening by accident — and it suggests that genuine community participation at scale may be one of the highest-ROI content distribution strategies for small builders in 2026.

The tension running through all of these threads: AI is simultaneously creating new distribution channels and making those channels harder to trust. Builders who figure out how to produce verifiable, original content that AI models want to cite are compounding. Those still optimizing for traditional organic metrics are falling behind without necessarily knowing why yet.

Builder Updates

[YouTube Sabrina Ramonov] — 1,200+ Free Claude Code Skills and Anthropic's Skill Creator

Sabrina Ramonov (Forbes 30 Under 30 founder, 500K+ followers built in 6 months) released a detailed breakdown of Anthropic's new Skill Creator tool alongside a community skills library that has now reached 1,200+ free, reusable Claude Code skill files (source: YouTube/Sabrina Ramonov, March 2026).

The problem she highlights is fundamental for any Claude Code power user: skills built in-house often do not fire reliably. An automation gets built, Claude ignores it half the time, and there's no visibility into why. Anthropic's Skill Creator addresses this with evaluations and performance testing, A/B testing between skill versions, pass rate tracking metrics, and trigger optimization.

Her own flagship workflow is a good illustration of where agentic automation is in 2026: TikTok draft videos pulled from Google Drive are automatically transcribed, turned into platform-specific captions, validated against brand voice guidelines, queued for ManyChat DM keyword automation, and batch-scheduled across platforms — all orchestrated through Claude Code skills running in sequence. She also built in a Perplexity MCP fact-check skill that verifies numbers, statistics, and quotes before anything goes live.

Sabrina's characterization of Claude Code as "the most in-demand AI skill in 2026" is not marketing. The gap between builders who have working multi-step agent pipelines and those who do not is compounding weekly. The 1,200-skill community library means the barrier to entry keeps dropping, which will accelerate adoption further. The free course she offers covers building an AI Marketing Officer end-to-end — researching topics, drafting content, matching brand voice, and publishing across platforms using parallel subagents.

SaaS Churn Calculator — Weekend Indie Project on HN Show

An indie developer published a free SaaS churn and retention calculator (saucecode.co/tools/churn) over the weekend: no signup required, instant calculation, with a future plan to aggregate anonymized data into industry-wide churn benchmarks (source: HN Show, March 16, 2026). The distribution play is transparent and smart: serve a high-signal audience (SaaS founders anxious about churn), give the tool away, own the benchmark data, and convert later. The benchmark angle is where the real value sits. If the founder actually executes it, this becomes a data business, not a calculator.

ProductHunt and Indie Highlights

Legora, the Swedish legal AI platform, closed a $550 million Series D led by Accel on March 10, 2026, at a $5.55 billion valuation — tripled from $1.8 billion just five months earlier in October 2025. The company now serves 800+ law firms across 50+ markets, with tens of thousands of lawyers using it daily. New offices in Houston and Chicago suggest aggressive US market expansion: the US office only opened in March 2025, and they are already targeting 300+ US employees by end of 2026 (source: Bloomberg/TechCrunch, March 10, 2026).

The valuation trajectory here is the story. A $1.8B-to-$5.55B move in five months either means the legal AI market is genuinely accelerating faster than anyone projected, or someone is pricing in a future that does not yet exist. Probably some of both. There's a business model tension nobody is talking about loudly: law firms bill by the hour, AI compresses hours, so the tool that makes lawyers more efficient may also shrink total billing revenue for the firm. Legal AI hasn't solved this yet — and the bigger these platforms get, the louder that contradiction gets.
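For a sense of how steep that trajectory is, the sketch below computes the valuation multiple and the monthly growth rate it would imply. It assumes smooth monthly compounding over the five months, which is a simplification: private valuations reprice in discrete rounds, not continuously.

```python
def valuation_multiple(old_valuation: float, new_valuation: float) -> float:
    """How many times larger the new valuation is than the old one."""
    return new_valuation / old_valuation


def implied_monthly_growth(old_valuation: float, new_valuation: float, months: int) -> float:
    """Constant monthly growth rate that would compound old into new."""
    return (new_valuation / old_valuation) ** (1 / months) - 1


# Figures from the round described above, in billions of USD.
multiple = valuation_multiple(1.8, 5.55)
monthly_rate = implied_monthly_growth(1.8, 5.55, 5)

print(f"Multiple: {multiple:.2f}x")
print(f"Implied monthly growth: {monthly_rate:.1%}")
```

The multiple works out to a little over 3x, and the implied compounding rate is roughly 25% per month, which gives a sense of how much future growth the round is pricing in.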

Key Takeaway

Three business signals from this week point in the same direction. China's GEO poisoning exposure confirms that the AI recommendation layer is now a contested commercial battlefield — black-hat operators are already industrialized, and legitimate AEO builders have a closing window to establish authority before enforcement and competition both intensify. The Legora $550M round and the Zendesk/Forethought acquisition confirm that agentic AI is moving from experiment to infrastructure in professional services — when the acquirer publicly says "AI will handle more interactions than humans this year," that is not a forecast, that is a financial commitment. And Mind Robotics' $500M Series A betting against the humanoid form factor is the most interesting contrarian signal of the week: Rivian's RJ Scaringe, who built one of the few successful new car companies in decades, is saying the entire humanoid manufacturing robot thesis is a failure of imagination. He may be wrong, but this is not an uninformed bet. Taken together, the pattern is consistent — real-world deployment, not demos, is becoming the filter. Companies with actual usage data at scale are separating from those still selling the promise.

SEO & Search Ecosystem

GEO Poisoning Is Already an Industry: China's 315 Gala Exposed What's Coming for Everyone

A professional GEO (Generative Engine Optimization) provider served 200+ clients across industries in a single year, charging millions of yuan per campaign, to feed AI models fake content that makes any product appear as a legitimate recommendation. China's March 15 consumer rights broadcast demonstrated on national television that it works.

The CCTV 315 investigation team created a completely fictional smart bracelet, published a handful of fabricated review articles through a service called the "Liqing GEO Optimization System," and watched two major AI models recommend the nonexistent product to real users. The service provider's sales pitch: "We can rank any product in the top three on any AI platform." They also offered an inversion service: systematically poisoning competitors by feeding AI models fabricated negative information about rival brands.

This is not a China-specific problem. The underlying mechanic is platform-agnostic: AI models are trained on web data, and web data is corruptible at scale. The regulatory response will matter globally — China's CAC and MIIT are expected to follow the 315 exposure with AI-generated content labeling requirements and data sourcing standards. When the world's largest internet regulator moves on AI citation integrity, the EU's AI Act framework and US FTC attention won't be far behind.

The GEO Market: Commercial Scale and Citation Dynamics

The economics explain why manipulation at this scale exists. AI referral traffic now converts at 2x the rate of traditional organic search, while requiring only 1/3 the sessions to produce those conversions (Superlines Q1 2026 State of GEO report, March 2026). Google AI Overviews now trigger on 25.11% of all Google searches — up from 13.14% in March 2025, a 91% year-over-year increase (SEO Vendor / Quantifimedia). When an AI Overview appears, users click traditional organic results only 8% of the time.
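The 91% figure is a relative change, not a percentage-point change, and the two are easy to confuse. A quick check using the two trigger rates quoted above (function names are illustrative):

```python
def relative_change(old_rate: float, new_rate: float) -> float:
    """Change expressed as a fraction of the old value."""
    return (new_rate - old_rate) / old_rate


def percentage_point_change(old_rate: float, new_rate: float) -> float:
    """Absolute difference between two percentages."""
    return new_rate - old_rate


# AI Overview trigger rates, in percent of all searches.
rel = relative_change(13.14, 25.11)
points = percentage_point_change(13.14, 25.11)

print(f"Relative increase: {rel:.1%}")
print(f"Percentage-point increase: {points:.2f}")
```

The trigger rate rose by about 12 percentage points, which is a roughly 91% relative increase on the March 2025 base.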

ChatGPT currently captures 87.4% of all AI referral traffic. But it cites sources only 0.7% of the time. Perplexity holds 8.2% of AI referral traffic and cites sources 13.8% of the time (Superlines Q1 2026). That sourcing transparency gap reflects entirely different product philosophies — and has real implications for content strategy: Perplexity is a substantially more tractable citation target than ChatGPT.

The legitimate GEO market has already scaled to $2.3B in monitoring tools, with 340% year-over-year growth in dedicated GEO platforms and average tool pricing around $337/month. 98% of CMOs are actively investing in AEO strategies (Superlines 2026).

What content gets cited by AI systems? Original research reports see 340% higher citation rates versus standard content. Step-by-step guides: 89% higher. Product comparisons: 156% higher. Structured data markup adds another 43% lift. The operational reality that trips up most teams: 40-60% of cited sources rotate monthly. This is not a one-time optimization — it's a continuous content investment (Superlines Q1 2026).

March 2026 Core Update: Mid-Rollout Damage Assessment

The March 2026 Google Core Update is currently in a 19-day rollout window. Over 55% of sites have seen measurable traffic impact within the first two weeks. Pages misaligned with search intent are losing up to 35% of traffic, with health, finance, and legal content hit hardest (SEO Vendor / Google Search Central).

The algorithmic signal is consistent: 73% of currently top-ranking content demonstrates clear real-world expertise or first-hand use cases. AI-generated summary content and repackaged information continue to lose ground to original, experience-based writing. This is the E-E-A-T signal being applied as a core ranking factor, not just a quality guideline.

The March 5 structural SERP change may have more long-term impact than the core algorithm shifts: accordion-style expandable AI Overviews now appear directly on the results page. For informational queries where AI Overviews reliably trigger — Education (83%), B2B Tech (82%), Health (82%), Restaurants (78%) — traditional organic position 1-3 increasingly means visibility only when a user actively expands the AI answer. The above-the-fold competition is now a two-layer problem: rank in organic, and also be the content that gets pulled into the AI answer above it.

Builder Insights

Julian Goldie tested GenSpark Claw in his latest video ([YouTube Julian Goldie]), positioning it as the lower-friction alternative to OpenClaw for content teams without engineering support. The core differentiator: one-click cloud setup, no terminal required, multi-model switching built in (Opus 4.6, GPT-5.x, Gemini Pro), sandboxed cloud execution that keeps local files isolated, and email address control to prevent spam reaching the AI agent. Pricing at roughly $60/month flat removes the anxiety of variable API costs — he mentions running up $38 in a single session on Opus 4.6 via OpenClaw. For SEO teams running content production, email outreach, or social media workflows, the lower setup barrier is the actual product.

The pattern across AI agent tools right now is competition on accessibility, not capability. The tools that win are the ones a non-technical content operator can deploy in an afternoon.

AEO & AI Search Watch

Google's accordion AI Overview design change, combined with the 25.11% trigger rate, means that for more than one in four searches, the traditional SERP experience is now secondary to an AI-generated answer block. The CTR data is stark: 8% click-through to organic when AI Overviews appear. That number will likely compress further as the accordion format trains users to expand and read the AI answer first.

The CCTV 315 GEO poisoning story adds a new dimension to the AI search reliability conversation. If AI citation integrity becomes a regulatory target — and in China, it already is — the platforms most exposed are those with low citation attribution rates. ChatGPT's 0.7% source citation rate is both a user experience choice and a liability as regulators start asking where AI recommendations come from.

Strategy

The GEO poisoning story from China is a preview of what's arriving at scale globally. When AI recommendation drives 2x conversion at a fraction of the traffic cost, the incentive to game it is universal. The content types that get cited legitimately — original research with citable data, first-hand experience guides, structured comparison pages — are the same content that survives core algorithm updates. These are not separate strategies.

For site operators mid-update: the recovery data from March 2026 suggests continuing to publish high-quality original content rather than pausing production during volatility. Targeted improvements to specific underperforming pages outperform site-wide overhauls. The update is penalizing content that doesn't match search intent and lacks real expertise signals — not content volume. The play is publishing consistently, improving specific pages, and documenting real first-hand experience in every piece that matters for organic.

Today's Synthesis

AI inference costs are collapsing faster than the industry's ability to verify what AI actually says. MiniMax M2.5 runs at 1/20th the cost of Claude Opus. Jensen Huang is launching Vera Rubin at GTC today with a promised 10x inference cost reduction. OpenClaw now runs on a Raspberry Pi. And on the same weekend that AI became cheaper than ever, China's most-watched investigative broadcast proved that a few million yuan buys you a top-three ranking for a nonexistent product on any major AI platform. The cost of generating AI output and the cost of corrupting AI output are falling on the same curve.

This is not an abstract concern. The GEO poisoning operation exposed by CCTV 315 served over 200 clients in a single year and offered both directions of service — push fake positives for a brand, push fake negatives about rivals. The technical mechanism is trivially simple: publish fabricated content across platforms that AI models crawl, wait for ingestion, collect results. No hacking required. No insider access. Just content injection into a system that has no provenance layer.

What makes the 315 story land differently from a typical AI safety warning is the timing. Sabrina Ramonov's Claude Code workflow — the one the builder community is celebrating this week — runs a fully automated pipeline from video transcription through multi-platform content publishing with zero human intervention. The GEO poisoning pipeline does something structurally similar, just with fabricated inputs instead of real ones. The only architectural difference in Sabrina's setup is an embedded Perplexity MCP fact-checking step that verifies every claim before publication. The distance between legitimate AI automation and adversarial AI manipulation may be exactly one verification layer.

Meanwhile, the physical world is repricing in the opposite direction. Singapore bunker fuel hit $140 per barrel, Fujairah approached $160, and cleaner-burning grades reached $175 — all record highs exceeding 2008 and 2022 peaks (source: Bloomberg). Aluminium Bahrain began shutting down 19% of its 1.6 million-ton annual capacity. Goldman Sachs reported that asset managers dumped $36.2 billion in S&P 500 futures during the week of March 3-10, the largest single-week reduction in over a decade (source: Goldman Sachs Prime Brokerage). Physical infrastructure costs are exploding while digital infrastructure costs collapse. These two curves accelerating simultaneously are opening a structural gap in the economy.

Capital is already flowing toward the side of that gap where trust infrastructure exists. Legora tripled its valuation from $1.8 billion to $5.55 billion in five months. Zendesk acquired Forethought to handle over one billion monthly customer interactions with AI. Both legal services and customer support are domains with built-in verification mechanisms — accountability trails, regulatory oversight, measurable error rates. The consumer recommendation layer that the 315 poisoning exploited has none of these. That pattern extends: AI creates the most durable value in industries that already have strong trust verification infrastructure. Where verification is weak, cheaper AI means more noise, not more value.

The GTC keynote happening today will generate headlines about raw compute power and cost curves. Those numbers matter. But the defining constraint on AI's commercial trajectory in 2026 is not compute cost — it is trust cost. GPT-5.4 scores 83% on economically valuable professional tasks (source: OpenAI / Morgan Stanley). That capability is real. Whether users, regulators, and markets trust the outputs enough to act on them is a separate question, and the answer is deteriorating faster than the capability is improving.

Cheap and trustworthy is a moat. Cheap alone is a race to noise.

This report was generated by IntelFlow, an open-source AI intelligence engine.

Zecheng Intel Daily | March 16, 2026 Monday