- Five Judgments This Week

- Builders This Week: Who Built What, and What It Tells Us

- Shopify's CEO Wrote a PR That Most Engineering Teams Couldn't

- @levelsio: $87K MRR in 17 Days, Solo, AI Vibe Coding

- NanoClaw: Weekend Project to Docker Enterprise Partnership in Six Weeks

- Samuel Colvin Built a Python Interpreter for Agents Over Christmas

- Reddit Pain Points: The Questions Founders Are Actually Asking

- Builder Weekly Observations

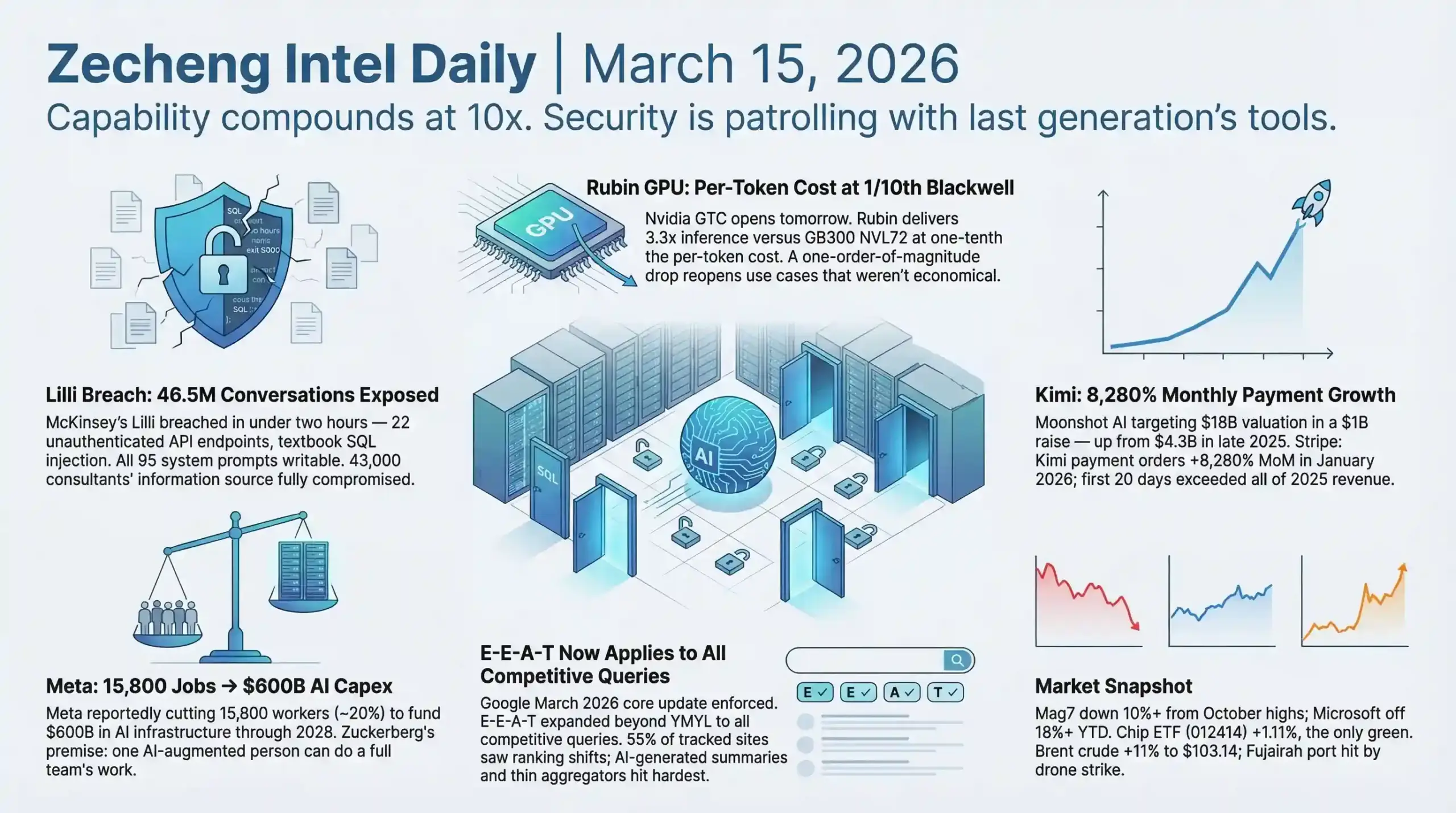

- AI: What Actually Happened in the Technology World This Week

- Enterprise AI Is Replacing People While AI Security Infrastructure Runs a Generation Behind

- McKinsey Lilli: The Breach That Shows How Fragile Enterprise AI Actually Is

- xAI Admits Its Architecture Is Wrong — After a $1.25 Trillion Valuation

- Anthropic vs. the Pentagon: The First Legal Test of AI Safety Independence

- Anthropic Code Review: AI Managing AI-Generated Code

- Yann LeCun's $1 Billion Bet Against the LLM Paradigm

- GPT-5.4, Computer Use, and the Agent Capability Ratchet

- Noise vs. Signal This Week

- Business and Startups: Where Capital Is Flowing

- The Layoff-as-AI-Investment Playbook Has Become a Template

- Oracle Q3: $553 Billion in Signed AI Contracts

- Lovable, Replit, and the AI Coding Tool Revenue Surge

- Kimi / Moonshot AI: What Explosive Growth Looks Like at the Application Layer in China

- Google Closes $32 Billion Wiz Acquisition — Its Largest Deal Ever

- SpaceX IPO and the Valuation-Reality Gap

- Business Signals This Week

- SEO and the Search Ecosystem: The Rules Changed This Week

- Google March 2026 Core Update: E-E-A-T Requirements Now Apply to All Competitive Queries

- The Ranking-Citation Decoupling Is Now Measurable

- Content Optimization for AI Agents: A New Practice Is Emerging

- AEO and GEO: The New Traffic Currency

- The Publisher Toll Is No Longer Incremental — It Is Structural

- The Agentic IDE Race and What It Means for Builder Workflows

- Geopolitics, Energy, and the Macro Backdrop

- This Week's Synthesis: What All of It Means Together

Zecheng Weekly | March 9 - 15, 2026

Five Judgments This Week

- Enterprise AI replacement is running on a quarterly cycle. AI security infrastructure is running on last generation's tools. The speed gap between them is the largest systemic risk source of Q1 2026.

- ActivTrak's 443-million-hour dataset proved that inserting AI into existing workflows makes people busier, not less busy. Enterprises responded by skipping straight to autonomous agents replacing entire workflow segments. The prerequisite for that leap — reliable, secure AI systems — was brutally exposed as missing this week.

- Individuals with AI tools are producing institutional-grade output. Institutions with trillion-dollar budgets are admitting their foundations are broken. This asymmetry is accelerating.

- The correlation between Google ranking and AI citation has collapsed from 70% to below 20%. Ranking first no longer means getting cited. Two separate optimization games are now running in parallel, with different rules, different gatekeepers, and different winners.

- Anthropic suing the Pentagon is the first legal test of whether AI companies can maintain safety positions against government coercion. The outcome will set precedent for the entire industry's relationship with institutional power.

Builders This Week: Who Built What, and What It Tells Us

Shopify's CEO Wrote a PR That Most Engineering Teams Couldn't

Tobias Lutke, CEO of a company worth over $100 billion, submitted GitHub PR #2056 against Liquid — Shopify's open-source Ruby template engine that powers every Shopify storefront. The result: 53% faster parse-and-render speed, 61% fewer memory allocations, benchmarked against real Shopify production themes (source: Simon Willison, March 13, 2026).

The method matters more than the result. Lutke used a variant of Andrej Karpathy's autoresearch system — an AI coding agent running automated experiments in a loop: edit, commit, test, benchmark, keep or discard, repeat. Approximately 120 experiment loops. 93 commits. One person. The core optimization insight was allocation-driven profiling: find where the code creates objects unnecessarily, eliminate them, defer the rest. One concrete change: replacing a StringScanner tokenizer with String#byteindex.

This is not a demo or a side project. Liquid processes every page load on every Shopify store. A CEO at a hundred-billion-dollar company used AI-assisted automated research to produce a production-grade performance optimization that most engineering teams would staff a project for. The gap between what one person can produce with AI tooling versus without it is now visible in commit histories.

Lutke's internal memo to Shopify employees (source: Fast Company) sets the organizational context: managers requesting additional headcount must first demonstrate that AI cannot do the job. That statement and Atlassian cutting 1,600 people to "self-fund AI investment" are nodes on the same logic chain. The difference: Lutke verified what AI can do before making organizational decisions. Atlassian and Meta cut first and are hoping AI fills the gap.

@levelsio: $87K MRR in 17 Days, Solo, AI Vibe Coding

Pieter Levels announced that fly.pieter.com — a free-to-play browser MMO flight simulator built with AI code generation tools — crossed $87,000 in monthly recurring revenue 17 days after launch. That annualizes to over $1 million. 320,000 players have flown in the game. The monetization is in-game advertising: branded blimps and F-16s sold as advertising inventory inside the virtual airspace, with sponsors paying $5,000 per month per placement (source: Twitter @levelsio).

Solo founder. No team. No VC. Built with AI vibe coding. The product is free — zero friction to enter — and monetization is async from user experience. Advertisers pay; users fly for free.

Whether this sustains or decays with novelty is genuinely uncertain. What is not uncertain is the existence proof: a single person with AI tools shipped a revenue-generating product at a pace that would have required a small studio three years ago.

NanoClaw: Weekend Project to Docker Enterprise Partnership in Six Weeks

Gavriel Cohen posted NanoClaw on Hacker News six weeks ago after building it over a weekend. It accumulated 22,000 GitHub stars, 4,600 forks, and 50-plus contributors. On March 13, Docker announced a formal partnership: NanoClaw AI agents will run inside Docker Sandboxes — disposable MicroVM containers providing OS-level isolation. Cohen shut down his AI marketing startup to go full-time on NanoClaw through a new company called NanoCo. Docker has 80,000 enterprise customers (source: TechCrunch, March 13).

The go-to-market path here is backwards from the traditional VC playbook. Open-source traction pulled the enterprise deal, because Docker needed the use case. Enterprise security requirements for AI agent isolation are creating pull, not just push. The weekend project to enterprise partnership pipeline is now a documented path.

Samuel Colvin Built a Python Interpreter for Agents Over Christmas

Pydantic founder Samuel Colvin spent his Christmas holiday writing 30,000 lines of Rust code with AI assistance to build Monty — a fast Python interpreter designed specifically for agent workloads (source: Latent Space podcast, March 2026).

The motivation came from four separate conversations with Anthropic employees who independently kept arriving at the same conclusion: type safety in chained tool calls is critical for reliable agents. Monty sits between two existing extremes. Simple tool calls are safe but expressively limited. Full sandboxes are powerful but operationally heavy. A venture investor Colvin spoke with estimated that roughly 70% of sandbox calls are functionally tool calls or close variants — meaning most people are using a sledgehammer where a precision tool would work better.

Colvin is three levels deeper than most builders. He is building for agents — not just with agents — solving problems that most people will not encounter until they have already deployed something at scale. The builders who identified these infrastructure needs early are building for a world where agents are the default compute substrate.

Reddit Pain Points: The Questions Founders Are Actually Asking

The most persistent question across r/SaaS, r/Entrepreneur, and r/SideProject this week: how do you get ChatGPT, Claude, Perplexity, or Gemini to recommend your product? The Marketing for Founders repository on GitHub (source: EdoStra, MIT license, actively maintained into 2026) documents this as the single most common question from founders in 2025 and into 2026.

That question signals a real gap. Traditional SEO playbooks do not translate directly into AI answer engine visibility. The founders solving this fastest are structuring content to be directly citable: concrete answers in the opening paragraph, sourced data points, paragraphs that stand alone as responses to specific queries.

A secondary pattern: cold outreach is entering a trust crisis. AI-generated email volume is collapsing signal-to-noise ratios. The founders still getting responses are doing one thing differently — referencing something specific and recent about the prospect rather than generic pain-point framing. The human signal in the first line is what gets it read.

On r/digital_marketing, the thread "what marketing advice sounds smart but almost never works?" went viral. The top-voted answer: "Post every day on every platform." The consensus underneath: platform-first content advice fails because it ignores where the actual audience lives. Tools that 10x content output do not fix a bad distribution strategy — they 10x bad content faster.

Builder Weekly Observations

Four signals from this week's builder activity point in the same direction.

Lutke's PR proves that AI-assisted research can produce production-grade infrastructure improvements at the individual level. Levels' flight sim proves that AI code generation enables solo founders to ship revenue-generating products at studio pace. NanoClaw proves that open-source traction can pull enterprise partnerships faster than traditional GTM. Colvin's Monty proves that the infrastructure layer for agents is being built by practitioners who are several levels ahead of the mainstream.

The common thread: the builders who are compounding right now share a specific discipline — they are using AI to solve concrete problems against real data, not using AI to move faster on unclear problems. Lutke ran 120 experiments against production benchmarks. Levels built a product users pulled out credit cards for. Cohen shipped something an enterprise needed. Colvin built something four separate Anthropic engineers independently said was missing. None of them started with "let me build an AI startup." They started with a problem.

AI: What Actually Happened in the Technology World This Week

Enterprise AI Is Replacing People While AI Security Infrastructure Runs a Generation Behind

ActivTrak published the largest empirical dataset on enterprise AI productivity ever assembled: 443 million hours of work activity across 163,638 employees in 1,111 organizations, tracked over three years (source: ActivTrak 2026 State of the Workplace, March 11, 2026).

The headline finding contradicts the narrative driving enterprise layoffs: AI tool adoption reached 80%, organizations now average seven or more AI tools (up from two in 2023), AI tool usage time grew eightfold — and every measured work category increased. Emails sent up 104%. Instant messaging up 145%. Time in business management tools up 94%. Collaboration surged 34%. Multitasking rose 12%. Saturday work increased 46%. Sunday work increased 58%. Focus time fell to a three-year low. AI tools are accelerating work, not reducing it.

The same week, Atlassian cut 1,600 people — 10% of its global workforce — with CEO Mike Cannon-Brookes explicitly stating the cuts are to "self-fund AI investment." Restructuring costs: $225-236 million. Meta reportedly moved toward laying off approximately 16,000 people — 20% of its workforce — to convert human labor budget into compute budget for its $600 billion AI infrastructure pipeline through 2028 (source: TechCrunch / Reuters / Fox Business, March 13-14). Block ran the identical playbook days earlier. Amazon has cut over 30,000 employees since October 2025, then required the remaining workforce to absorb that capacity using what over 1,000 employees described in an internal petition as "half-baked" AI tools that frequently produce errors requiring constant verification (source: Business Insider).

These signals are not contradictory. They are sequential phases of the same transition.

Phase one: enterprises insert AI into existing workflows as productivity boosters. The result is the ActivTrak data — more work, not less. Every AI output becomes a checkpoint requiring human verification, correction, and coordination. The tool generates; the human cleans up. Net effect: negative productivity despite increased output velocity.

Phase two: enterprises stop augmenting humans and start replacing entire workflow segments with autonomous agents. Capital is flowing into this phase at speed. Gumloop raised $50 million from Benchmark to let non-technical employees build AI agents with drag-and-drop interfaces. Rox AI hit a $1.2 billion valuation in under two years, building AI-native CRM to eliminate manual data entry, prospect research, and pipeline management. Databricks launched Genie Code with a 77.1% autonomous task success rate on real-world data engineering tasks — more than double what existing coding agents achieve (source: Databricks Newsroom). Simple AI, backed by YC, is routing inbound sales calls through AI agents with zero human involvement — Omaha Steaks, the century-old American beef brand, now sends calls from its main website number directly to AI. Letter AI closed a $40 million Series B to rebuild sales training with AI role-play simulation, with clients including Lenovo, Adobe, and Novo Nordisk.

The Atlassian detail that cuts deepest: this is the company that makes Jira and Confluence — the defining tools for human coordination in software teams. When the maker of coordination software decides AI agents can replace coordination-layer employees, that is not a cost-cutting signal. That is a structural thesis about where enterprise work is headed.

But jumping from phase one to phase two has a prerequisite that was brutally exposed this week: AI systems must be reliable and secure enough to absorb the judgment capacity being removed from the human workforce.

McKinsey Lilli: The Breach That Shows How Fragile Enterprise AI Actually Is

McKinsey's internal AI platform Lilli was fully compromised on February 28, 2026, in under two hours by an autonomous security agent built by CodeWall (source: codewall.ai / theregister.com / inc.com).

The exposure: 46.5 million consulting conversations stored in plaintext — covering strategy, M&A, and client content — alongside 728,000 confidential documents, 57,000 user accounts, 3.68 million RAG document chunks, and 95 system prompts with write access.

The entry point was embarrassingly simple. Of over 200 API endpoints, 22 required zero authentication. One concatenated JSON field names directly into SQL statements — a textbook injection vulnerability that OWASP puts in its Top 3, that university courses teach as a first example of what not to do. McKinsey's deployed security scanner, OWASP ZAP, did not catch it. An AI security agent found it through fifteen iterations of blind prompt injection-style SQL injection, using error messages to reverse-engineer the query structure.

The writable system prompts are where this gets genuinely dangerous. An attacker who overwrites the 95 system prompts controlling Lilli's outputs can poison what 43,000 McKinsey consultants see and believe. This is not data theft. It is a trust hijack. Consultants trust Lilli because it is an internal tool. Internal tools carry implicit trust that external sources do not. An adversary who controls Lilli's outputs controls the strategic recommendations flowing to Fortune 500 boardrooms — and nobody in the chain knows the source is compromised.

The timeline compounds the problem: breach on February 28, CISO acknowledged notification on March 2, public disclosure on March 9. Seven days of unknown exposure while 43,000 consultants continued querying a potentially compromised system. McKinsey stated its investigation found no evidence that client data was accessed by unauthorized parties. The question of whether the system prompts were modified during the exposure window remains unanswered.

This is not an isolated incident. IBM's 2026 X-Force Threat Intelligence Index reported that vulnerability exploitation became the leading cause of attacks, accounting for 40% of incidents observed — a 44% increase driven by missing authentication controls and AI-enabled vulnerability discovery (source: IBM Newsroom, February 2026). PoisonedRAG research quantified an even more subtle attack surface: inject approximately five engineered documents into a million-document corpus — 0.0002% of the total — and achieve 97% attack success on targeted queries, because the malicious documents score higher cosine similarity to the target query than legitimate documents (source: USENIX Security 2025). The attack happens at the retrieval layer, the part of AI systems that is least audited and least understood by most deployment teams.

GitHub saw a parallel attack this week: prompt injection embedded in Issue titles triggered AI triage bots to execute hidden commands, compromising approximately 4,000 developer machines. The Claude Code terraform destroy incident — where the AI agent deleted 1.94 million rows of production student data by executing correct logic on incomplete inputs — is another data point in the same pattern. AI agents in the "read external input, execute action" architecture turn any unverified input into a potential attack surface.

xAI Admits Its Architecture Is Wrong — After a $1.25 Trillion Valuation

Elon Musk publicly acknowledged that xAI "wasn't built right" and is being rebuilt from the foundations up (source: CNBC / Bloomberg / TechCrunch, March 13, 2026).

Of the 12 co-founders who started xAI in 2023, only two remain: Manuel Kroiss and Ross Nordeen. The departures include the technical core — Guodong Zhang, Zihang Dai, Toby Pohlen, Jimmy Ba, Tony Wu, Greg Yang. The flagship AI agent project Macrohard is dead. Tesla absorbed it, renamed it "Digital Optimus," and scrapped the technical approach entirely — from screenshot-based control to real-time video control.

Six weeks ago, SpaceX and xAI merged at a $1.25 trillion combined valuation. The announcement was full of momentum. Six weeks later: the architecture is wrong, the founding team is gone, the flagship product is scrapped.

Musk is now recruiting from Cursor AI's coding startup and reaching back out to candidates who previously declined. He has said xAI expects to catch competitors by mid-year. Meanwhile, Nvidia GTC opens tomorrow. Jensen Huang's keynote will feature the Rubin GPU — 288GB HBM4 memory, inference performance 3.3x the GB300 NVL72, bandwidth exceeding 3 TB/s, per-token cost one-tenth of Blackwell (source: nvidia.com). Groq's founding team, including CEO Jonathan Ross, has joined Nvidia to advance inference architecture. The competition is playing the next game while xAI is rebuilding the foundation for the current one.

The sequence matters: the valuation was given before the technical problems were acknowledged. When capital heat is high enough, technical readiness gets overlooked — and the correction happens later, at higher cost, in public.

Anthropic vs. the Pentagon: The First Legal Test of AI Safety Independence

Anthropic filed suit against the Trump administration on March 9 after the Department of Defense designated it a "national security supply chain risk" — the first American company ever publicly named under this designation, a tool traditionally reserved for foreign adversaries (source: Axios / CNBC / CNN).

The underlying dispute: during contract renegotiations, Anthropic sought written guarantees that Claude models would not be used for fully autonomous lethal weapons systems or domestic mass surveillance. The DoD's position: unrestricted access for all lawful purposes. When talks broke down, the Pentagon used the supply chain risk designation as a commercial weapon. Anthropic estimates the designation could cost "hundreds of millions, or even multiple billions, of dollars in lost revenue" for 2026. OpenAI moved immediately to fill the contract gap.

The legal challenge runs three tracks: First Amendment (punishing a company for publicly stating safety positions is viewpoint discrimination), Fifth Amendment due process (severe commercial penalties without meaningful opportunity to contest), and Administrative Procedure Act (the designation was arbitrary and unsupported by evidence). Reuters-sourced legal experts assessed Anthropic "appears to have a strong case." Microsoft filed an amicus brief on March 10 seeking a temporary restraining order.

Dwarkesh Patel's analysis cuts to the structural stakes: within 20 years, AI systems will likely power most military, government, and private sector operations. The cost of running surveillance infrastructure drops dramatically as inference gets cheaper. Real-time monitoring of entire populations, once economically prohibitive, becomes feasible. The question of who determines AI alignment is one of the most consequential of this decade — and it is being litigated right now.

What makes this structurally different from typical tech-government friction is the mechanism. This is not regulation, legislation, or a fine. The DoD used procurement power and supply chain designations to punish a domestic AI company for its policy positions. If this designation stands, every AI company with government contracts understands what compliance looks like: safety opinions that conflict with government demands are a liability.

Anthropic Code Review: AI Managing AI-Generated Code

Anthropic released a multi-agent Code Review system for Claude Code this week. The internal data point that motivated the build: engineer code output grew 200% in the last year at Anthropic. The bottleneck shifted from writing code to verifying code. Before Code Review, only 16% of PRs received substantive review comments. After deployment: 54%. On large PRs with 1,000-plus lines, 84% trigger findings averaging 7.5 issues per review. Cost: $15-25 per PR, averaging 20 minutes (source: Anthropic).

AI is now managing AI-generated code. The toolchain is closing the loop from generation to verification. Anthropic is not competing at the API provider level anymore — it is embedding into engineering workflows at a depth that makes displacement nearly impossible. Code generation is a feature. Code lifecycle management is infrastructure.

Yann LeCun's $1 Billion Bet Against the LLM Paradigm

AMI Labs closed a $1.03 billion seed round at a $3.5 billion pre-money valuation — Europe's largest seed deal ever, raised four months after founding (source: multiple outlets, March 10, 2026). Turing Award winner Yann LeCun, who left Meta, serves as executive chairman.

The thesis is specific: large language models have a structural ceiling because they learn from text — human descriptions of the world — rather than from the world itself. AMI Labs is building around JEPA (Joint Embedding Predictive Architecture), designed to learn predictive world representations from raw sensory input rather than language tokens.

CEO Alexandre LeBrun offered a quote that functions as both self-awareness and warning: "In six months, every company will call itself a world model to raise funding." Whether JEPA actually cracks the generalization problem is unknown. What is known is that top-tier capital is no longer treating transformer-based LLMs as the terminal architecture. Serious money is hedging on what comes after.

GPT-5.4, Computer Use, and the Agent Capability Ratchet

OpenAI shipped GPT-5.4 on March 5 with native computer-use capabilities baked into the general-purpose model, a one-million-token context window via API, and a tool search mechanism that cuts token costs by 47% in tool-heavy workflows (source: OpenAI / VentureBeat). Computer use is moving from experimental demo to baseline expectation. Weekly active ChatGPT users are at approximately 920 million, targeting 1 billion.

The Computer Use API is the capability to watch most closely. Desktop-controlling AI operates in a fundamentally different category than API-calling AI — the application ceiling is substantially higher, and the security implications are proportionally larger given what just happened to McKinsey Lilli.

Noise vs. Signal This Week

Signal: The ActivTrak data is the most important enterprise AI finding of Q1. 443 million hours of tracked work, 163,638 employees, every work category increased. This is not a survey or a projection — it is behavioral measurement at unprecedented scale. Every enterprise AI strategy that does not account for the verification overhead AI creates is building on incomplete assumptions.

Signal: McKinsey Lilli is not just a security incident. It is a structural demonstration that enterprise AI security posture has not kept pace with deployment speed. The standard tools missed what an autonomous AI agent found in two hours. The defenders are running one generation behind the attackers.

Signal: Anthropic's lawsuit will set precedent for whether AI companies can maintain independent safety standards when the most powerful buyer in the world disagrees. This is not about Anthropic specifically. It is about the governance architecture for AI's relationship with institutional power.

Signal: The Anthropic Institute — launched March 11, headed by co-founder Jack Clark — combines three existing teams (Frontier Red Team, Societal Impacts, Economic Research) into a dedicated body to study what AI is actually doing to employment, society, and governance. Hiring Matt Botvinick from Google DeepMind and Zoë Hitzig from OpenAI. Anthropic is running a dual track: monetizing Claude Code aggressively while building institutional credibility on safety research. These are not in tension — regulatory trust is a long-duration asset, and building it proactively is cheaper than defending against it reactively.

Noise: xAI's admission of architectural failure is attention-grabbing but not surprising. The underlying lesson — that capital cannot shortcut the work of building systems that hold together — has been demonstrated repeatedly. What makes it news is the scale of the valuation that preceded the admission.

Business and Startups: Where Capital Is Flowing

The Layoff-as-AI-Investment Playbook Has Become a Template

Atlassian, Block, and now reportedly Meta have all used virtually identical language: cut headcount to self-fund AI investment. All three are profitable. None are in crisis. The playbook is now a reusable narrative template — boards approve it, CFOs defend it, Wall Street rewards it.

The underlying assumption doing the heavy lifting: AI makes each remaining employee more productive, so fewer people can produce equivalent or greater output. If that assumption holds — and ActivTrak's data says the current generation of AI tools actually increases workload rather than decreasing it — then the companies cutting first are betting on phase-two autonomous agents arriving before the organizational damage from phase-one overload becomes irreversible.

Stanford's data provides the employment-side evidence (source: Stanford Digital Economy Lab / TIME, March 2026). Analysis of ADP payroll records found that software developers aged 22-25 hold 20% fewer jobs than at the 2022 peak. Call center customer service positions are down approximately 15%. Erik Brynjolfsson's research adds the critical distinction: workers using AI to automate existing tasks are seeing job erosion; workers using AI to acquire new capabilities are seeing employment growth. The variable is not whether you use AI. It is whether AI is replacing your judgment or extending it.

The YC demos this week — Simple AI eliminating inbound call center labor, Letter AI compressing sales training cycles — are commercial implementations of the structural shift this data describes. The market is not waiting for academic consensus on AI employment effects. It is already acting on the thesis.

Oracle Q3: $553 Billion in Signed AI Contracts

Oracle reported Q3 total revenue of $17.19 billion, up 22% YoY. Cloud infrastructure revenue hit $4.888 billion, up 84%. The number that matters most: Remaining Performance Obligations reached $553 billion, up 325% YoY (source: Oracle Q3 earnings). RPO represents contracted future revenue — money already committed by enterprise customers. This is not a projection. It is signed demand.

Within that total, multi-cloud database revenue grew 531% and AI infrastructure revenue grew 243%. These two segments are running far ahead of the overall cloud growth rate, which means AI-specific workloads are disproportionately driving Oracle's position.

The structural caveat: Oracle is sitting on over $100 billion in debt financing a data center buildout, with free cash flow now negative. OpenAI has paused expansion of its Stargate partnership at Oracle's Abilene facility because Oracle is installing older-generation GPUs. The chip iteration cycle is faster than the construction cycle. By the time facilities are operational, the hardware inside them can already be a generation behind what frontier workloads demand.

Lovable, Replit, and the AI Coding Tool Revenue Surge

Lovable crossed $400 million ARR in February with 146 employees — roughly $2.74 million in ARR per employee. The growth trajectory: $100M eight months after launch, $200M in November, $300M in January, $400M in February. Founders Anton Osika and Fabian Hedin have pledged 50% of their personal wealth to AI safety causes (source: Lovable, December 2025).

Replit announced a $400 million Series D on the same day, pushing valuation from $3 billion to $9 billion in six months. Revenue: $240 million for 2025, targeting $1 billion ARR by year-end, with over 150,000 paying enterprise customers.

Both companies operate on the same thesis — software creation without requiring engineering skills — but neither has disclosed gross margins. Inference costs scale with usage. High ARR growth against an unknown cost base is the honest profile of both businesses right now. The durability question does not disappear because the growth rate is impressive.

Kimi / Moonshot AI: What Explosive Growth Looks Like at the Application Layer in China

Moonshot AI is seeking $1 billion in new funding at an $18 billion valuation — more than quadrupling from $4.3 billion in late 2025 (source: Bloomberg, March 14, 2026). The underlying data: January 2026 individual user payment orders grew 8,280% month-over-month. Revenue in the first 20 days of 2026 surpassed all of 2025. Alibaba and Tencent both increased their stakes.

This is genuine product-market fit at the application layer — not a gradual adoption curve, an inflection point. Growth at this velocity also accumulates security debt at proportional speed. The mobile internet era taught us that security incidents lag user growth by 12 to 18 months. AI application growth is running several multiples faster than mobile ever did. The window for security infrastructure to catch up is correspondingly shorter.

Google Closes $32 Billion Wiz Acquisition — Its Largest Deal Ever

Google officially completed its acquisition of Wiz on March 11 for $32 billion in cash. The deal took nearly a year to clear regulators: US FTC approval in November 2025, EU clearance in February 2026 (source: multiple outlets).

The backstory matters: Wiz CEO Assaf Rappaport rejected Google's initial $23 billion offer in 2024, publicly stating the company would be worth more. He was right. The final price reflects how much the enterprise cloud security market moved in twelve months.

The strategic thesis is multi-cloud security. Enterprise customers run workloads simultaneously across Google Cloud, AWS, Azure, and Oracle Cloud — they need a security layer spanning all of them. Google committed publicly that Wiz will maintain its independent brand and continue supporting all major clouds. That commitment to openness is a strategic concession, but it also means the acquisition is not primarily about locking customers into Google Cloud. It is about owning the security entry point for the entire multi-cloud enterprise market.

SpaceX IPO and the Valuation-Reality Gap

SpaceX is targeting a $1.75 trillion valuation for an IPO as early as June 2026, with a $50 billion primary raise (source: Bloomberg, March 11). Bloomberg flagged a growing "murky deals" problem — wealthy investors accessing pre-IPO shares through special purpose vehicles, with questions about fair distribution. The financials present a tension: $15 billion in 2025 revenue alongside a reported $2.4 billion GAAP loss in the first nine months. The gap between narrative valuation and accounting reality will define the story as the listing approaches.

Business Signals This Week

The Perplexity-Amazon ruling from earlier in March continues to reverberate. Judge Chesney's distinction — user consent and platform authorization are legally separate — applies to every AI agent product that operates inside third-party platforms. The Agentic Commerce thesis is not dead, but its viable paths just narrowed to official platform APIs or operating entirely outside logged-in environments.

Adobe settled with the DOJ for $150 million over subscription dark patterns — $75 million in penalties, $75 million in consumer credits (source: DOJ press release). The case ran from June 2024 through March 2026. Annual billing with complex cancellation flows is no longer a gray area for subscription businesses.

Digg collapsed within two months of reopening. CEO Justin Mezzell was unusually candid: within hours of the public beta, AI-powered spam bots overwhelmed every defense. "When you cannot trust that the votes, comments, and interactions you see are real, you've lost the foundation of a community platform." Historical domain authority is no longer a moat — it is a targeting signal. The infrastructure cost of AI-powered spam has dropped to near zero. Any dormant platform with accumulated link equity will face coordinated bot pressure within hours of reactivation.

February 2026 saw a record $189 billion in global venture capital, with AI startups capturing $171 billion — 90% of the total (source: TechCrunch / Crunchbase). The concentration was extreme: OpenAI's $110 billion raise, Anthropic's $30 billion Series G at $380 billion valuation, and Waymo's $16 billion raise accounted for the vast majority. The capital is real. The question of whether it is being deployed into architectures that will hold together — as xAI just demonstrated they may not — remains open.

SEO and the Search Ecosystem: The Rules Changed This Week

Google March 2026 Core Update: E-E-A-T Requirements Now Apply to All Competitive Queries

The most structural change in this update is being underreported: E-E-A-T requirements have expanded beyond YMYL categories to all competitive queries (source: SEO Vendor, March 2026). The December 2025 policy expansion is now fully enforced. Tech blogs, educational platforms, e-commerce category pages, software review sites — all scored against the same framework that previously only applied to health, finance, and legal content.

Impact data: 55% of tracked sites experienced clear ranking shifts within two weeks. Among sites that moved up, 73% of top-ranking content featured demonstrable real-world experience or specific use cases. Sites that dropped: AI-generated summaries, aggregated content, and parasitic SEO — thin content built by recombining others' work with minimal original contribution. The sharpest ranking changes occurred around day eight of the 19-day rollout window.

Google's first-ever Discover Core Update concluded in late February. Discover has historically operated without dedicated core updates. This one specifically rewarded topically focused, deeply original content and penalized broad-format, sensationalist posts. For publishers running aggregation-style content strategies across Discover, the impact is more immediate than search-side changes.

The Ranking-Citation Decoupling Is Now Measurable

Pages ranking in Google's top 10 are now cited by AI Overviews only 38% of the time, down from 76% — a direct halving (source: ALM Corp, March 2026). The new citation leaders: Wikipedia at approximately 45% and Reddit at approximately 30% of all AI Overview references.

Google's AI Mode now references its own properties — YouTube, Maps, Knowledge Graph — in 17.42% of answers, up from 5.7% in June 2025 (source: Search Engine Land). The search engine is building a closed information loop. Third-party content optimized for Google's ranking algorithm now competes against Google's own assets in a game where Google controls the rules.

The Define Media Group portfolio analysis across 64 publisher websites found total search clicks fell 42% from the pre-AI Overviews baseline. Breaking news content on those same sites grew 103%. Google Discover traffic climbed 30%. For the first time in the dataset, Discover and Web Search are driving roughly equal traffic — a structural shift that would have seemed implausible two years ago.

The mechanism is deliberate. AI Overviews appear on only 15% of news queries versus 45% or more for informational queries. Google is making a conscious call: time-sensitive content gets links; evergreen content gets summarized.

Content Optimization for AI Agents: A New Practice Is Emerging

Sentry's documentation (source: cra.mr) provides the clearest implementation model. Across three properties, they serve differentiated content based on whether the requester is a human browser or an AI agent.

Documentation site: stripped-down Markdown instead of HTML for agent traffic — no navigation bars, no JavaScript rendering requirements. Main site: headless bot requests redirected away from authentication walls toward machine-readable interfaces — MCP servers, CLIs, direct APIs. Warden property: complete bootstrap information embedded directly in the response body so agents can self-configure without multiple round-trips.

The technical mechanism: Accept: text/markdown request headers identify agent traffic and trigger differentiated content delivery. This is content negotiation at a new layer — one response path for humans, a separate one for machines. Early-stage practice, but the directional logic is sound: AI agents cannot execute JavaScript, get blocked by authentication walls, and parse HTML inefficiently compared to structured text.

AEO and GEO: The New Traffic Currency

The citation economics are concrete: brands that appear in AI Overviews earn 35% more organic clicks and 91% more paid clicks compared to brands absent from AI summaries (source: Conductor, 2026). ChatGPT drives 87.4% of all AI referral traffic across industries. AI referral traffic comprises 1.08% of total website visits, growing approximately 1% per month.

GEO content freshness follows a measurable decay curve. New content receives its first AI citation within 3-5 business days. Content not updated in more than 14 days drops in citation priority. Content older than 90 days without significant updates sees citation volume fall sharply (source: Search Engine Land / Go Fish Digital). For any page earning meaningful AI referral traffic, a bi-weekly refresh cycle is the maintenance floor.

Over The Top SEO launched the industry's first full-scale GEO department — 450+ AI-optimized pages already deployed and indexed. Their CEO's framing: "The companies that will dominate the next decade aren't just ranking on Google. They're training AI to recommend them." The llms.txt protocol, which allows site owners to instruct AI crawlers explicitly, has adoption below 1% globally. That is an open lane.

ChatGPT's free and premium tiers now search the web differently. Free-tier GPT-5.3 Instant primarily summarizes from the first search result. Premium tier cross-references multiple sources with explicit hallucination reduction. Same query, fundamentally different information epistemology based on tier. Content that ranks first and reads cleanly captures free-tier citations. Content with data depth and cross-referenced claims captures premium-tier citations from higher-intent users.

The Publisher Toll Is No Longer Incremental — It Is Structural

Digital Trends saw monthly traffic collapse from 8.005 million clicks to 264,000 — a 97% decline (source: position.digital, 2026). Media executives globally project a 43% drop in search referral traffic within three years (source: Reuters Institute, January 2026). The zero-click rate directly attributable to AI Overviews sits at 72% (source: Search Engine Land / Seer Interactive).

The B2B picture is equally stark. KEO Marketing research covering 2024-2025 found 73% of B2B websites experienced significant organic traffic losses, averaging 34% YoY decline. Across 42 tracked B2B sites, the pattern shows impressions growing 31% YoY while organic clicks dropped 18% and CTR fell 22%. The industry has started calling this the "crocodile mouth effect" — one curve going up, one going down, jaws widening.

The important counterpoint from the same dataset: AI-driven sessions converted at 14.2% versus 2.8% for traditional organic traffic. The volume story is bad. The intent and conversion story is getting stronger. What is dying is low-intent, top-of-funnel informational traffic. What is becoming more valuable is the smaller set of users who still click through — they are further down the funnel.

The operational model that survives is: original expertise, updated regularly, structured for AI extraction from the first paragraph. "Publish and forget" is no longer viable as a content strategy. The sites that will hold visibility through 2026 are the ones building authority that AI engines can verify — not keyword density that ranking algorithms reward.

The Agentic IDE Race and What It Means for Builder Workflows

Andrej Karpathy posted a single tweet asking where the "agentic IDE" is. Within hours, JetBrains announced Air — rebuilt from the bones of Fleet, its previously abandoned developer tool — as an agentic-first environment that can delegate tasks to multiple AI agents running concurrently. Current preview is macOS-only. Multi-model support includes OpenAI Codex, Anthropic Claude Agent, Google Gemini CLI, and JetBrains' own Junie (source: JetBrains, March 2026).

The deeper architecture play is the Agent Client Protocol (ACP) — a vendor-neutral communication standard co-sponsored by Zed and JetBrains. ACP decouples agents from specific editors: any compliant agent works in any compliant editor. If ACP achieves adoption, the IDE market's competitive dynamics change entirely. Differentiation shifts from "which models you support" to UX, reliability, and workflow design.

The IDE market is fragmenting faster than most predicted. Cursor showed that a focused, AI-first editor could take meaningful share from incumbents. JetBrains is responding not just with a new tool but with a protocol designed to make agent-editor relationships modular. For builders choosing tools: the underlying protocol bet may matter more than the editor choice.

Geopolitics, Energy, and the Macro Backdrop

Brent crude closed the week at $103.14 per barrel, up 11% for the week. The Strait of Hormuz has been effectively closed for over two weeks. The Fujairah port in the UAE was struck by drone attacks, extending energy conflict to commercial infrastructure along the Arabian coast (source: Bloomberg). Saudi Arabia has cut daily production by approximately 2 million barrels to 8 million barrels. IEA confirmed Gulf producer cuts have exceeded 10 million barrels in total.

The Mag7 tech index confirmed a correction — down over 10% from highs. S&P 500 fell 1.6% for the week to 6,632.19. Microsoft (MSFT) is down over 18% year-to-date. Tesla (TSLA) dropped 7.58% for the week and 28.76% year-to-date. Meta (META) fell 3.83% on Friday alone.

The $1.8 trillion private credit market is showing stress. Deutsche Bank disclosed approximately $30 billion in exposure, its stock falling 6.1%. Rate cut expectations for 2026 have collapsed to near zero.

Morgan Stanley's research note adds a physical constraint: their Intelligence Factory model projects a US power shortfall of 9-18 gigawatts through 2028 — a 12-25% deficit (source: Morgan Stanley / Fortune). The bottleneck in AI infrastructure has shifted from compute to electricity. Developers are already converting Bitcoin mining operations into AI compute centers and deploying fuel cells. Companies with sound architecture still face a hard physical ceiling that fundraising cannot immediately solve.

On the regulatory side, EU lawmakers struck a political deal on March 11 to amend the AI Act with an explicit prohibition on non-consensual intimate AI-generated images — direct fallout from the Grok deepfake scandal in December 2025 (source: The Next Web / European Council). The Council agreed position on March 13. Discussions and negotiations will likely take about a year before implementation. This is the first concrete regulatory response to AI-generated content that targets specific harm categories rather than broad capability restrictions.

The energy dynamic feeds directly into the layoff calculus. Cost pressure on enterprises does not slow AI adoption — it accelerates the shift from phase one (AI tools alongside humans) to phase two (AI agents replacing workflow segments). Every dollar saved on headcount matters more when capital costs are rising and energy prices are spiking. The macro environment is an accelerator, not a brake.

Next week's focal point: Nvidia GTC 2026, March 16-19. Jensen Huang's keynote on Monday will feature the Rubin GPU architecture and a chip reveal he has teased will "shock the world." Reported speculation points to a fusion of Groq's dataflow architecture for faster token generation. The Feynman architecture (2028 roadmap, TSMC 1.6nm process) may also be previewed. Whatever ships at Rubin pricing — per-token cost one-tenth of Blackwell — reopens use cases that were not viable before, not incrementally but categorically.

This Week's Synthesis: What All of It Means Together

Start with the three numbers that define the week.

443 million hours of tracked work across 163,638 employees — and AI tools increased workload in every single category measured (source: ActivTrak). 46.5 million consulting conversations exposed in plaintext because a system serving 43,000 people at the world's most prestigious consulting firm shipped with unauthenticated API endpoints and SQL injection vulnerabilities (source: CodeWall / The Register). 10 of 12 co-founders gone from a company that merged at $1.25 trillion six weeks ago (source: TechCrunch / CNBC).

These three data points describe the same structural reality from different angles.

Enterprises are running a massive substitution trade: fewer humans, more AI systems, bigger blast radius per system. The ActivTrak data proves that the first attempt — inserting AI into existing workflows — has failed at the productivity level. It made people busier, not more productive. The corporate response is not to fix the implementation but to skip ahead to autonomous agents that replace entire workflow segments. Atlassian, Meta, Block, Amazon — they are all making the same bet: if augmenting humans with AI tools creates more work, remove the humans and let agents run the process end to end.

That bet requires a prerequisite: the AI systems absorbing these responsibilities must be reliable enough to trust. McKinsey Lilli shows they are not. The entry point was a vulnerability that university courses teach as a canonical example of what not to build. The standard security scanner deployed at McKinsey — a firm that advises other companies on technology strategy — did not catch it. An AI security agent found it in under two hours. The defenders' tooling is running a generation behind the attackers' tooling.

The writable system prompts are the detail that makes this a systemic concern rather than an anecdote. An attacker who controls what Lilli outputs controls the information substrate for 43,000 consultants making strategic recommendations to the world's largest companies. Consultants trust internal tools implicitly. Clients trust consultants because they are McKinsey. Boards trust McKinsey because it is the industry standard. Poisoning the AI at the top of that trust chain propagates downstream through multiple layers, and the contamination is nearly impossible to detect because internal tools do not receive the same scrutiny as external sources.

The xAI admission adds a different dimension. A company valued at $1.25 trillion — combined with SpaceX — publicly acknowledged its architecture was wrong, with nearly its entire founding technical team gone. The flagship agent product was scrapped. The lesson is not that Musk failed at AI. The lesson is that capital momentum and technical readiness can diverge dramatically, and the reckoning comes after the valuation, not before.

Now set that against what Lutke, Levels, and Cohen demonstrated in the same week. One person with AI tools and a production codebase produced a 53% performance improvement. One person with AI vibe coding launched a million-dollar ARR product in 17 days. One person's weekend project pulled a Docker enterprise partnership in six weeks.

AI is amplifying individual leverage and amplifying institutional fragility simultaneously. Individuals have no coordination costs, no historical technical debt, no organizational politics. AI's leverage ratio acts directly on output. Institutions have all of those frictions, and adding AI into the mix does not eliminate them — it adds new security, audit, and permission management requirements that make the friction heavier.

This asymmetry will continue to widen. As AI tools get cheaper and more capable — Nvidia's Rubin GPU drops per-token inference cost to one-tenth of Blackwell — the individual leverage curve accelerates. If institutions do not solve the security and architecture problems exposed this week, every additional layer of AI deployment adds a layer of fragility.

Anthropic's lawsuit against the Pentagon is the governance dimension of the same structural tension. The technology is powerful enough that the most consequential buyer in the world is willing to use procurement blacklisting to coerce an AI company into abandoning its safety commitments. The legal outcome will determine whether AI companies can maintain independent safety standards or whether institutional power ultimately dictates how AI systems are deployed. That question will define the next decade of AI governance — and it is being decided right now, in a federal courtroom, while McKinsey is patching unauthenticated API endpoints.

The search ecosystem reflects the same pattern at a different altitude. The correlation between Google ranking and AI citation collapsed from 70% to below 20%. Two optimization games — search ranking and AI engine citation — are now running in parallel with different rules. Google's own AI Mode is building a closed information loop, citing its own properties at 3x the rate of nine months ago. Publishers who spent a decade learning to satisfy Google's algorithm face an additional layer with different rules, controlled by different companies and by Google's own competing interests. The search traffic distribution system that the content economy was built on is being rewritten in real time.

Capability is compounding at 10x cost reduction per GPU generation. Security infrastructure is patrolling with last generation's tools. The labor market is restructuring on a quarterly cycle. The speed differential between these three lines is the single largest source of systemic risk over the next twelve months.

My read: within the next 12 months, there will be an enterprise AI security incident larger than McKinsey Lilli — because deployment speed is accelerating while security investment is not keeping pace. When that happens, the "replace humans with AI" narrative will face its first genuine trust crisis. The organizations that invested early in security infrastructure — not as an afterthought, but as a first-class engineering priority — will capture the trust premium at that inflection point. The ones that moved fast and patched later will discover, at expensive scale, that the foundation matters more than what sits on top of it.

Lutke writing one PR that outperformed entire engineering teams. Levels shipping a million-dollar product solo. McKinsey's $50-billion-revenue consulting operation getting breached through a textbook SQL injection. xAI admitting its architecture is broken six weeks after a trillion-dollar merger. The common thread: AI makes the competent more dangerous and the complacent more exposed. The gap is widening, and the middle is disappearing.

The organizations building security alongside capability, verifying before deploying, measuring against real data rather than narrative momentum — they are accumulating structural advantage. The ones moving fast and patching later will discover, at expensive scale, that the foundation matters more than what sits on top of it.

Comments on "Companies Are Firing People Faster Than AI Systems Can Be Trusted — The Most Dangerous Gap in 2026": 0