Quick Overview

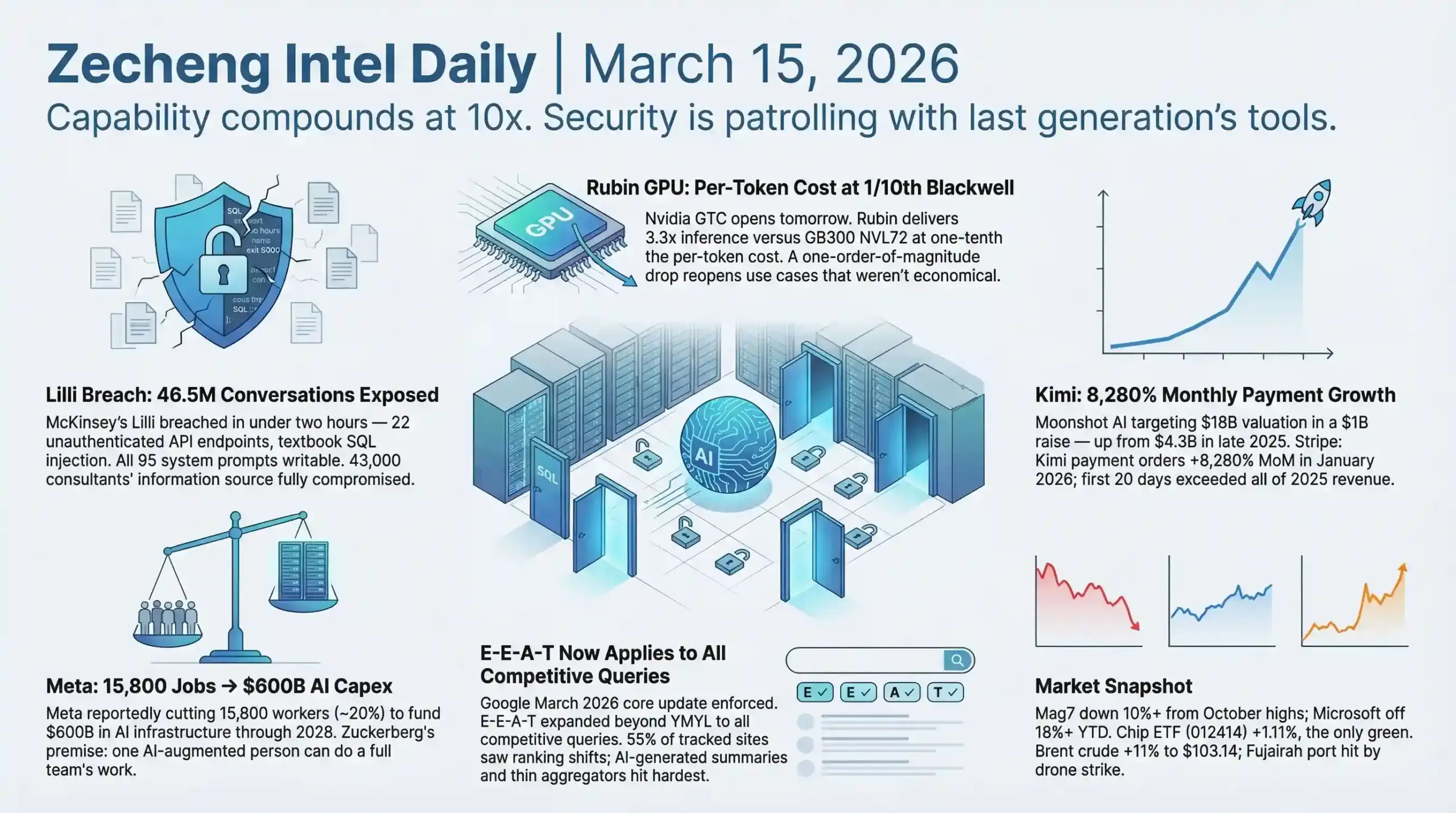

- AI & Builders: McKinsey's internal AI platform Lilli was fully breached in under two hours by an autonomous security agent — 46.5 million consulting conversations exposed in plaintext, 95 writable system prompts giving attackers direct control over what 43,000 consultants see; Nvidia GTC opens tomorrow with Rubin GPU bringing inference costs to 1/10th of Blackwell (source: codewall.ai / nvidia.com)

- SEO & Search: Google March 2026 core update is now fully enforced — E-E-A-T requirements expanded from YMYL to all competitive queries, 55% of tracked sites saw clear ranking shifts, AI-generated summaries and aggregation-only content hit hardest (source: SEO Vendor, March 2026)

- Startups & Reddit: Moonshot AI (Kimi) is targeting an $18B valuation in a $1B raise, a roughly 4x jump in under three months, driven by 8,280% month-over-month growth in individual user payment orders in January; Meta is reportedly considering layoffs of roughly 15,800 employees (about 20% of its workforce) to offset $600B in AI infrastructure spending through 2028 (source: Bloomberg / TechCrunch, March 14)

AI & Technology

Headline: McKinsey's Lilli Breach — 46.5 Million Consulting Conversations Exposed via Autonomous Security Agent

McKinsey's internal AI platform Lilli was fully compromised in under two hours on February 28, 2026, by an autonomous security agent built by CodeWall (source: codewall.ai / theregister.com). The exposed data includes 46.5 million consulting conversations stored in plaintext — covering strategy, M&A, and client content — alongside 728,000 confidential documents, 57,000 user accounts, 3.68 million RAG document chunks, and 95 system prompts with write access.

The entry point was almost embarrassingly simple. Of 200-plus API endpoints, 22 required zero authentication. One of them concatenated field names directly into SQL statements: a textbook injection vulnerability, a class of flaw that has been a fixture of the OWASP Top 10 for years. McKinsey's standard scanning tool, OWASP ZAP, failed to catch it.
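A minimal sketch of the usual defense against that class of bug, assuming a hypothetical `documents` table and filter fields (none of these names come from Lilli or the CodeWall report): caller-supplied field names are checked against an allowlist, and values always travel as bound parameters.

```python
import sqlite3

# Hypothetical allowlist: the only column names a caller may filter on.
ALLOWED_FIELDS = {"title", "author", "practice_area"}


def build_filter_query(field: str, value: str) -> tuple[str, tuple[str, ...]]:
    """Return (sql, params) for a single-column equality filter."""
    if field not in ALLOWED_FIELDS:
        raise ValueError(f"unsupported filter field: {field!r}")
    # The field name is interpolated only because it matched the allowlist;
    # the value is never interpolated, it is passed as a bound parameter.
    sql = f'SELECT id, title FROM documents WHERE "{field}" = ?'
    return sql, (value,)


def search(conn: sqlite3.Connection, field: str, value: str) -> list[tuple]:
    """Run the filter query and return all matching (id, title) rows."""
    sql, params = build_filter_query(field, value)
    return conn.execute(sql, params).fetchall()
```

With this shape, a malicious field name is rejected before any SQL is built, and a malicious value is compared as a literal string rather than parsed as SQL.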

That's already bad. But the writable system prompts are where this gets genuinely alarming.

An attacker who can overwrite the 95 system prompts controlling Lilli's outputs can poison what 43,000 McKinsey consultants see and believe. This isn't data theft — it's a trust hijack. Consultants trust Lilli precisely because it's an internal tool. Internal tools don't get the same skepticism as external sources. An adversary could shape the strategic recommendations a consultant delivers to a Fortune 500 client's board without ever touching a single email or document. The downstream blast radius of a poisoned internal AI system is almost impossible to trace.

The timeline makes it worse: breach on February 28, CISO acknowledged notification on March 2, public disclosure on March 9. That is nine days between breach and disclosure, seven of them after the CISO acknowledged the report, while 43,000 consultants continued querying a potentially compromised system.

McKinsey's post-breach response (closing unauthenticated endpoints, taking down dev environments, restricting public API docs) is exactly what happens after a disaster to prevent the next one. None of it addresses the core design failure: a system serving 43,000 employees at a firm handling the world's most sensitive corporate secrets launched with SQL injection vulnerabilities and no authentication on roughly a tenth of its API surface (22 of 200-plus endpoints).

Anyone shipping enterprise AI tools right now should pull three things from this: authentication coverage on every API endpoint, whether the RAG system's write layer is as protected as its read layer, and whether standard security scanners are actually catching what they claim to. AI-powered security agents found what OWASP ZAP missed — the attacker's tooling is running faster than the defender's.
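The first of those checks, authentication coverage, can be sketched as a small audit that a CI job might run. The `Endpoint` record and the idea of an explicit public allowlist are assumptions for illustration; a real service would build the list from its own router or OpenAPI description.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Endpoint:
    """Hypothetical description of one API route."""
    method: str
    path: str
    requires_auth: bool


def unauthenticated(endpoints, public_allowlist=frozenset()):
    """Return endpoints that skip auth and are not explicitly approved as public."""
    return [
        e for e in endpoints
        if not e.requires_auth and e.path not in public_allowlist
    ]


def assert_auth_coverage(endpoints, public_allowlist=frozenset()):
    """Raise if any endpoint is reachable without auth and without sign-off."""
    missing = unauthenticated(endpoints, public_allowlist)
    if missing:
        listing = ", ".join(f"{e.method} {e.path}" for e in missing)
        raise AssertionError(f"endpoints without authentication: {listing}")
```

The design choice worth noting is the allowlist: public endpoints are allowed, but only when someone has named them deliberately.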

The McKinsey case and Charles Chen's analysis of MCP enterprise infrastructure (source: chrlschn.dev) point at the same underlying issue from different angles: as AI systems accumulate institutional trust, the security surface area grows in ways that traditional tooling wasn't designed to handle. Centralized HTTP MCP isn't just a convenience feature — its value comes from keeping sensitive API keys server-side, enabling organization-level telemetry on tool usage, and supporting stateless runtimes that CI/CD pipelines actually need. The CLI-can-replace-MCP take that's been circulating lately is missing what the enterprise deployment context actually requires.

Builder Insights

Samuel Colvin Built a New Python Interpreter for Agents Over Christmas

Pydantic founder Samuel Colvin spent his Christmas holiday writing 30,000 lines of Rust code with AI assistance to build Monty — a fast Python interpreter designed specifically for agent workloads (source: Latent Space, youtube.com/watch?v=nxnQl4AcqFg).

The motivation came from four separate conversations with Anthropic employees who, independently, kept coming back to the same point: type safety in chained tool calls is critical for reliable agents. Four people, no coordination, same conclusion — that's the kind of signal that doesn't happen by accident.

Monty sits in the gap between two existing extremes. Simple tool calls are safe but expressively limited. Full sandboxes are powerful but operationally heavy. A venture investor Colvin spoke with estimated that roughly 70 percent of sandbox calls are functionally tool calls or close variants — meaning most people are using a sledgehammer where a precision tool would do the job better and faster.

The Logfire angle is equally interesting. Pydantic's observability platform runs full OpenTelemetry coverage for AI systems, including evals, prompt playgrounds, and LLM traces. Because it supports arbitrary SQL queries, the MCP server becomes unusually powerful — an AI can write SQL to query data directly without anyone building a custom AI interface on top. That's a clean example of how infrastructure standardization creates capability that wasn't explicitly designed for.

The broader pattern: the builders who are building for agents — not just with agents — are solving problems that most people don't encounter until they've already deployed something at scale. Colvin is three levels deeper than most.

Sabrina Ramonov's Framework for the Top 0.1% Workflow

The "save 30 hours a week" framing is overpromised, but the specific techniques in this video are dense and worth pulling apart (source: youtube.com/watch?v=0gTrtPPmMwc).

Skill 7 — planning mode — is the counterintuitive one. Spend 95 percent of the session in planning mode, get full clarity on the task structure, constraints, and outputs, then switch to execution. Most people do the opposite: prompt immediately, iterate endlessly when the output isn't right, and burn time on fixes that better upfront scoping would have prevented.

Skill 8 on MCP is one of the cleaner descriptions I've seen: without MCP, AI tells you what to do. With MCP, AI does it. The distinction matters for understanding where the actual value of agent tooling lives.

Skill 9 is the compounding effect — stacking a skill template on top of MCP to run a full pipeline: transcribe video, generate platform-specific copy for six channels, schedule the posts, log everything to Airtable, zero manual steps. This isn't AI replacing one task. It's AI replacing a workflow that previously required four different tools and a human coordinating between them.

The reusable skills approach — packaging recurring tasks as templates the AI executes consistently — solves a real problem that most tutorials skip: how do you maintain output quality across sessions without rewriting context every time?

Simple AI Is Selling Steak Over the Phone, No Humans Required

YC-backed Simple AI builds AI voice sales systems that handle complete inbound phone sales — product introduction, Q&A, order capture — with zero human involvement (source: Y Combinator). Omaha Steaks, the 100-year-old American beef brand, now routes calls from their main website number directly to a Simple AI agent. Other verticals include self-storage and home insurance.

The commercial logic here is cleaner than most AI sales pitches. Inbound phone calls are high-intent by definition — someone picked up the phone and dialed. The friction AI removes (response delay, cost per agent-hour, coverage gaps) is real and measurable. Unlike AI applied to cold outreach where intent is uncertain, inbound voice has a built-in selection effect.

The founders' background is relevant: they came from YC's internal software team and watched OpenAI's early research previews from inside the building. They're not pitching AI as a future capability — they're building on infrastructure they've already seen work.

Letter AI Closes $40M Series B for AI-Native Sales Enablement

Letter AI helps sales teams ramp faster through AI personalized training, AI content delivery, and AI role-play simulation (source: Y Combinator). Clients include Lenovo, Adobe, Novo Nordisk, Plaid, and Kong.

The insight the founders lead with is the right one: sales teams don't fail to find content because it doesn't exist. They fail because existing tools are too expensive, too slow to navigate, or too generic to be useful in the moment. AI's job here isn't content creation — it's content accessibility. Building highly personalized materials from existing knowledge bases in real time, for the specific deal a rep is working on.

The $40M Series B at this stage reflects the market's read that sales enablement is a durable enterprise category, not a feature someone bigger will absorb. The AI layer isn't the product; the workflow integration and institutional knowledge capture are.

Other AI Updates

GPT-5.4 Is Now Live Across OpenAI's Lineup (source: openai.com / venturebeat.com)

GPT-5.4 launched March 5 in three configurations: Instant for speed, Thinking for Plus/Team/Pro users, and Pro for Enterprise. Context window is 272K in ChatGPT and 1 million tokens via API — OpenAI's largest window yet. The Computer Use API enables native desktop control. GPT-5.1 was retired March 11. Weekly active ChatGPT users are at approximately 920 million, with a stated target of 1 billion.

The Computer Use API is the capability to watch closely. Desktop-controlling AI and API-calling AI operate in different categories. The former's application ceiling is substantially higher — and the security implications are proportionally larger given what just happened to McKinsey Lilli.

Anthropic Commits $100M to Claude Partner Network (source: anthropic.com, March 12)

Partners include Accenture, Deloitte, Cognizant, and Infosys. The $100M is the 2026 commitment with increases expected in subsequent years. Membership is free, with training, technical support, and co-GTM included. Anthropic will embed Applied AI engineers and technical architects with partners. Claude Code is currently the fastest-growing part of Anthropic's commercial portfolio. Claude Certified Architect certification is available immediately.

Anthropic is running a dual track: monetizing Claude Code aggressively while simultaneously building the Anthropic Institute for AI social impact research. These aren't in tension. Regulatory trust is a long-duration asset for frontier AI companies, and building it proactively is cheaper than defending against regulatory scrutiny reactively.

Nvidia GTC 2026 Opens Tomorrow — Rubin GPU and "World-Shocking" Chip (source: nvidia.com)

Jensen Huang keynotes on March 16 at 11am PT. Rubin GPU specs: 288GB HBM4 memory, inference performance 3.3x GB300 NVL72, bandwidth exceeding 3 TB/s, per-token cost one-tenth of Blackwell. Huang has teased a chip reveal that will "shock the world"; reported speculation points to a design that incorporates Groq's dataflow architecture to speed up token generation. The Feynman architecture (2028, TSMC 1.6nm) may also be previewed.

Per-token cost dropping to one-tenth of Blackwell is the number that changes application economics. A cost reduction at that magnitude reopens use cases that weren't viable before — not incrementally but categorically. What's uneconomical at Blackwell pricing might become standard infrastructure at Rubin pricing.

Stanford SIEPR Data: AI's Measurable Impact on Entry-Level Tech Jobs (source: stanford.edu / time.com)

By July 2025, the youngest software developers (age 22-25) held 20 percent fewer jobs than at the peak in fall 2022. In high AI-exposure roles specifically, employment for this cohort declined 6 percent between 2022 and July 2025. Call center customer service jobs are down approximately 15 percent. Erik Brynjolfsson's research shows a clear split: people using AI to automate existing tasks are seeing job erosion; people using AI to acquire new capabilities are seeing employment growth.

The two YC demos today — Simple AI eliminating inbound call center labor, Letter AI compressing sales training cycles — are commercial implementations of the same structural shift this data describes. The market isn't waiting for the academic consensus on AI employment effects. It's already acting on it.

Analysis

The thread connecting today's news is deceptively simple: AI capability is compounding faster than AI governance, and the gap is starting to show up in measurable ways.

McKinsey Lilli is the most concrete example. A system deployed at 43,000-person scale with basic SQL injection vulnerabilities and unauthenticated endpoints is not a story about one company's carelessness — it's a story about how fast organizations are adopting internal AI tools relative to how fast security practices are adapting. The standard tooling missed what an autonomous AI security agent found in under two hours. That asymmetry is structural.

The MCP enterprise infrastructure debate is the quieter version of the same problem. Once past individual developer use cases and into org-scale agent deployments, centralized key management, observability, and audit logs aren't optional extras. They're the difference between a tool the security team can sanction and one they can't.

Rubin GPU pricing at one-tenth of Blackwell's per-token cost is the demand-side unlock that makes the application layer's ambitions realistic. Simple AI selling steak over the phone and Letter AI running AI role-play for sales teams are economically viable because inference is cheap enough. The trajectory from Blackwell to Rubin suggests the cost curve isn't flattening.

Samuel Colvin's work on Monty reflects something the Stanford data confirms from the other direction: the practitioners building agent infrastructure are thinking several levels ahead of the users deploying individual AI tools. Monty exists because someone building production agent systems needed type safety in chained tool calls — a problem most people won't encounter until they're already in production. The builders who identified this need early are building for a world where agents are the default compute substrate, not the experimental feature.

Sabrina Ramonov's framework — 95 percent planning, 5 percent execution — runs counter to how most people actually use AI, and probably explains most of the variation in results between people who find AI transformative and people who find it disappointing. The bottleneck isn't capability. It's upstream definition of what the capability is actually supposed to do.

The Stanford employment split between automators and skill-acquirers is the most durable frame for reading this week's news. Lilli gets breached, MCP gets debated, Rubin ships at a tenth the cost, Simple AI replaces call center reps, Letter AI compresses sales ramp time. The infrastructure is moving faster than the institutions built around it, and the organizations that close that gap first are the ones with structural advantage. That's not a prediction; it's already the pattern.

Business & Startups

Meta's Reported 20 Percent Layoffs and Adobe's CEO Departure Reveal the Hidden Cost of AI Infrastructure

Meta is reportedly considering layoffs affecting approximately 20% of its workforce — roughly 15,800 of its 79,000 employees (source: TechCrunch / Reuters / Fox Business, March 13–14, 2026). The stated purpose: offset $600 billion in AI infrastructure investment planned through 2028. No final timeline has been set. A Meta spokesperson called it "speculative reporting about theoretical approaches." Three separate outlets filing on the same story within 24 hours is rarely coincidence.

Zuckerberg's underlying logic is explicit: "one person can do the work of an entire team." That statement has shifted from a vision slide to the working premise of an org restructure. The historical pattern helps clarify what's different this time. Meta laid off 11,000 workers (13%) in November 2022, then another 10,000 in 2023. Both rounds were followed by significant stock rallies. Those were efficiency cuts to remove expansion-era bloat. What's being discussed now is a deliberate trade: convert human labor budget into compute budget, betting that AI-driven productivity compounds faster than the headcount it replaces.

The same dynamic played out differently at Adobe (ADBE) on March 12. Q1 FY2026 earnings beat across every metric: revenue $6.4 billion (+12.1% YoY, beating $6.28B expectations), EPS $6.06 vs. $5.87 consensus, ARR from AI-first offerings tripled year-over-year, monthly active users hit 850 million (+17%). By any traditional measure, a clean quarter. Then CEO Shantanu Narayen announced he will step down once a successor is appointed, and the stock fell 7.80% in after-hours trading, from $269.78 to $248.74 (source: seekingalpha.com / 247wallst.com).

The market's response makes the current calculus explicit: past earnings are already priced. What's being discounted now is uncertainty about whether Adobe can execute its second major platform transition. Narayen led the company through the first — perpetual licenses to cloud subscriptions. The unresolved question is whether the next CEO can integrate AI into Creative Cloud as a core revenue driver rather than a bolted-on feature layer. Investors aren't satisfied with AI ARR tripling if they can't see the path to structural growth.

One more business story that deserves attention: SpaceX is targeting a $1.75 trillion valuation for an IPO as early as June 2026, with a $50 billion primary raise (source: Bloomberg, March 11, 2026). Bloomberg flagged a growing "murky deals" problem — wealthy investors accessing pre-IPO shares through special purpose vehicles, with questions about whether access is being distributed fairly. The financials present a tension: $15 billion in 2025 revenue alongside a reported $2.4 billion GAAP loss in the first nine months. The gap between narrative valuation and accounting reality will be the story to watch as the listing approaches.

Reddit Pain Point Analysis

The Marketing for Founders repository (GitHub / EdoStra, MIT license, actively maintained into 2026) surfaces the questions founders in r/SaaS, r/Entrepreneur, and r/SideProject are actually asking. The most common question across communities in 2025 and into 2026: how do you get ChatGPT, Claude, Perplexity, or Gemini to recommend your product?

That question signals a real gap. Traditional SEO playbooks — keyword optimization, backlinks, technical site health — don't translate directly into AI answer engine visibility. The founders figuring this out fastest are structuring content to be directly citable: concrete answers in the opening paragraph, sourced data points, paragraphs that can stand alone as responses to specific queries. The goal is no longer ranking for a keyword; it's being the source an AI assistant quotes when a user asks a relevant question.

A secondary pattern emerging on r/SideProject: cold outreach still converts, but at declining rates as AI-generated email volume rises. Founders winning on outreach are doing one thing differently — referencing something specific and recent about the prospect rather than generic pain point framing. The "human signal" in the first line is what gets it read.

The Jazzband shutdown is a useful signal for open-source builders specifically. The Python collaborative community shut down after AI-generated spam PRs overwhelmed its open-membership governance model; only 1 in 10 AI-submitted PRs met project standards. Separately, curl shut down its bug bounty program after AI submission confirmation rates dropped below 5%. If a product plugs into open-source ecosystems, expect community trust standards to tighten. The free-for-all contribution model that worked in 2022 is actively breaking down.

Builder Updates

Marketing for Founders — The AI Discovery Playbook Bootstrappers Need

A hyper-practical open-source resource covering the $0-to-first-1,000-users path for SaaS and app founders (source: github.com/EdoStra/Marketing-for-Founders). Beyond the standard "launch on Product Hunt" playbook, the repository addresses AI discoverability directly — how to structure content so AI assistants surface a product when users ask relevant questions. The Reddit section is particularly useful: specific techniques for contributing meaningfully to subreddits without triggering spam detection. The section on affiliate and referral programs for bootstrapped products is underappreciated. MIT-licensed and actively maintained as of early 2026.

Kimi / Moonshot AI — What 8,000% Monthly Payment Growth Actually Looks Like

Moonshot AI is seeking $1 billion in new funding at an $18 billion valuation — more than quadrupling from its $4.3 billion valuation in late 2025, via $10 billion in early 2026 (source: Bloomberg, March 14, 2026). Stripe data shows what's underneath: January 2026 individual user payment orders grew 8,280% month-over-month; February added another 123.8%. Revenue in the first 20 days of 2026 surpassed all of 2025. Alibaba and Tencent both increased stakes in the previous round. This is what genuine product-market fit looks like at the application layer — not a gradual adoption curve, an inflection point. The velocity here is a meaningful data point for anyone tracking where Chinese AI consumer apps are heading.
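To put those percentages on one scale, here is a short worked calculation. It assumes the February figure is month-over-month growth on top of January, which is one reading of "added another 123.8%"; the valuation figures are the ones quoted above.

```python
def growth_multiplier(percent_growth: float) -> float:
    """Convert a percentage increase into a multiplier (100% growth is 2x)."""
    return 1 + percent_growth / 100


january = growth_multiplier(8280)    # 83.8x December's order count
february = growth_multiplier(123.8)  # about 2.24x January's order count
combined = january * february        # about 187.5x December, under the assumption above

# Valuation step-up: $4.3B (late 2025) to a targeted $18B.
valuation_multiple = 18 / 4.3        # about 4.19x

print(f"Jan: {january:.1f}x, Feb vs Dec: {combined:.1f}x, valuation: {valuation_multiple:.2f}x")
```

The contrast between the two multiples is the point: order volume grew by two orders of magnitude while the valuation roughly quadrupled.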

ProductHunt & Indie Highlights

Lingofable — Language learning built around stories rather than vocabulary drills. Clean positioning in an increasingly crowded space. Story-native learning has stickiness advantages over pure spaced-repetition apps; the question will be whether content quality scales. Early retention data, if it gets shared publicly, will tell the real story. (Source: ProductHunt)

Tellus — "Grandpa's stories, preserved for his grandkids." Narrow positioning, but sharp. Family memory preservation is a consistently underserved market — most tools in the category have poor mobile UX and even worse discoverability. If Tellus has nailed frictionless audio recording and automatic transcription, the addressable market is larger than the niche framing suggests. (Source: ProductHunt)

Key Takeaway: Meta reportedly weighing cuts of roughly 15,800 jobs to fund AI infrastructure, Kimi targeting an $18 billion valuation on 8,000%+ monthly payment growth: these stories look opposite but they're the same transition at different scales. Large incumbents are running a live calculation: can AI productivity gains justify replacing headcount? Startups like Kimi are showing what happens when that calculation resolves at the application layer: users paying willingly, fast. Adobe's 7.8% after-hours drop on a strong earnings beat is a useful calibration point: the market has moved past rewarding past execution. It's now pricing confidence in the AI transition specifically, and that transition hasn't been completed by most large software companies. The builders threading both ends (lean enough to ship fast, AI-native enough that users pull out a credit card) are the ones pulling ahead.

SEO & Search Ecosystem

Google March 2026 Core Update E-E-A-T: The YMYL Exemption Is Gone

The Google March 2026 core update's most structural change is one that's being underreported: E-E-A-T requirements now apply to all competitive queries, not just YMYL categories. The December 2025 policy expansion — which extended Experience, Expertise, Authoritativeness, and Trustworthiness evaluation beyond health, finance, and legal content — is now fully enforced in this update's ranking signals.

The implications are significant. Tech blogs, educational platforms, e-commerce category pages, software review sites — all of them are now scored against the same E-E-A-T framework that previously only applied to high-stakes verticals. Google isn't making exceptions for "safe" niches anymore.

The impact data is measurable: 55% of tracked sites experienced clear ranking shifts within two weeks of the update beginning February 24 (source: SEO Vendor, March 2026). Among the sites that moved up, 73% of top-ranking content featured demonstrable real-world experience or specific use cases. The content that dropped: AI-generated summaries, aggregated content from third-party sources, and what the community calls "parasitic SEO" (thin content built by recombining others' work with minimal original contribution). The sharpest ranking shift occurred around day 8 of the 19-day rollout window.

The same period closed out the first-ever Google Discover Core Update, which concluded in late February. Discover has historically operated without dedicated core updates. This one specifically rewarded topically focused, deeply original content and penalized broad-format, sensationalist posts. For publishers running aggregation-style content strategies across Discover, the impact is more immediate than the search-side changes.

One data point that deserves context: Google's Q1 ad revenue grew 14% year-over-year, the company's largest quarter ever (source: seroundtable.com). Publishers are experiencing organic traffic declines while Google's monetization machinery accelerates. The system is functioning as designed from Google's perspective: AI Overviews reduce the need for off-SERP visits while Google captures more attention time on-SERP. Understanding this dynamic is a prerequisite for making realistic traffic projections for 2026.

Content Optimization for AI Agents: The Next Frontier in AEO

Most AEO discussion focuses on making content findable and citable by AI search engines. A new practice is emerging in parallel: optimizing content delivery specifically for AI agents executing tasks on behalf of users.

The clearest documentation comes from Sentry (via cra.mr). They ran structured experiments across three properties:

On their documentation site, they serve stripped-down Markdown instead of HTML for agent traffic — no navigation bars, no JavaScript rendering requirements, no browser-specific elements. The result was measurably better tokenization and information accuracy when AI agents processed the content.

On their main site, headless bot requests are redirected away from authentication walls toward machine-readable interfaces: MCP servers, CLIs, and direct APIs. Instead of agents getting stuck parsing HTML behind login prompts, Sentry routes them to the right interface at the entry point.

On a third property called Warden, the site embeds complete bootstrap information directly in the response body, so agents can self-configure without multiple round-trips to gather prerequisites.

The technical mechanism: Accept: text/markdown request headers identify agent traffic and trigger differentiated content delivery. This is content negotiation at a new layer — one response path for humans, a separate one for machines.
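A minimal, framework-agnostic sketch of that header check follows. The function names are hypothetical and this is not Sentry's implementation; it also simplifies real content negotiation by only asking whether `text/markdown` is acceptable at all, not by ranking it against other types by q-value.

```python
def wants_markdown(accept_header: str) -> bool:
    """True when the Accept header lists text/markdown with a non-zero q-value."""
    for part in accept_header.split(","):
        media_type, _, params = part.strip().partition(";")
        if media_type.strip().lower() != "text/markdown":
            continue
        quality = 1.0  # q defaults to 1 when the parameter is absent
        for param in params.split(";"):
            name, _, value = param.strip().partition("=")
            if name.strip().lower() == "q":
                try:
                    quality = float(value)
                except ValueError:
                    quality = 0.0  # treat a malformed q-value as "not acceptable"
        return quality > 0
    return False


def negotiate(accept_header: str, html_body: str, markdown_body: str):
    """Pick the response variant and headers for one request."""
    # Vary: Accept tells caches that the body depends on the Accept header.
    if wants_markdown(accept_header):
        headers = {"Content-Type": "text/markdown; charset=utf-8", "Vary": "Accept"}
        return headers, markdown_body
    headers = {"Content-Type": "text/html; charset=utf-8", "Vary": "Accept"}
    return headers, html_body
```

A browser sending `text/html,application/xhtml+xml` gets the HTML variant, while a client sending `text/markdown` gets the stripped-down one from the same URL.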

This is early-stage practice, but the directional logic is sound. Many AI agents fetch pages without executing JavaScript. They get blocked by authentication walls. They parse HTML inefficiently compared to structured text. Sites that solve these friction points have a structural advantage as agentic workflows become a meaningful traffic category.

AEO & AI Search Watch

Google's AI Mode expansion to 53 new languages and the rollout of Canvas (document drafting and project planning inside AI Mode) to all US users signal that AI Mode is maturing from experiment to primary search surface. UCP-powered checkout integration in AI Mode further extends Google's commerce ambitions into the conversational search layer.

The citation economics are concrete: brands that appear in AI Overviews earn 35% more organic clicks and 91% more paid clicks compared to brands that don't appear in AI summaries (source: Conductor, 2026). The floor isn't zero — it's "appear in the answer or become invisible." ChatGPT now drives 87.4% of all AI referral traffic across industries. AI referral traffic comprises 1.08% of total website visits industry-wide, growing approximately 1% per month (source: conductor.com/academy/aeo-geo-benchmarks-report/).

Strategy

GEO content freshness follows a measurable decay curve. New content receives its first AI citation within 3-5 business days. Content that hasn't been updated in more than 14 days drops in AI citation priority. Content older than 90 days without significant updates sees citation volume fall sharply (sources: Search Engine Land, Go Fish Digital empirical data). For any page earning meaningful AI referral traffic, a bi-weekly refresh cycle — new data points, updated use cases, current examples with dated sources — is the maintenance floor.

The Google Search Console Brand Filter, which reached all eligible accounts March 11, enables a diagnostic that wasn't previously possible: separating branded query performance from non-branded query performance. If branded traffic is stable while non-branded traffic is declining, the problem is AI Overview cannibalization of discovery queries — a GEO problem, not a content quality problem. If both are declining, the issue is likely Core Update-related E-E-A-T scoring. The distinction matters because the solutions are different: GEO visibility requires structured content and entity authority, while E-E-A-T recovery requires demonstrated firsthand experience.
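The branded versus non-branded diagnostic reduces to a small decision rule. In this sketch the inputs are period-over-period click changes as fractions, and the 10% decline threshold is an arbitrary assumption for illustration, not a figure from Search Console or from the sources above.

```python
def diagnose_traffic(branded_change: float, non_branded_change: float,
                     decline_threshold: float = -0.10) -> str:
    """Classify a traffic drop from branded and non-branded click changes.

    Changes are fractions, e.g. -0.25 means clicks fell 25%.
    decline_threshold is a hypothetical cutoff for counting a change as a decline.
    """
    branded_down = branded_change <= decline_threshold
    non_branded_down = non_branded_change <= decline_threshold

    if branded_down and non_branded_down:
        return "both declining: likely core-update E-E-A-T scoring"
    if non_branded_down:
        return "non-branded only: likely AI Overview cannibalization (GEO)"
    if branded_down:
        return "branded only: not covered by this heuristic"
    return "no significant decline"
```

For example, `diagnose_traffic(0.02, -0.30)` lands in the GEO branch, which points toward structured content and entity authority rather than E-E-A-T recovery work.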

The combination of stricter E-E-A-T for all competitive queries and GEO freshness decay requirements closes the door on "publish and forget" as a viable content strategy. The operational model that survives is: original expertise, updated regularly, structured for AI extraction from the first paragraph.

Today's Synthesis

McKinsey deployed its internal AI platform Lilli to 43,000 consultants. An autonomous security agent broke it in under two hours through 22 unauthenticated API endpoints and a textbook SQL injection — then found all 95 system prompts were writable (source: codewall.ai / theregister.com). The attacker didn't just steal 46.5 million consulting conversations. They could rewrite what the AI tells McKinsey's consultants to believe.

In the same week the breach became public, Meta reportedly moved toward laying off 15,800 people (20% of its workforce) to convert human labor budget into the $600 billion AI infrastructure pipeline planned through 2028 (source: TechCrunch / Reuters / Fox Business). The stated logic: one person, augmented by AI, can do the work of an entire team.

These two stories sit in uncomfortable proximity. The enterprise world is running a massive substitution trade: fewer humans, more AI systems, bigger blast radius per system. Meta's calculus assumes the AI layer is reliable enough to absorb the judgment capacity being removed. Lilli shows what happens when that assumption runs ahead of reality. A hundred consultants making independent judgments distribute risk across a hundred failure points. One AI system making recommendations for all of them concentrates risk into a single attack surface — and the attack surface at McKinsey included writable system prompts. An adversary who controls what Lilli outputs controls the strategic recommendations flowing to Fortune 500 boardrooms, and nobody in the chain knows the source is compromised because internal tools carry implicit trust.

The security tooling gap makes this structural, not anecdotal. McKinsey's standard scanner — OWASP ZAP — missed the SQL injection. An AI-powered security agent found it. The defenders are running one generation behind the attackers. Charles Chen's analysis of MCP enterprise infrastructure (source: chrlschn.dev) arrives at the same conclusion from the infrastructure side: once past individual developer workflows and into organization-scale agent deployments, centralized key management, telemetry, and audit trails aren't optional — they're the minimum viable security posture. The CLI-replaces-MCP narrative that's been making rounds misreads what enterprise deployment actually requires.

Nvidia's Rubin GPU — shipping at one-tenth Blackwell's per-token inference cost (source: nvidia.com) — accelerates both sides of this dynamic. Cheaper inference means faster deployment means more AI systems in production means larger aggregate attack surface. The cost curve is compounding downward while the security infrastructure needed to govern these systems evolves linearly. That gap is widening.

In China, the application layer tells the demand side of the same story. Kimi's individual user payment orders grew 8,280% month-over-month in January 2026, pushing Moonshot AI's valuation from $4.3 billion to a targeted $18 billion in under three months (source: Bloomberg / Stripe). Users are voting with their wallets at a velocity that dwarfs typical SaaS adoption curves. The product-market fit is real. But growth at this speed accumulates security debt at proportional speed — the mobile internet era taught us that security incidents lag user growth by 12 to 18 months. AI application growth is running several multiples faster than mobile ever did.

Stanford's employment data (source: stanford.edu / time.com) provides the labor-market dimension. Software developers aged 22-25 hold 20% fewer jobs than their 2022 peak. Call center positions are down 15%. Today's YC demos — Simple AI replacing inbound phone sales reps, Letter AI compressing sales training cycles with AI role-play — are commercial products filling exactly the structural gaps this data describes. Erik Brynjolfsson's finding adds the critical nuance: workers using AI to automate existing tasks are losing ground, while workers using AI to acquire new capabilities are gaining. The variable isn't whether you use AI. It's whether AI is replacing judgment or extending it.

The Mag7 correction — down 10% from October highs, every constituent negative year-to-date (source: Bloomberg) — reflects the market asking the same question at the index level. Trillions in AI capex have been committed. The returns are not yet legible in earnings. Adobe (ADBE) beat on every metric and dropped 7.8% after-hours because the CEO departure injected uncertainty about the AI transition specifically (source: seekingalpha.com). The market has stopped paying for past execution and started pricing future AI competence. Most large software companies haven't demonstrated the latter convincingly enough.

Capability is compounding at 10x cost reduction per generation. Security infrastructure is patrolling with last generation's tools. The labor market is restructuring in real time. The speed differential between these three lines is the single largest source of systemic risk over the next twelve months — and the organizations moving to close it by building governance alongside capability are the only ones with a durable position.

Zecheng Intel Daily | March 15, 2026 Sunday