Quick Overview

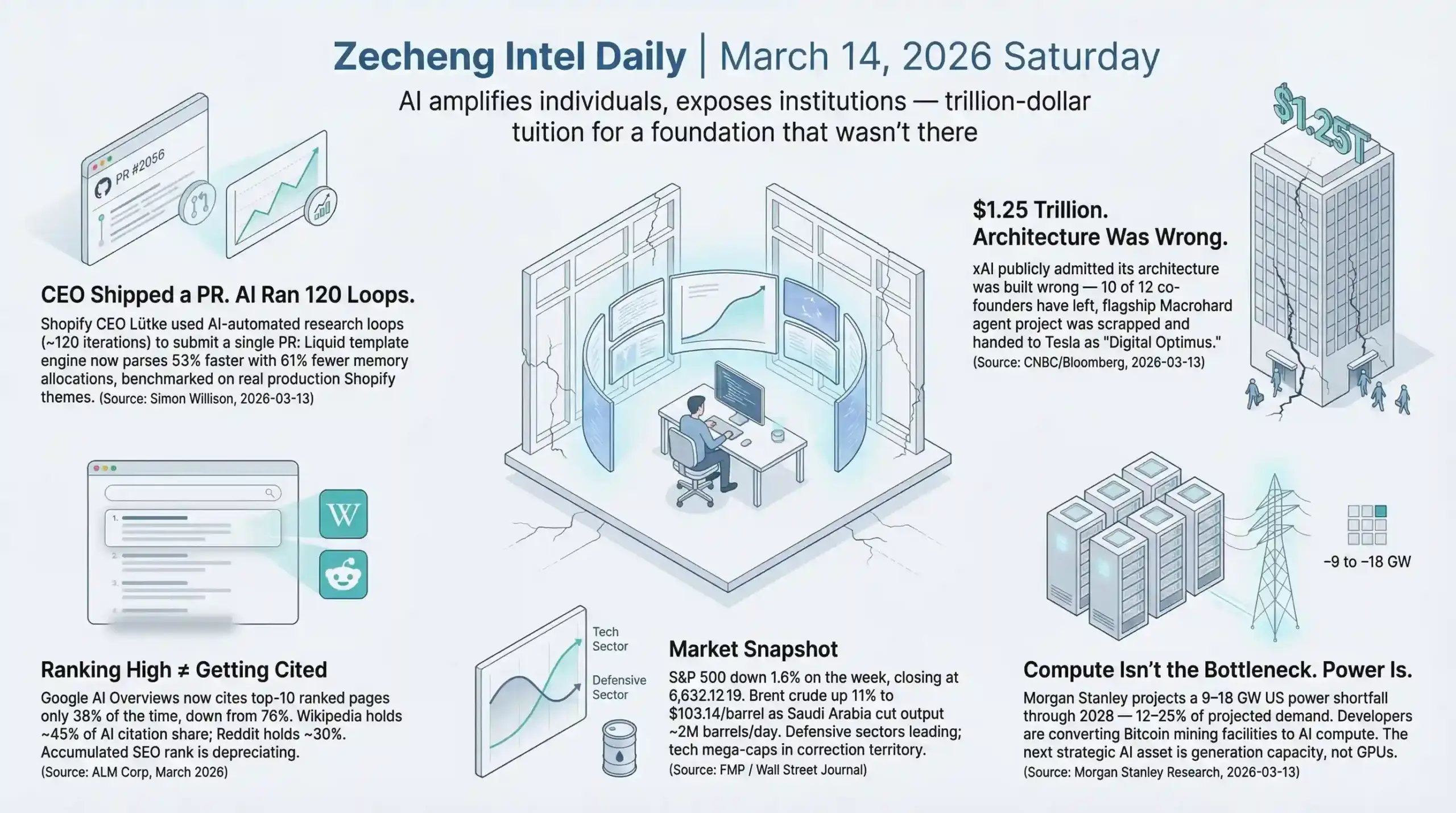

- AI & Builders: xAI publicly admitted its architecture is broken — 10 of 12 co-founders have left; Nvidia GTC (March 16) pivots to inference efficiency and agent orchestration, signaling the AI competition has shifted from "biggest model" to "most reliable system"

- SEO & Search: Google AI Overviews now cites Top-10 ranked pages only 38% of the time, down from 76% — Wikipedia (45%) and Reddit (30%) dominate AI citation sources; ranking high no longer means getting cited

- Startups & Reddit: Adobe settles with the DOJ for $150M, drawing a hard legal line around subscription dark patterns; Shopify's CEO personally submitted a GitHub PR using AI-assisted tooling that cut Liquid template parsing time by 53% — the productivity baseline for engineers is being redefined

AI & Technology

Headline: Musk Admits xAI "Wasn't Built Right" — 10 of 12 Co-Founders Gone

xAI is being torn down and rebuilt from scratch. That's not speculation — Elon Musk said it publicly (source: CNBC/Bloomberg, March 13, 2026).

The timeline makes this more striking. Six weeks ago, SpaceX and xAI merged at a $1.25 trillion valuation, and the announcement was full of ambition. Then the exits started — and kept going. Of the 12 co-founders who started xAI in 2023, only two remain: Manuel Kroiss and Ross Nordeen. The departures include Guodong Zhang (led Grok Code and Imagine projects), Toby Pohlen (led the Macrohard AI agent project — lasted 16 days), Zihang Dai, Jimmy Ba, Tony Wu, Igor Babuschkin, Kyle Kosic, Christian Szegedy, and Greg Yang. That's essentially xAI's entire technical core walking out the door.

Here's the detail that matters most: Macrohard — xAI's flagship AI agent project — is now dead. Tesla absorbed it and rebranded it "Digital Optimus," with a completely different technical approach. xAI's version worked with static screenshots. Tesla's version uses real-time video. The flagship product's core architecture was scrapped along with the team that built it.

Musk is now reaching back out to candidates who previously declined, and hiring from Cursor, the AI coding startup. That hiring signal is deliberate: Cursor's specialty is code agents, which aligns precisely with whatever xAI is rebuilding toward.

The $1.25 trillion valuation came before the admission that the architecture was wrong. That sequence matters. The merger was a capital move, not a technical milestone. And the researchers who joined xAI drawn by that valuation and momentum are now looking at a construction site instead of a high-speed train. Elite talent doesn't stay for reconstruction projects unless the upside is crystal clear — and right now it isn't.

The broader signal for the AI industry: capital can buy compute and build hype, but it can't shortcut the work of building systems that actually hold together. This will be a textbook case: a trillion-dollar AI company publicly admitting its architecture was wrong less than three years after its 2023 founding, with nearly its entire founding team gone.

This lands in the same week that Nvidia GTC 2026 is ramping up. Jensen Huang's keynote is set for March 16 at SAP Center (source: Fortune/Nvidia Newsroom, March 12, 2026), with the stated focus shifting from massive model training toward inference acceleration, agent orchestration, and networking. The Groq founding team — including CEO Jonathan Ross and President Sunny Madra — is joining Nvidia to advance licensed inference technology. Two events, same underlying signal: the AI competition is moving from "whose model has the most parameters" to "whose inference is fastest and whose agents are most reliable." That's a race xAI hasn't even entered yet, because it's still fixing the foundation.

Builder Insights

Perplexity Personal Computer: $200/month for an AI That Doesn't Clock Out

Perplexity's first Ask 2026 developer conference produced two genuinely interesting products: Personal Computer for individuals and Enterprise Computer Agent for organizations (source: Axios/VentureBeat, March 11, 2026).

Personal Computer is simple to describe and hard to dismiss: software that runs continuously on a Mac mini, giving a cloud-based AI agent persistent local access to your files, apps, and sessions. It monitors triggers, executes proactive tasks, and runs 24/7 without needing you to initiate anything. Available to Max tier subscribers at $200/month.

The enterprise version integrates directly into Slack — employees query @computer, and conversations continue across web and mobile. New developer APIs cover Search, Agent, Embeddings, and Sandbox. Perplexity Finance now connects to 40+ live financial tools including SEC filings, FactSet, S&P Global, and Coinbase.

What Perplexity is testing here is whether people will pay for persistent AI execution rather than on-demand AI querying. The capability shift is from "ask and receive" to "delegate and monitor." The $200/month price point is real money. If Max subscribers renew at high rates, this pricing model will spread fast across the agent tools space.

The architecture choice is worth noting on its own terms. Tools built around the assumption that a human is always present to initiate tasks will feel increasingly limited next to systems that run autonomously across sessions. The Mac mini as persistent local server sidesteps cloud latency and privacy concerns while keeping the agent grounded in a local environment — a deliberate design decision, not a hardware limitation.

Sora 2: The Video Quality Improved. The Bigger Move Is ChatGPT Distribution

OpenAI released Sora 2 with improved physical accuracy, realism, and control (source: OpenAI/PCWorld, March 12, 2026). The demos are legitimately impressive — Olympic gymnastics, complex water physics simulations, synchronized dialogue with sound effects. Prior systems struggled with exactly these challenges.

But the more consequential announcement is the planned ChatGPT integration. OpenAI is folding Sora's core capabilities directly into the ChatGPT interface. Hundreds of millions of users will be able to generate video from a chat prompt without switching tools. Sora will keep its standalone app, but the distribution advantage is in ChatGPT.

Google Veo, Runway, Pika, and Kling are all capable. None of them have a ChatGPT-scale entry point. This is increasingly how AI tool competition works: the technical gaps between leading products are shrinking, and distribution advantages are widening. The winner isn't necessarily who builds the best model — it's who owns the interface people already use daily.

Other AI Updates

Morgan Stanley: Major AI Breakthrough Coming in H1 2026 — But the Power Grid Won't Keep Up

Morgan Stanley published a research note flagging an imminent AI leap driven by unprecedented compute accumulation at leading labs (source: Fortune/Morgan Stanley Research, March 13, 2026). The core finding: applying 10x compute to LLM training effectively doubles model capability. OpenAI's GPT-5.4 Thinking already hit 83% on the GDPVal expert benchmark.
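Taken at face value, the "10x compute doubles capability" finding implies a power law. Here is a minimal sketch of that arithmetic, assuming the relationship holds uniformly across scales (the note as summarized here does not say that it does). The function name `capability_multiplier` is made up for illustration.

```python
import math

# Assumption: capability grows as compute ** k, with k fixed so that
# 10x compute yields 2x capability. That gives k = log10(2), about 0.301.
SCALING_EXPONENT = math.log10(2)


def capability_multiplier(compute_multiplier: float) -> float:
    """Implied capability gain for a given training-compute gain."""
    if compute_multiplier <= 0:
        raise ValueError("compute_multiplier must be positive")
    return compute_multiplier ** SCALING_EXPONENT


if __name__ == "__main__":
    for factor in (10, 100, 1000):
        gain = capability_multiplier(factor)
        print(f"{factor}x compute -> {gain:.2f}x capability")
```

Under this assumption, 100x compute buys roughly 4x capability and 1,000x buys roughly 8x, which is why each further doubling demands so much more hardware and power.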

The warning is equally direct. Morgan Stanley's Intelligence Factory model projects a net US power shortfall of 9-18 gigawatts through 2028 — a 12-25% deficit. Developers are already converting Bitcoin mining operations into high-performance computing centers and deploying fuel cells to stay ahead of grid limitations.
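The two ranges can be cross-checked against each other. This is a rough sketch, assuming the low ends pair up (9 GW with 12%) and the high ends pair up (18 GW with 25%), and that the percentage is the shortfall as a share of demand. The summary above does not spell out either point, and `implied_demand_gw` is a hypothetical helper.

```python
def implied_demand_gw(shortfall_gw: float, deficit_fraction: float) -> float:
    """Total demand implied by a shortfall that is a given fraction of demand."""
    if not 0 < deficit_fraction <= 1:
        raise ValueError("deficit_fraction must be in (0, 1]")
    return shortfall_gw / deficit_fraction


if __name__ == "__main__":
    # Assumed pairing: 9 GW with 12%, 18 GW with 25%.
    low_case = implied_demand_gw(9, 0.12)
    high_case = implied_demand_gw(18, 0.25)
    print(f"low case:  about {low_case:.0f} GW of demand")
    print(f"high case: about {high_case:.0f} GW of demand")
```

Both cases land in the low-to-mid 70s of gigawatts. If the pairing assumption is right, that would suggest the spread in the forecast comes mostly from the supply side rather than from demand.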

Here's the thing: compute is no longer the bottleneck. Power is. The next strategic asset in AI infrastructure isn't GPUs. It's generation capacity and transmission infrastructure. The companies that secured long-term power contracts in 2024 and 2025 have a structural advantage that's hard to replicate quickly. This also reframes the strategic value of energy companies in ways that haven't been fully priced in yet.

OpenClaw's Crypto Experiment Blows Up — "Uninstall OpenClaw" Trending at $43.55 a Pop

OpenClaw, the open-source AI agent framework that accumulated 260,000+ GitHub stars in four months, produced an unusual viral moment in mid-March 2026: users on Alibaba's Xianyu secondhand marketplace began listing OpenClaw removal services for $43.55 (source: BeInCrypto/KuCoin/Odaily, March 13, 2026).

The story: Chinese users plugged OpenClaw into exchange APIs and let it trade crypto autonomously. Most experiments ended in losses or security breaches. The ClawHavoc supply-chain attack from late 2025 had already seeded the OpenClaw skill library with 1,184 malicious skills, and 17% of third-party skills involved cryptocurrency theft. Bitdefender Labs has issued a high-risk warning.

This matters beyond the crypto losses. It's a stress test of the entire premise behind open-source agent frameworks: that giving an AI system access to financial APIs is a reasonable thing to do in 2026 without serious security infrastructure in place. It isn't. The gap between "technically possible" and "safe to deploy" in agent systems is wider than the builder community has been treating it.

Crypto.com introduced an "Agent Key" mechanism for controlled API permissions in response. That's the right instinct. But the sequence — widespread deployment first, security architecture second — is exactly backwards for systems that can autonomously move money.
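To make "controlled API permissions" concrete, here is a minimal sketch of a scoped, deny-by-default credential. It is an illustration only and not Crypto.com's actual Agent Key design. `AgentKey`, `authorize`, the action names, and the spend cap are all hypothetical.

```python
from dataclasses import dataclass
from typing import FrozenSet


@dataclass(frozen=True)
class AgentKey:
    """Hypothetical scoped credential handed to an agent instead of a full API key."""

    allowed_actions: FrozenSet[str]
    max_order_usd: float


def authorize(key: AgentKey, action: str, amount_usd: float = 0.0) -> bool:
    """Deny by default: allow only listed actions within the spend cap."""
    if action not in key.allowed_actions:
        return False
    if amount_usd < 0:
        return False
    return amount_usd <= key.max_order_usd


if __name__ == "__main__":
    key = AgentKey(frozenset({"read_balance", "place_order"}), max_order_usd=100.0)
    print(authorize(key, "place_order", 50.0))   # in scope, under the cap
    print(authorize(key, "place_order", 500.0))  # in scope, over the cap
    print(authorize(key, "withdraw", 1.0))       # action was never granted
```

The design choice worth copying is the default: anything not explicitly granted is refused, so a compromised skill cannot withdraw funds just because the agent can place small orders.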

Meta Acquires Moltbook: An AI Agent Social Network

Meta confirmed the acquisition of Moltbook on March 10 (source: TechCrunch/Bloomberg/CNBC, March 10, 2026) — a Reddit-like platform where AI agents using OpenClaw could post, comment, upvote, and downvote while human creators watched. Founders Matt Schlicht and Ben Parr are joining Meta Labs, starting March 16.

Meta's stated interest is in Moltbook's "always-on agent directory" — infrastructure for connecting agents in a multi-agent environment. Given that OpenClaw is simultaneously blowing up in crypto circles and getting acquired into Meta's stack, there's a tension worth watching: the same agent framework producing security breaches in financial applications is becoming a building block for Meta's multi-agent ambitions.

Microsoft Copilot Health Centralizes Medical Records — With "Not a Diagnostic Tool" Disclaimer

Microsoft launched Copilot Health on March 12 (source: Microsoft AI Blog, March 12, 2026) — a secured space within Copilot that aggregates health records via HealthEx (connecting 50,000+ US hospitals and providers), wearables including Apple Watch (AAPL), Oura, and Fitbit, medications, doctor notes, and test results. The product explicitly states it is not intended to diagnose, treat, or prevent disease. Data is isolated from general Copilot, not used for model training, and deletable by users. English-only, 18+, phased rollout via waitlist.

The technical architecture here is defensive — every design choice reduces regulatory exposure. "We aggregate but don't diagnose" is Microsoft (MSFT) testing how far it can operate in healthcare data before running into regulatory resistance. How the FDA and HHS respond to Copilot Health will set a precedent for what AI companies can do in this space.

Analysis

The stories from March 13 share a common thread: AI is transitioning from a rapid expansion phase into a foundation-rebuilding phase, and the cracks are visible in multiple directions simultaneously.

xAI is the most obvious case — a company that raised at trillion-dollar valuations, ran fast, and is now publicly acknowledging the foundations weren't solid. This isn't an isolated failure. The same dynamic exists at varying degrees across companies that prioritized speed-to-market over architectural soundness. The difference is that most haven't been forced to say it out loud yet.

The power shortage projection adds a physical constraint to what's been an almost purely computational discussion. Morgan Stanley isn't wrong that AI capabilities will keep advancing — GPT-5.4 Thinking at 83% on expert benchmarks is real progress. But if the electrical grid can't keep up, the race to deploy AI at scale hits a wall that no amount of fundraising can immediately solve.

OpenClaw's security problems expose the trust deficit in the agent space. Before agent frameworks can be genuinely useful for high-stakes tasks — finance, healthcare, legal — the security architecture needs to match the ambition. Right now it doesn't. That gap is where the next generation of infrastructure tools will be built. Companies solving agent security, permission management, and supply-chain integrity for AI systems will have a clear market in 2026 and beyond.

The opportunity isn't in building the most exciting AI-native product. It's in building the most reliable one. In a phase where the headline story is a trillion-dollar company admitting its architecture failed and an open-source framework getting exploited for crypto theft, reliability has become the scarce resource.

Business & Startups

Adobe's $150 Million Settlement Draws a Hard Line on Subscription Dark Patterns

Adobe agreed on March 13 to pay $150 million to settle a US Department of Justice lawsuit (DOJ press release, 2026-03-13): $75 million in civil penalties plus $75 million in consumer credits. The charge: Adobe's "annual paid monthly" Creative Cloud plans buried early termination fees — sometimes reaching hundreds of dollars — inside fine-print links, while routing cancellation requests through a deliberately confusing multi-step process designed to exhaust users into giving up.

The settlement terms carry more weight than the dollar amount. Adobe must now disclose any early termination fee upfront and explain how it's calculated; must remind customers before a free trial converts to paid (for trials longer than seven days); and must provide a simple, direct cancellation path. Adobe doesn't admit wrongdoing, but it's writing a nine-figure check.

The case ran from June 2024 through March 2026 — nearly two years. That's the DOJ signaling it will see subscription abuse cases through to the end. For bootstrapped SaaS founders and e-commerce subscription operators: annual billing with complex cancellation flows is no longer a gray area. It's a documented liability.

Digg Collapsed in Two Months — AI Bots Overwhelmed Every Standard Defense

Digg shut down its app and laid off significant staff on March 13, just under two months after opening its public beta on January 14 (TechCrunch, 2026-03-13). CEO Justin Mezzell's memo was unusually candid: within hours of launch, SEO spammers discovered that the relaunched Digg still carried Google domain authority. AI agents and automated accounts flooded in. The team deployed internal tools and industry-standard external defenses. None of it was enough.

His words: "When you cannot trust that the votes, comments, and interactions you see are real, you've lost the foundation of a community platform."

Kevin Rose — who co-founded Digg over 20 years ago and bought it back with Reddit co-founder Alexis Ohanian last year — says he'll return full-time from the first week of April to rebuild with a smaller team.

The takeaway isn't about Digg specifically. It's a verifiable data point: a platform with real Google authority, built by experienced founders with standard anti-spam infrastructure, got overwhelmed by AI bots in under sixty days. For anyone building community tools or UGC platforms in 2026, "AI spam is a future problem" is no longer a valid framing.

Reddit Pain Point Analysis

Across r/Entrepreneur, r/SaaS, and r/startups this week, three threads keep surfacing.

Cold outreach is entering a trust crisis. Cold email benchmarks for 2026 show typical response rates of 1-5% for most campaigns (Sopro research, 2026), and a conversion rate above 2% is considered solid. But the discussion isn't whether the numbers work — it's about the signal-to-noise ratio collapsing as AI-generated outreach saturates every channel. The emerging consensus among founders who are still getting responses: go manual for new segments, prioritize depth over volume, focus exclusively on prospects who've been active online in the last 30 days.

Shipping threshold optimization is getting fresh attention. @ShipScout flagged it directly: with inflation squeezing margins, testing free shipping thresholds is one of the fastest practical levers for e-commerce operators, especially on lower AOV products. The logic is simple — the threshold set when margins looked different probably needs revisiting.

The platform trust question is getting personal. After Digg's collapse, developers building community tools are asking: what's the actual minimum viable anti-bot infrastructure in 2026? The thread consensus is uncomfortable — there may not be a solution that's both affordable and robust, which means platform trust is increasingly a structural problem rather than an engineering one.

Builder Updates

Shopify's CEO Shipped a Performance PR Using AI-Assisted Code Research (Simon Willison, 2026-03-13)

Tobias Lütke submitted GitHub PR #2056 against Liquid, Shopify's open-source Ruby template engine — and got 53% faster parse+render speed with 61% fewer memory allocations, benchmarked against real Shopify themes in production. The method: running approximately 120 automated experiment loops using a variant of Andrej Karpathy's autoresearch system (edit → commit → test → benchmark → keep or discard → repeat). The core insight was allocation-driven profiling: find where the code creates objects unnecessarily, eliminate them, defer the rest. One concrete change: replacing a StringScanner tokenizer with String#byteindex.
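The edit → test → benchmark → keep-or-discard cycle can be sketched as a greedy loop. This is an illustration of the general pattern under stated assumptions, not the autoresearch system or Lütke's actual tooling. `keep_or_discard_loop` and its callback parameters are hypothetical, and the commit step is omitted for brevity.

```python
from typing import Callable, Tuple, TypeVar

T = TypeVar("T")


def keep_or_discard_loop(
    baseline: T,
    propose: Callable[[T], T],
    passes_tests: Callable[[T], bool],
    benchmark: Callable[[T], float],
    iterations: int,
) -> Tuple[T, float]:
    """Greedy loop: keep a candidate only if tests pass and the score improves.

    Lower benchmark scores are better (for example, seconds per render).
    """
    best, best_score = baseline, benchmark(baseline)
    for _ in range(iterations):
        candidate = propose(best)        # edit
        if not passes_tests(candidate):  # test
            continue                     # discard: behaviour broke
        score = benchmark(candidate)     # benchmark
        if score < best_score:
            best, best_score = candidate, score  # keep
    return best, best_score


if __name__ == "__main__":
    # Toy stand-in: the "program" is just an allocation count to reduce.
    best, score = keep_or_discard_loop(
        baseline=10,
        propose=lambda n: n - 1,
        passes_tests=lambda n: n >= 5,  # below 5 the toy "tests" fail
        benchmark=float,
        iterations=120,
    )
    print(best, score)
```

The test gate runs before the benchmark on purpose: a faster candidate that breaks behaviour never gets a chance to be kept.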

Liquid powers every Shopify storefront. A CEO at a company with $100B+ market cap using AI-assisted automated research to write open-source performance patches is not a curiosity — it's a data point about where the engineering productivity floor is in 2026. The gap between what one person can produce with AI tooling versus without it is now visible in commit histories.

NanoClaw + Docker: Six Weeks from Hacker News to Enterprise Partnership (TechCrunch, 2026-03-13)

Gavriel Cohen posted NanoClaw on Hacker News six weeks ago after building it over a weekend. It accumulated 22,000 GitHub stars, 4,600 forks, and 50+ contributors. On March 13, Docker announced a partnership: NanoClaw AI agents will run inside Docker Sandboxes — disposable MicroVM containers providing OS-level isolation. Cohen shut down his AI marketing startup to go full-time on NanoClaw through a new company called NanoCo. Docker has 80,000 enterprise customers.

The interesting structural point: this wasn't a traditional fundraise-build-sell path. Open-source traction pulled the enterprise deal, because Docker needed the use case. Enterprise security requirements for AI agent isolation are creating pull, not just push. That's a different kind of go-to-market than most founders are used to building for.

Davie Fogarty on Revenue Transparency and Shark Tank Valuation Dynamics (YouTube / Twitter @daviefogarty)

Fogarty's store hit a total revenue figure of $885,349.45 — published publicly, as he's done consistently. He also broke down a Shark Tank pitch worth studying: a clothing company (t-shirts, socks, underwear) with a 25% average COGS, showing a revenue trajectory of $800K, $900K, and $1M over the prior three months. They walked in asking for $500K at 2.5% equity — implying a $20M valuation. Every shark offered $500K for 10% (some with step-down clauses to 5% if repaid within two years). Nobody touched 2.5%.
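The valuation gap is easy to verify. Here is a short sketch of the arithmetic, using only the figures quoted above; `implied_valuation` is a hypothetical helper name.

```python
def implied_valuation(investment_usd: float, equity_fraction: float) -> float:
    """Post-money valuation implied by an investment for a given equity stake."""
    if not 0 < equity_fraction <= 1:
        raise ValueError("equity_fraction must be in (0, 1]")
    return investment_usd / equity_fraction


if __name__ == "__main__":
    ask = implied_valuation(500_000, 0.025)       # founders' ask: 2.5%
    offer = implied_valuation(500_000, 0.10)      # sharks' offer: 10%
    step_down = implied_valuation(500_000, 0.05)  # step-down clause: 5%
    print(f"ask:       ${ask:,.0f}")
    print(f"offer:     ${offer:,.0f}")
    print(f"step-down: ${step_down:,.0f}")
    print(f"ask is {ask / offer:.0f}x the offer")
```

The founders priced the company at four times what every shark was willing to pay, and even the most generous step-down clause only closes half of that gap.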

The founder anchored high and lost the room. The revenue trend was strong. The negotiation framing killed the deal. What matters is the ratio between growth proof and valuation ask: sharks don't care how fast a company is growing if the ask doesn't leave room for their risk math. Honestly, I've seen the same pattern in smaller conversations: strong numbers, wrong framing, confused silence.

ProductHunt & Indie Highlights

Scindo: AI that captures decisions, drafts plans, and opens matching pull requests automatically. Targets the gap between product decisions and engineering execution — which is real for any team where "what we agreed to" and "what got built" frequently diverge.

GradPipe: Finds engineers who never apply by scanning actual GitHub code quality. Hiring-from-evidence rather than hiring-from-resumes positions directly against the resume-filter problem, which is getting worse as AI-generated CVs inflate volume without improving signal.

Pre: "Makes anybody an operator" — still light on public detail, but the positioning is interesting in an AI agent environment where the operator/user permission distinction is becoming structurally important.

Key Takeaway: Adobe's settlement, Digg's collapse, and NanoClaw's six-week arc are three versions of the same story. Trust infrastructure is the bottleneck of 2026 — in subscription billing, in community platforms, in AI agent execution environments. The builders who will compound this year are the ones treating trust as an engineering problem, not a marketing one.

SEO & Search Ecosystem

Google AI Citation Collapse: Top-Ranked Pages Have Lost Half Their Influence

Pages ranking in Google's top 10 are now cited by AI Overviews only 38% of the time, down from 76% previously — a direct halving of the correlation between ranking and citation (ALM Corp, March 2026). The new citation leaders: Wikipedia at approximately 45% and Reddit at approximately 30% of all AI Overview references. This confirms what many SEOs suspected but couldn't prove: Google's AI layer is not pulling answers from ranked pages — it's going directly to community-validated authority platforms.

The self-preference dimension compounds this. Google's AI Mode now references its own properties — YouTube, Maps, Knowledge Graph — in 17.42% of answers, up from just 5.7% in June 2025 (Search Engine Land, March 2026). The search engine is using its AI layer to create a closed information loop. Third-party content optimized for Google's ranking algorithm now competes against Google's own assets in a game where Google controls the rules.

Industry-level data from Seer Interactive shows the AI Overviews impact varies dramatically by vertical. Education queries saw AI Overview coverage jump from 18% to 83%. B2B Tech: 36% to 82%. Restaurants: 10% to 78%. The meaningful resistance zones are brand searches and local queries, where AI Overview triggers remain below 5%. For site owners with brand equity and local presence, this is the most defensible traffic segment right now.

The toll on publishers is no longer incremental. It's structural. Digital Trends saw monthly traffic collapse from 8.005 million clicks to 264,000, a 97% decline (position.digital, 2026). Media executives globally project a 43% drop in search referral traffic within three years (Reuters Institute, January 2026). The zero-click rate directly attributable to AI Overviews sits at 72% (Search Engine Land / Seer Interactive). Apart from the Reuters Institute forecast, these are measured figures that describe the current state of the market.
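The headline percentages can be reproduced from the raw figures. This is a quick sanity check using only numbers quoted in this section; `percent_decline` is a hypothetical helper name.

```python
def percent_decline(before: float, after: float) -> float:
    """Percentage drop from `before` to `after`."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (before - after) / before * 100


if __name__ == "__main__":
    # Digital Trends monthly clicks: 8.005 million down to 264,000.
    traffic_drop = percent_decline(8_005_000, 264_000)
    print(f"Digital Trends traffic: {traffic_drop:.1f}% decline")

    # Top-10 citation rate in AI Overviews: 76% down to 38%.
    citation_drop = percent_decline(76, 38)
    print(f"Top-10 citation rate: {citation_drop:.0f}% decline")
```

The traffic figure works out to just under 97%, and the citation rate is an exact halving, so both claims hold up against their own inputs.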

The March 2026 core update added another layer of complexity. Google issued its first-ever Discover core update alongside the broader algorithm rollout, affecting 800 million+ users. Sites with strong E-E-A-T signals saw average visibility improvements; AI-generated thin content continues absorbing ranking penalties. But the core update matters less now than the AI citation question — ranking is a prerequisite, not a destination.

Builder Insights

Digg's collapse is a live case study in domain authority weaponization

Kevin Rose and Reddit co-founder Alexis Ohanian relaunched Digg publicly January 14, 2026. It shut down March 13 — less than two months of public operation — after what CEO Justin Mezzell described as an AI bot invasion that made the platform's engagement untrustworthy. His statement was unusually direct: "When the Digg beta launched, we immediately noticed posts from SEO spammers noting that Digg still carried meaningful Google link authority. Within hours, sophisticated AI agents and automated accounts were running rampant." The team banned tens of thousands of accounts and deployed multiple AI defense systems, but couldn't restore confidence in vote and comment authenticity.

The lesson is structural: historical domain authority is no longer a moat — it's a targeting signal. The infrastructure cost of AI-powered SEO spam has dropped to near-zero. Any dormant platform with accumulated link equity will face coordinated bot pressure within hours of reactivation. Community trust systems can't be inherited from a domain's past — they require active construction and ongoing defense. Kevin Rose is returning full-time April 1 to rebuild.

SerpApi vs. Reddit: The open-web data war reaches court

SerpApi filed to dismiss Reddit's amended DMCA complaint in March 2026, with three core arguments: Reddit doesn't own most of the user content at issue (Reddit's own terms of service grant only a non-exclusive license, with users retaining copyright); SerpApi accessed Google Search public pages, not Reddit directly; and snippet data — dates, short fragments, addresses — isn't copyrightable under current law (Search Engine Land). If the court agrees, it would legally affirm third-party access to public content via Google's index — a significant precedent for the entire SEO data tooling industry. Note that Google separately sued SerpApi for alleged circumvention of licensed content protections. Both cases circle the same fundamental question: who controls access to publicly indexed content on the open web.

AEO & AI Search Watch

The AI chatbot citation picture is shifting alongside Google's own changes. ChatGPT holds 68% of AI chatbot market share, down from 87.2%. Gemini climbed to 18.2%, up from 5.4% (Q1 2026). For content strategy, this split matters: Gemini is more tightly integrated with Google's Knowledge Graph and favors E-E-A-T signals consistent with Google's own ranking criteria, while ChatGPT pulls more from licensed web content via its OpenAI data partnerships. Perplexity's Ask 2026 developer conference launch of Perplexity Finance — tapping FactSet, S&P Global, and SEC filings — signals that AI search is moving toward premium structured data. For content creators relying on freely indexed general content, AI systems are increasingly prioritizing institutional and community-verified sources over individual publisher content.

Strategy

The optimization target has shifted: ranking is a prerequisite, being cited is the goal.

Structure every key article with a definitive answer in the first paragraph, in "X is Y" format. AI models extract primarily from the first 150 words — if content buries the conclusion, it won't be cited regardless of overall quality. Follow every data point with a source in parentheses; citability correlates with verifiability.

Build presence on Reddit, Wikipedia, and Quora for topics where AI citation coverage matters. Wikipedia and Reddit currently dominate AI Overview references, at approximately 45% and 30% respectively. Contributing genuinely useful information to Reddit threads and Wikipedia talk pages in relevant categories is now a direct SEO strategy, not a side project.

Focus brand-building on brand search terms and local queries, where AI Overview coverage remains under 5%. This is the most defensible traffic category in the current environment — AI doesn't summarize brand intent the same way it summarizes informational queries.

Track Gemini citation rates separately from ChatGPT. The two systems favor different content signals, and optimizing for one may not transfer to the other. As Gemini's market share grows, E-E-A-T alignment with Google's criteria becomes more valuable.

Zero-click doesn't mean zero opportunity. Brands that appear in AI Overviews see 35% higher organic CTR and 91% higher paid CTR on queries where they are cited versus where they are not (ALM Corp, 2026). Being cited in AI answers is the new position one — and it's won through authority signals, not keyword density.
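The first recommendation in this section (lead with the answer, follow every data point with a source) can be checked mechanically. Here is a crude sketch, assuming that any parenthetical counts as a source and that sentences end in `.`, `!`, or `?`. The 150-word window comes from the claim above, and both function names are hypothetical.

```python
import re
from typing import List

SENTENCE_BREAK = re.compile(r"(?<=[.!?])\s+")
HAS_DIGIT = re.compile(r"\d")
HAS_PARENTHETICAL = re.compile(r"\([^)]+\)")


def lead(text: str, limit: int = 150) -> str:
    """Return the first `limit` words, the window the answer should fit in."""
    return " ".join(text.split()[:limit])


def unsourced_stat_sentences(text: str) -> List[str]:
    """Flag sentences that contain a number but no parenthetical source."""
    sentences = SENTENCE_BREAK.split(text.strip())
    return [
        sentence
        for sentence in sentences
        if HAS_DIGIT.search(sentence) and not HAS_PARENTHETICAL.search(sentence)
    ]


if __name__ == "__main__":
    draft = (
        "Top-10 pages are cited 38% of the time (ALM Corp, 2026). "
        "Reddit holds 30% of references."
    )
    for sentence in unsourced_stat_sentences(lead(draft)):
        print("needs a source:", sentence)
```

It is a heuristic, not a citation checker: it will miss sources given in running text and will flag dates or version numbers, so treat its output as a prompt for a manual pass.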

Today's Synthesis

Tobias Lütke, CEO of a company worth over $100 billion, used AI-assisted automated research to submit a single pull request that made Shopify's template engine 53% faster with 61% fewer memory allocations — 120 experiment loops, one person, no team required (source: Simon Willison, March 13, 2026). The same day, xAI — valued at $1.25 trillion after merging with SpaceX — publicly admitted its architecture was built wrong, with 10 of its 12 co-founders gone and its flagship Macrohard agent project scrapped entirely (source: CNBC/Bloomberg).

One individual with AI tools produced a measurable infrastructure improvement at production scale. A trillion-dollar organization with massive compute investment produced an admission of failure. That asymmetry is the defining characteristic of where AI competition actually stands in March 2026.

The pattern repeats across every domain covered today. Digg had two decades of accumulated Google domain authority — a textbook moat by pre-AI standards. Within sixty days of relaunching, AI-powered spam bots overwhelmed every defense system the team deployed, making community engagement untrustworthy. The platform shut down. CEO Justin Mezzell's assessment was blunt: trust, once broken by automated manipulation, couldn't be restored with automated defenses. Kevin Rose is coming back to rebuild from scratch in April, with a smaller team.

Google's own search environment tells a parallel story. ALM Corp's March 2026 data shows pages ranking in Google's top 10 are now cited by AI Overviews only 38% of the time, down from 76%. Meanwhile, Google's AI Mode citations of its own properties — YouTube, Maps, Knowledge Graph — tripled from 5.7% to 17.42% in nine months (Search Engine Land). The search engine is building a closed information loop. Accumulated SEO authority is depreciating in real time, not because the content got worse, but because the system that used to reward it has changed what it values.

Morgan Stanley's research note adds a physical dimension to what has been an almost entirely computational discussion. Their Intelligence Factory model projects a 9-18 gigawatt US power shortfall through 2028, representing 12-25% of projected demand. The bottleneck in AI infrastructure has shifted from compute to electricity. Developers are already converting Bitcoin mining facilities into AI compute centers and deploying fuel cells. Even companies with sound architecture face a hard physical constraint that fundraising cannot immediately solve.

What connects Lütke's PR, xAI's architectural failure, Digg's collapse, and the power grid projection is a single structural truth: accumulated resources — capital, compute, domain authority, search rank — are depreciating faster than they can be deployed. xAI raised at trillion-dollar valuations before the architecture worked. Digg inherited domain authority that attracted attackers instead of users. Google's top-ranked pages lost half their AI citation value. Morgan Stanley says even the physical infrastructure can't keep pace with demand.

The counterweight to all of this is what Lütke demonstrated: an individual with the right tools and a clear problem produced more measurable value than organizations spending orders of magnitude more. NanoClaw tells the same story from a different angle — a weekend project that accumulated 22,000 GitHub stars and a Docker enterprise partnership in six weeks, while the founder's previous startup with conventional GTM couldn't move that fast.

AI is not making institutions more powerful. It is making individuals more leveraged and institutions more fragile. The organizations that survive this transition will be the ones that operate more like Lütke writing a PR than like xAI burning through co-founders — small surface area, tight feedback loops, outcomes measured in commits rather than press releases. The ones that don't will keep discovering, at increasingly expensive scale, that the foundation matters more than the valuation sitting on top of it.

Comments on "Zecheng Intel Daily | March 14, 2026 Saturday": 0