Quick Overview

- AI & Builders: Microsoft is evaluating legal action against OpenAI and Amazon over an exclusive AWS cloud distribution deal that may breach its 2019 and 2023 contracts with OpenAI; Nvidia CEO Jensen Huang confirmed at GTC that H200 sales to China are resuming, with ByteDance and Alibaba each reportedly positioned to order 200K+ units, ending a roughly 10-month freeze.

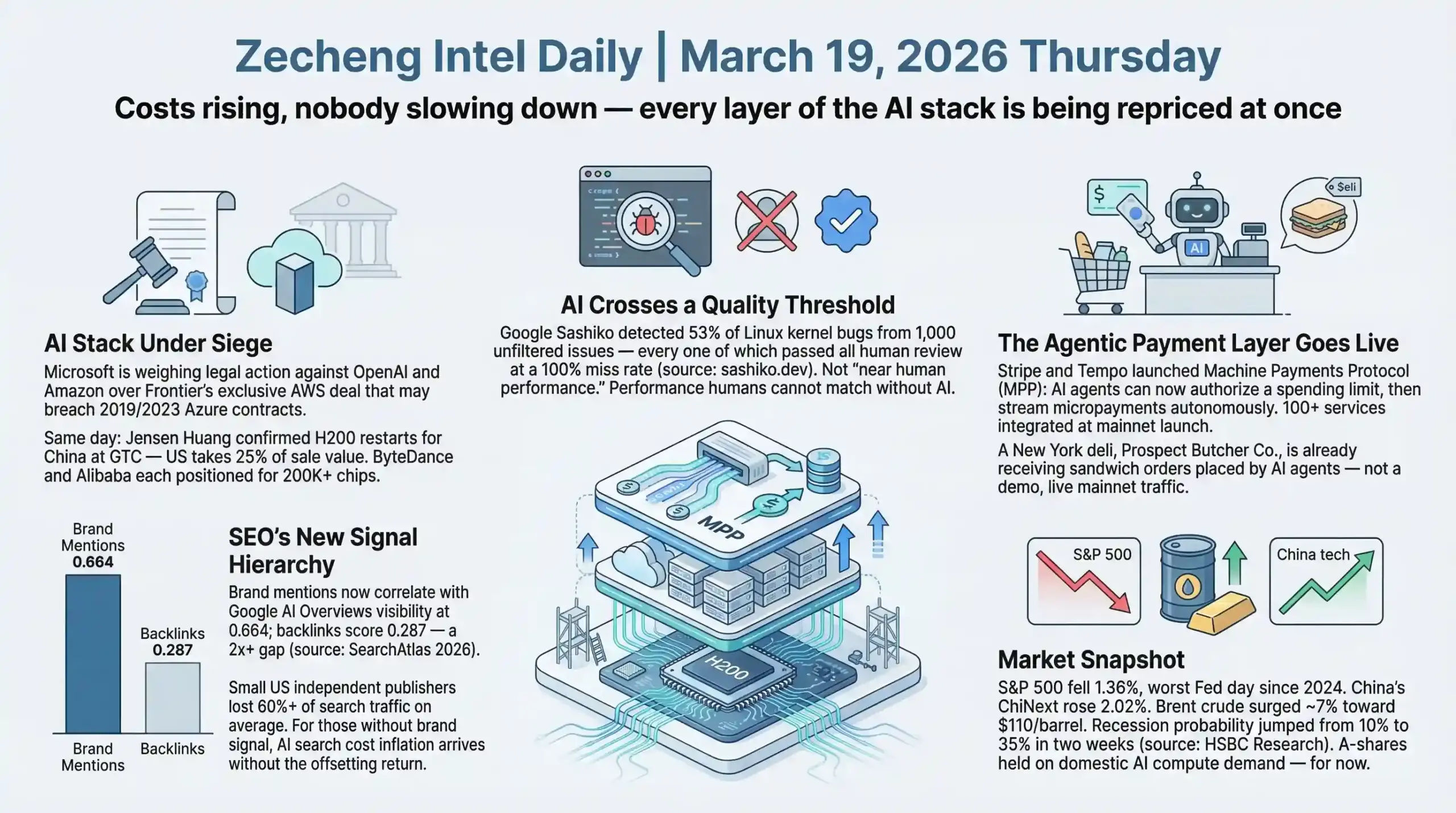

- SEO & Search: Google AI Overviews now appear in 14% of shopping queries (Quantifimedia), up from 2.1% in November 2025; brand mention correlation with AI Overview visibility stands at 0.664 versus 0.287 for backlinks — a 2.3x gap that is reshaping off-page SEO strategy (SearchAtlas 2026 Off-page Signals Report).

- Startups & Reddit: Stripe and Tempo launched Machine Payments Protocol (MPP), the first open standard enabling AI agents to pay autonomously via sessions-based micro-payments; Replit closed a $400M Series D at a $9B valuation — triple its $3B mark from six months ago — signaling that vibe coding is now a serious asset class.

AI & Technology

Headline: Microsoft Draws Legal Line Over OpenAI's $50B AWS Deal — Every Layer of the AI Stack Is Now Contested

Microsoft is weighing legal action against OpenAI and Amazon over a deal making AWS the exclusive third-party cloud distributor for OpenAI's Frontier enterprise platform. Amazon committed up to $50 billion to OpenAI — $15 billion already deployed as part of the $110 billion February 2026 funding round — with the remainder contingent on future milestones including a possible IPO. The dispute centers on Microsoft's 2019 and 2023 agreements with OpenAI, which specify that "API products developed with third parties will be exclusive to Azure." Microsoft's internal position, reported by FT: "If Amazon and OpenAI want to take a bet on the creativity of their contractual lawyers, I would back us, not them."

OpenAI's counter: Frontier is a distribution layer, not a direct API product. Stateless API calls from Amazon and third parties still route through Azure, keeping the arrangement technically within contract bounds. The argument sounds like it was written for a courtroom, not a partnership. Whether it holds legally is untested. What's already established is the structural contradiction: OpenAI is simultaneously bound to Microsoft ($13B invested), Amazon ($50B committed), Nvidia, and SoftBank — all competing interests. Whoever controls API call routing controls both the enterprise revenue stream and the data flow. Frontier's exclusive AWS distribution is cutting directly into what Microsoft staked its AI bet on.

Simultaneously, Jensen Huang confirmed at GTC on March 17-18 that Nvidia is restarting H200 chip production for Chinese customers after receiving US export licenses. His exact words: "We've been licensed for many customers in China for H200. We have received purchase orders from many customers, and we're in the process of restarting our manufacturing." (Source: CNBC) This ends a roughly 10-month freeze on advanced chip exports. The licensing framework, formalized in January 2026, requires that the US government receive 25% of the value of H200 sales. ByteDance and Alibaba are each reportedly positioned to order 200,000+ chips pending Beijing confirmation (Source: Tom's Hardware). The Blackwell and Rubin series remain banned.

China market signal: Alibaba Cloud GPU rental prices rose 15-30% month-on-month by late February 2026 as domestic supply remained constrained before the restart (Source: Futunn). The H200 return doesn't eliminate China's domestic chip development trajectory — it reframes the timeline. Short-term compute access improves; long-term sovereign infrastructure development doesn't reverse when the import window reopens.

These two stories are different views of the same structural shift: the era of AI companies cooperatively setting infrastructure terms is over. Chips, cloud routing, and payment rails are all actively being renegotiated simultaneously. Who wins the consumer layer matters less than which layer of the stack each player ends up locking down.

Builder Insights: Claude Code vs Windsurf 2026, Karpathy's Autoresearch, and the Agent Orchestration Layer

The dev tool tier list has reached temporary equilibrium

A cross-creator consensus from mid-March 2026 points to a stable structure: Windsurf ranks as the #1 IDE, with Arena Mode (side-by-side model comparison) and Plan Mode (structured task decomposition); Claude Code leads on complex tasks such as large-scale refactors and cross-codebase migrations; and Cursor sits at #3 with redesigned multi-agent parallel execution. Gemini 3.1 Pro enters at equivalent pricing ($2/$12 per million tokens) as a cost-neutral frontier alternative. Claude Sonnet 4.6 is now the free default on claude.ai. According to SecondTalent's 2026 research, 92% of US developers use AI coding tools daily, and 82% of developers globally use them at least weekly.

The more significant development is the multi-agent orchestration layer now building out in parallel. Gas Town operates as a high-throughput orchestration engine: a "Mayor" agent distributes work while "Deacon" monitors system health, designed for dozens of parallel agents executing simultaneously. Antigravity runs multiple agents concurrently across editor, terminal, and browser — you define a mission, agents coordinate to execute it in parallel. Vibe Kanban applies a Kanban board where each task runs in an isolated Git worktree.
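The Mayor/Deacon split described above can be sketched in a few lines. This is an illustration of the pattern only, not Gas Town's actual implementation: `run_agent`, `mayor`, `deacon`, the task names, and the 25% failure threshold are all hypothetical, and a thread pool stands in for real coding agents.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed


def run_agent(task: str) -> str:
    """Hypothetical stand-in for a coding agent; crashes on 'flaky' tasks."""
    if "flaky" in task:
        raise RuntimeError(f"agent crashed on {task!r}")
    return f"done: {task}"


def mayor(tasks: list[str], max_agents: int = 4) -> dict:
    """Distribute tasks across parallel workers and record every outcome."""
    results, failures = {}, {}
    with ThreadPoolExecutor(max_workers=max_agents) as pool:
        futures = {pool.submit(run_agent, task): task for task in tasks}
        for future in as_completed(futures):
            task = futures[future]
            try:
                results[task] = future.result()
            except Exception as exc:  # one crashed agent must not stop the rest
                failures[task] = str(exc)
    return {"results": results, "failures": failures}


def deacon(report: dict, max_failure_rate: float = 0.25) -> bool:
    """Health check: True while the failure rate stays within the threshold."""
    total = len(report["results"]) + len(report["failures"])
    if total == 0:
        return True
    return len(report["failures"]) / total <= max_failure_rate


report = mayor(["refactor auth", "migrate db", "flaky test fix", "update docs"])
healthy = deacon(report)
print(report["failures"], "healthy:", healthy)
```

The point of separating the two roles is that work distribution and health monitoring fail in different ways: the distributor keeps going when one agent crashes, while the monitor decides whether the overall failure rate still justifies running the fleet.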

These tools aren't competing with IDEs. They're building the management infrastructure for parallel agent workforces. The unit of AI-assisted development is shifting from "one agent, one task" to "coordinated team of specialized agents." Workflow design — how you decompose work, manage agent parallelism, verify outputs — is the emerging skill that compounds over time.

Andrej Karpathy open-sources autoresearch — AI running ML experiments autonomously overnight

Karpathy published autoresearch in early March 2026: a 630-line Python script letting an AI agent autonomously run machine learning experiments on a single GPU. The loop: agent reads its own source code → forms improvement hypothesis (adjust learning rate, change architecture depth) → modifies code → runs 5-minute experiment → evaluates whether the result improved → retains or discards → repeats. Target throughput: approximately 12 experiments per hour, roughly 100 overnight.
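The keep-or-discard loop above is essentially greedy hill climbing. Here is a minimal sketch of that control flow, under loud assumptions: it is not Karpathy's script, the "experiment" is a toy loss function rather than a 5-minute training run, and the agent's hypothesis step is replaced by a random hyperparameter tweak. Every function name here is hypothetical.

```python
import random


def run_experiment(config: dict) -> float:
    """Stand-in for a 5-minute training run; returns a loss (lower is better).
    Toy objective with its minimum at learning_rate=0.01, depth=6."""
    return (config["learning_rate"] - 0.01) ** 2 * 1e4 + (config["depth"] - 6) ** 2


def propose_change(config: dict, rng: random.Random) -> dict:
    """Stand-in for the agent's hypothesis step: tweak one hyperparameter."""
    candidate = dict(config)
    if rng.random() < 0.5:
        scaled = config["learning_rate"] * rng.choice([0.5, 2.0])
        candidate["learning_rate"] = max(1e-4, scaled)
    else:
        candidate["depth"] = max(1, config["depth"] + rng.choice([-1, 1]))
    return candidate


def autoresearch_loop(config: dict, n_experiments: int, seed: int = 0):
    """Try one change per experiment; retain it only if the loss improves."""
    rng = random.Random(seed)
    best_loss = run_experiment(config)
    history = [best_loss]
    for _ in range(n_experiments):
        candidate = propose_change(config, rng)
        loss = run_experiment(candidate)
        if loss < best_loss:  # retain the change
            config, best_loss = candidate, loss
        # otherwise discard the candidate and keep the current config
        history.append(best_loss)
    return config, history


EXPERIMENT_MINUTES = 5
experiments_per_hour = 60 // EXPERIMENT_MINUTES  # matches the 12/hour target

start = {"learning_rate": 0.08, "depth": 2}
best, history = autoresearch_loop(start, n_experiments=100)
print(experiments_per_hour, "experiments/hour; best loss so far:", history[-1])
```

Because a change is kept only when the metric improves, the best-so-far loss can never get worse, which is what makes an unattended overnight run safe to leave alone.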

The individual tool matters less than what it signals. AI self-directed scientific experimentation has moved from theoretical to "clone and run tonight." ML research timelines that assumed human-paced experimental cycles need to be revisited. For researchers, the capability debate is settled. What remains open is who frames the research agenda — experimental design, not execution. The first teams that deploy autoresearch-style tooling systematically will build a methodological advantage that compounds over time and is hard to close from behind.

Non-technical designer ships a complete RPG game with Claude — the new zero-barrier benchmark

Ben Shih (designer at Miro, no engineering background) built LennyRPG using Phaser 3, RPG-JS, Supabase, and Claude: a Pokémon-style battle game where players use product knowledge to defeat podcast guests, drawing from 300+ processed episode transcripts (Source: Lenny's Newsletter, March 18, 2026). Two workflow insights worth extracting: first, Shih used Claude to conduct his own PRD interview, finding "AI asks questions I wouldn't have thought to ask myself" — the AI-as-requirements-analyst pattern; second, Claude handled all batch transcript processing at scale, replacing what would previously have required engineering resources.

The 2026 vibe coding narrative often focuses on speed. The more durable signal here is range. The people who can now build software aren't just experienced engineers moving faster; they include people who were previously excluded from the execution layer entirely. That changes what gets built and who builds it.

Greg Isenberg's 10-step AI-first SaaS framework

The framework went viral in mid-March 2026. Its steps: (1) identify a giant market and drill down to a micro-niche; (2) map the end-to-end workflow; (3) find "money moments", the points where payments occur and contracts get signed; (4) quantify pain in daily time and money cost; (5) identify all repetitive mechanical tasks; (6) execute manually first, building a service before automating it; (7) document everything, separating judgment calls from mechanical execution; (8) automate mechanical tasks using agents connected to real tools (email, Slack, Stripe, CRM APIs, not sandbox demos); (9) build a tool wrapper around the service; (10) distribution.

Step 8 is the operationally critical one: the framework requires real API integrations, not demonstration environments. The distinction matters because agents connected to sandboxed workflows produce demos; agents connected to live systems produce businesses. Greg's stated view: 99% of MVPs in 2026 won't need VC to reach PMF. Lovable's $200M ARR with fewer than 100 employees in roughly one year is the empirical reference point making that claim credible rather than aspirational.

Anthropic Dispatch: control Claude on your Mac from a mobile device

Anthropic launched Dispatch (research preview) on March 17 — Max subscribers can control a sandboxed Claude Cowork session on their Mac from any mobile device, paired via QR code. The AI accesses local files, connectors, and plugins on the desktop and executes tasks in the background. The Mac must remain awake with Claude open; early testing showed approximately 50% task success rate.

The strategic framing is straightforward: OpenClaw's institutional footprint has grown enough that Anthropic needed a mobile-native response. Dispatch extends "assign background work to AI" from desktop to pocket. The 50% success rate in research preview is a reality check on how far agentic reliability still has to go — but the interaction model (remote task delegation to a persistent AI session) is the right direction regardless of current execution quality.

Other AI Updates

Google Sashiko: AI catches 53% of Linux kernel bugs that had passed every human review

Google's Linux kernel team open-sourced Sashiko (Apache 2.0) — a multi-agent system monitoring the Linux Kernel Mailing List and reviewing all proposed patches. Detection result: 53% of bugs from an unfiltered set of 1,000 recent upstream kernel issues tagged "Fixes:" — every one of which passed all human review before merge (Source: sashiko.dev). The five-agent architecture covers: architectural correctness, code-vs-commit-message verification, C execution flow tracing, memory safety (leaks, use-after-free, double frees), and concurrency (deadlocks, RCU violations, thread-safety). Primary model: Gemini 3.1 Pro, with Claude and other LLM support.

A 53% detection rate against a 100% human miss rate is not incremental improvement — it's a qualitative threshold. When AI outperforms complete expert human review on safety-critical infrastructure code in the Linux kernel, the question shifts from "should we use AI for code review" to "what is the liability framework for critical software that wasn't run through AI review systems."

Perplexity Comet arrives on iPhone — free, browser-as-AI-interface

Perplexity's Comet AI browser launched on iPhone on March 18 (one week delayed from March 11), free and consistent with the Android version. Features: contextual questions about any webpage, voice mode, cross-tab queries. Now live across iPhone, Android, Windows, Mac. Perplexity surpassed 1 billion monthly queries and closed a $400M Series E at a $24B valuation (Source: company announcement). The strategic move positions Comet against Safari and Chrome directly — making the browser itself the AI interface rather than a search layer operating within a browser. That's a fundamentally different product thesis than "better search."

Stripe + Tempo launch Machine Payments Protocol (MPP) for AI agent transactions

Stripe and Tempo launched MPP on March 18 — an open standard for AI agents to transact in fiat and cryptocurrency (Source: Stripe Blog). The core innovation: a "sessions" primitive where agents authorize a spending limit upfront, then stream micropayments continuously, with thousands of micropayments aggregated into a single on-chain settlement. 100+ services integrated at launch including compute providers, data platforms, and infrastructure services. Visa extended MPP to cover card and wallet payments; Lightspark extended it to Bitcoin Lightning. Tempo went live on mainnet simultaneously, valued at $5B on $500M raised (Series A led by Thrive Capital, Source: Fortune).

MPP fills the foundational gap in agent economics: autonomous AI agents previously couldn't transact without human-in-the-loop payment authorization. The sessions primitive — spending authorization upfront, continuous micropayments without per-transaction overhead — is the right architecture for how agents actually need to operate. Whether MPP becomes the standard or gets displaced by a competing protocol is secondary to the fact that the payment layer for agent-to-service transactions is now being built.
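To make the sessions primitive concrete, here is a minimal sketch of the idea as described above: authorize a cap once, stream small payments against it, then aggregate them into one settlement. This is not the MPP specification or any Stripe or Tempo API. The `Session` class, its fields, and the amounts are hypothetical.

```python
from dataclasses import dataclass, field
from decimal import Decimal


@dataclass
class Session:
    """Hypothetical model of a pre-authorized spending session."""
    spending_limit: Decimal
    spent: Decimal = Decimal("0")
    pending: list[Decimal] = field(default_factory=list)

    def pay(self, amount: Decimal) -> bool:
        """Accept a micropayment only if it keeps total spend within the cap."""
        if amount <= 0 or self.spent + amount > self.spending_limit:
            return False
        self.spent += amount
        self.pending.append(amount)
        return True

    def settle(self) -> tuple[int, Decimal]:
        """Aggregate all pending micropayments into a single settlement."""
        count = len(self.pending)
        total = sum(self.pending, Decimal("0"))
        self.pending.clear()
        return count, total


session = Session(spending_limit=Decimal("1.00"))
# The agent attempts 1,200 payments of $0.001; only those under the cap pass.
accepted = sum(session.pay(Decimal("0.001")) for _ in range(1200))
count, total = session.settle()
print(f"{accepted} accepted, settled {count} payments totalling {total}")
```

The design choice worth noticing is that the only approval step happens when the cap is set. After that, each payment is a cheap local check, and the expensive step (settlement) runs once per batch rather than once per transaction.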

UK government reverses AI copyright position after artist backlash

The UK government abandoned its plan to allow AI training on copyrighted materials without consent on March 18, reversing a position from weeks earlier. The original Data Bill gave creators only an opt-out clause. The reversal followed sustained opposition from Elton John ("absolute losers"), Paul McCartney, and Dua Lipa. Technology Secretary Liz Kendall: "We have listened. We no longer have a preferred option." (Source: BBC) The government will take time to balance creator and tech industry interests.

This is the first meaningful government capitulation on AI training data terms from a major jurisdiction. Every legislative body now drafting AI policy will see this outcome. The opt-out model failed politically in the UK in weeks. The likely policy trajectory going forward — across jurisdictions — shifts toward some form of opt-in or compensation framework, which would structurally raise the cost of training data at scale. That changes the economics of every organization building foundation models.

Analysis

Three infrastructure stories from March 18-19 reveal where the AI industry actually is beneath the product announcements: Microsoft vs. OpenAI-Amazon (cloud routing control), Nvidia H200 China restart (chip supply chain), and Stripe MPP (payment rails for agents). Each represents a different foundational layer, and all three are being renegotiated simultaneously. The pattern: AI application capability has matured faster than the supporting infrastructure has stabilized, so every foundational layer is now being actively contested and locked down.

The Sashiko result deserves attention disproportionate to its current coverage. A 53% detection rate on Linux kernel bugs that experienced maintainers missed entirely isn't a benchmark improvement — it's a category boundary crossed. The Linux kernel underpins most of the world's servers; bugs there have global security implications. When AI crosses a detection quality threshold in that domain, the question changes for the entire software industry: not whether to use AI for safety-critical code review, but what the standard of care looks like going forward for critical systems that skip it.

For builders working with the Claude Code vs Cursor vs Windsurf 2026 toolkit: the tier ranking is stable for now, but the real action is at the orchestration layer. Gas Town, Antigravity, and Vibe Kanban aren't IDE competitors — they're building the management infrastructure for parallel agent teams. As multi-agent workflows become standard, the skill that compounds isn't prompt quality or model selection — it's system design: how you decompose work across agent boundaries, how you manage parallelism, how you verify outputs from systems you don't fully control.

The UK copyright reversal is a precedent signal, not just a UK policy story. Opt-out failed politically within weeks. Every jurisdiction now drafting AI legislation will reference this outcome. The likely downstream effect: some form of opt-in or licensing compensation framework becomes the political floor. That raises training data costs structurally across the industry and creates an opening for approaches — synthetic data, curated licensed datasets, model distillation — that don't depend on scraped web content at scale.

Business & Startups

Stripe's Machine Payments Protocol: The Payment Layer for Agentic Commerce Just Went Live

Stripe and blockchain startup Tempo launched the Machine Payments Protocol (MPP) on March 18, the first open standard built for AI agents to transact autonomously without human intervention (source: Stripe official blog, Fortune).

The core mechanism is a construct called "sessions": an AI agent pre-authorizes a spending cap, then makes continuous streaming micro-payments within that budget—no per-transaction approval required. Visa supports card payments, Lightspark handles Bitcoin Lightning, and Stripe consolidates stablecoins, fiat, and BNPL through Shared Payment Tokens (SPT). Real transactions are already running on mainnet. Browserbase charges agents per session to spin up headless browsers. PostalForm lets agents pay to print and mail physical letters. A New York deli, Prospect Butcher Co., is receiving sandwich orders placed by AI agents.

The business logic here isn't subtle. Stripe is positioning itself as the toll layer of the agentic economy—whoever controls the sessions primitive standard collects fees on every autonomous transaction. With OpenAI, Google, Perplexity, and Anthropic all building shopping agents, the infrastructure play is structurally safer than betting on which AI wins the consumer layer. Stripe builds the roads; it doesn't need to care who drives on them.

AI agents already buy things. The gap now is whether product data is structured for machine consumption. Clean schema markup, standardized product feeds, and API-accessible SKU data are moving from SEO optimization targets to baseline requirements for appearing in the AI agent buying funnel at all.
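As a rough illustration of what "structured for machine consumption" means in practice, the sketch below builds a schema.org `Product` record as JSON-LD. The schema.org types and properties (`Product`, `Offer`, `price`, `priceCurrency`, `availability`) are real vocabulary; the helper function, product name, SKU, and URL are made up for the example.

```python
import json


def product_jsonld(name: str, sku: str, price: float, currency: str,
                   in_stock: bool, url: str) -> dict:
    """Build a schema.org Product record with a single Offer."""
    availability = "InStock" if in_stock else "OutOfStock"
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "sku": sku,
        "url": url,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
            "availability": f"https://schema.org/{availability}",
        },
    }


# Hypothetical product used only for illustration.
markup = product_jsonld(
    name="Example Headphones",
    sku="EX-HP-001",
    price=149.0,
    currency="USD",
    in_stock=True,
    url="https://example.com/p/ex-hp-001",
)
print(json.dumps(markup, indent=2))
```

The output would typically be embedded in the product page inside a `<script type="application/ld+json">` tag, so a crawler or agent can read price and availability without parsing the visual layout.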

One data point that puts this in context: Google AI Overviews now appear on 14% of shopping queries, up from 2.1% in November 2025. That is roughly a 6.7x increase (14 / 2.1) in four months (source: Visibility Labs, analysis of 20.9 million shopping-intent keywords, published March 18). The click-to-product-page assumption is eroding fast. MPP adds another downstream step: agents that can now complete a purchase without a human ever touching a checkout page. The full circuit (AI intercepts search intent, AI browses and compares, AI pays) just closed.

Reddit Pain Point Analysis

The consistent thread across r/Entrepreneur, r/SaaS, and r/SideProject this week: builders are burning out trying to serve everyone instead of owning one specific workflow.

One thread in r/juststart cut through with a deceptively simple frame: "The fastest path to $5K/month isn't a revolutionary idea. It's a boring tool that saves someone 4 hours a week." The highest-voted replies sharpened it further—the real filter isn't just "does it save time" but "who exactly are you saving it for." Operators and local service businesses have different automation thresholds than software-native founders. The consistent takeaway from comments: niche specificity is the product strategy, not just a marketing positioning choice.

Three recurring pain signals are worth tracking. First, vertical SaaS keeps winning over horizontal ambition: builders who narrowed from "CRM for everyone" to "CRM for pest control companies" or "contracts for solo architects" consistently report faster initial sales cycles and lower churn. Second, pricing model anxiety around annual subscriptions is back: monthly billing is resurging as a conversion lever for products with unpredictable usage patterns, with some builders reporting higher trial conversion rates after switching from annual-first despite the LTV trade-off. Third, the AI SOP maintenance gap remains unaddressed: teams use Loom and AI transcription to document workflows, but keeping those docs current as products change is still manual. Multiple threads flagged the same open niche: screen-record once, have AI generate the SOP, then have AI detect when the UI changes and flag the documentation as stale.

The repetition of these pain signals across different communities is itself a market signal. Boring, specific, workflow-integrated tools are consistently the ones indie hackers report actually monetizing.

Builder Updates

@blvckledge: Scaling Google Shopping from $10K to $300K+/Month with Product Duplication

E-commerce advertiser @blvckledge shared a Google Shopping scaling tactic deployed across client accounts over five years: product duplication. The approach involves creating multiple variants of the same product within a single account, giving Google's algorithm more test surfaces for bidding strategy and audience matching rather than concentrating all budget on one SKU. His accounts scaled from $10K/month to $300K+/month using this method (source: @blvckledge on X). The structural logic aligns with how Google Shopping's multi-variant optimization works in practice—more SKU-level data gives the algorithm more handles. One caveat: duplicated products need clean canonical structure to avoid cannibalizing organic rankings, which can offset paid gains elsewhere.

Facebook Creator Fast Track: Up to $3,000/Month Guaranteed Income

Facebook launched Creator Fast Track on March 18 targeting creators with existing audiences on any major platform: $1,000/month guarantee for 100K+ followers, $3,000/month for 1M+ followers, for a three-month window. After that, creators enter Facebook's standard Content Monetization program with permanent traffic weighting preserved (source: Meta official blog, March 18). Context: Facebook paid out nearly $3 billion to creators in 2025, a 35% year-over-year increase, with roughly 60% from Reels. The strategy is direct—acquire proven creators from TikTok and YouTube at a fixed cost before organic growth takes hold. For bootstrapped content creators, three months of guaranteed floor income is useful runway for product experiments. After that, it's Facebook's native algorithm.

ProductHunt & Indie Highlights

Perplexity Comet for Enterprise launched on ProductHunt—a secure AI browser built for enterprise teams with access controls, data governance, and centralized billing. Positioned against the complexity of deploying Chrome Enterprise plus a separate AI layer, targeting mid-market companies that need AI browsing without heavy IT overhead.

Rebel Audio launched as an all-in-one AI podcasting tool for first-time creators, covering recording, editing, social clipping, and publishing within a single platform (source: TechCrunch, March 18). Pricing and early traction numbers not yet public.

Claude Dispatch allows users to text Claude from their phone via messaging without opening an app. The distribution angle is notable: meets users in existing SMS/messaging behavior rather than requiring a new app habit. Lightweight entry point for non-power users who want occasional AI access without behavioral friction.

Key Takeaway

Stripe's MPP and Google AI Overviews reaching 14% of shopping queries are two different types of signal arriving in the same week—one is infrastructure, one is adoption data. Together, they describe the same structural transition: the buying funnel is becoming machine-readable from end to end. The discovery layer (AI Overviews) already intercepts human search intent before the product page. The payment layer (MPP) now completes the circuit. For builders and operators in e-commerce or SaaS distribution, the positioning question is already settled — AI shopping agents exist and are live on mainnet. What's open is whether product data, pricing structure, and purchase flow are legible to machines. That window, before the transition hits mainstream volume, is probably 12 to 18 months wide. The platform bets are clear: Stripe owns payments, Google owns discovery, Replit's $9B valuation signals that even software creation is being commoditized. The independent builder advantage is still speed and specificity—but the infrastructure layer is consolidating fast.

SEO & Search Ecosystem

Brand Mentions Now Outweigh Backlinks for AI Search Visibility — And the Data Proves It

The off-page signal hierarchy has been rewritten. Brand mentions now account for 55% of off-page ranking signals, surpassing backlinks at 45%, according to SearchAtlas's 2026 Off-page Signals Report. More striking: when measuring correlation with Google AI Overviews visibility specifically, brand mentions score 0.664 versus backlinks at 0.287 — more than a 2x gap. This is not directional trend talk. It's measured correlation from live search data.

For anyone still allocating most of their link-building budget toward traditional backlink acquisition and expecting AI search visibility in return, the math no longer works.

The timing matters. Google AI Overviews have now expanded to approximately 14% of shopping-related queries (source: Quantifimedia / Google data), meaning the reach of AI-generated summaries is moving beyond informational content into commerce. When a user searches for "best noise-canceling headphones under $200" and gets a curated AI answer with direct merchant links, the affiliate review site that ranked #1 for that query is now invisible to a meaningful slice of that traffic. The purchase decision happens upstream.

The downstream effect is measurable: small independent publishers in the U.S. are reporting search traffic declines averaging more than 60% over the past six months (source: Search Engine Land 2026). These are often quality sites — the problem isn't content, it's that they have no brand signal, and therefore no AI citation footprint.

The Google March 2026 Core Update continues rolling out (day 19+ of its window), targeting unmoderated AI-generated content at scale and further tightening E-E-A-T signals. Sites with original research and first-hand data are gaining an average of 22% organic visibility (sampled across 32 B2B SaaS sites, source: Quantifimedia). The algorithmic pressure and the AI citation dynamic push in the same direction: brands with real expertise win, anonymous content farms don't.

On the tooling side, Google Search Console quietly rolled out the Branded Queries Filter to all properties on March 11. It automatically separates branded and non-branded traffic in the Performance Report, a more valuable signal than many realize. If your organic traffic is declining, the filter tells you which problem you're actually solving: a brand awareness problem or a discovery traffic problem. Those require completely different responses.

Builder Insights

Ahrefs broke down the AI citation landscape in their latest video "SEO in 2026: How I'd Rank in Google in the AI Era," and the per-platform sourcing differences are sharper than most people assume. Google AI Overviews draw heavily from YouTube, Reddit, and Quora. ChatGPT favors publishers and news outlets. Perplexity leans toward niche and regional sites. The overlap at the top? Only 14% — 86% of the highest-cited sources are unique to each platform.

The practical implication: a single content strategy cannot optimize for all three simultaneously. Ahrefs' recommended framework is to use Brand Radar to identify exactly which pages each AI platform is citing in your niche, then filter for "best," "vs," "review," and "alternative" modifiers — these are the fanout query pages AI assistants prioritize when assembling answers. Getting brand mentions embedded in those specific pages is higher leverage than producing new content from scratch.
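The modifier filter described above is simple enough to sketch. The snippet assumes you already have a list of cited page titles from whatever tool you use. It does not reproduce Brand Radar's export format or API, and the page titles are invented.

```python
import re

# The four modifiers named above, allowing simple plural forms.
FANOUT_PATTERN = re.compile(r"\b(best|vs|reviews?|alternatives?)\b", re.IGNORECASE)


def is_fanout_page(title: str) -> bool:
    """True if the title contains one of the comparison-style modifiers."""
    return FANOUT_PATTERN.search(title) is not None


# Hypothetical titles of pages an AI platform cites in your niche.
cited_pages = [
    "Best CRM for pest control companies",
    "Acme vs Globex: pricing compared",
    "Acme CRM review (2026)",
    "How to install Acme CRM",
    "Top Acme alternatives for small teams",
    "Investor overview",
]

targets = [title for title in cited_pages if is_fanout_page(title)]
print(targets)
```

The word-boundary anchors matter: without them, a short modifier like "vs" would match inside unrelated words and inflate the target list.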

One number worth keeping: among URLs cited by ChatGPT, Perplexity, and Copilot combined, only 12% also appear in Google's traditional top 10 results. Traditional search rank and AI citation are almost entirely separate signal pools.

AEO & AI Search Watch

Perplexity's citation rate sits at 97% — it sources almost every answer. ChatGPT's is 16%. That gap is the clearest reason why Perplexity-first content structuring (clear answers in the first 150 words, structured headers, verifiable data with source labels) is the highest-ROI GEO bet right now. Gartner projects traditional search engine traffic will decline 25% through 2026. Brands investing in GEO alongside traditional SEO are seeing 30-40% higher AI-sourced referral traffic than those who aren't (source: Gartner / upGrowth 2026). The divergence between AI citation leaders and laggards is already compounding.

Strategy: The safest ground in current search is action-intent queries — shopping, tool access, booking, calculators. AI Overviews rarely appear here because users want to complete a task, not read a summary. Concentrate content investment on this intent category. In parallel, build brand signal through Reddit, YouTube, and vertical publications — not because it directly lifts rankings, but because those are exactly the surfaces AI assistants cite. GSC's Branded Queries Filter, checked weekly, reveals whether brand signal is growing fast enough to offset discovery traffic erosion from AI Overviews. When non-branded clicks fall faster than branded ones rise, that's a structural deficit — publishing more content won't close it.
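The "structural deficit" test at the end of that paragraph is plain arithmetic, sketched below. The click counts are invented, and the function assumes you copy branded and non-branded totals out of the Performance Report by hand for two comparable periods. No Search Console API call is implied.

```python
def brand_offset(previous: dict, current: dict) -> dict:
    """Compare branded gains with non-branded losses between two periods."""
    branded_gain = current["branded"] - previous["branded"]
    non_branded_loss = previous["non_branded"] - current["non_branded"]
    return {
        "branded_gain": branded_gain,
        "non_branded_loss": non_branded_loss,
        "net_change": branded_gain - non_branded_loss,
        # Deficit: discovery clicks are falling faster than brand clicks rise.
        "structural_deficit": non_branded_loss > 0
        and branded_gain < non_branded_loss,
    }


# Hypothetical weekly click totals.
last_week = {"branded": 1200, "non_branded": 5000}
this_week = {"branded": 1350, "non_branded": 4100}

summary = brand_offset(last_week, this_week)
print(summary)
```

In this made-up example branded clicks rise by 150 while non-branded clicks fall by 900, so the net change is negative and the deficit flag is set, which is the case where publishing more content will not close the gap.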

Today's Synthesis

Every cost layer of AI infrastructure is inflating simultaneously — compute, energy, capital — yet adoption is accelerating into the headwind, not retreating from it. That divergence is the most important signal buried in today's data.

Alibaba Cloud raised AI compute pricing by up to 34%. Baidu followed within four hours, hiking 5-30%. Together with Tencent's earlier move, all three major Chinese cloud providers have now raised prices for the first time in 20 years of the country's cloud market (source: official announcements). On the same day, Brent crude surged roughly 7% toward $110/barrel as Hormuz Strait transit dropped 98% below normal (source: Goldman Sachs). US diesel broke $5/gallon for the first time since the Russia-Ukraine war. And Powell's press conference crashed full-year rate cut probability from 100% to roughly 50%, with rate hikes explicitly back in committee discussion (source: CME swap pricing, Fed SEP).

Compute costs up. Energy costs up. Cost of capital up. Three input layers tightening at once — a degree of cost convergence that hasn't occurred since AI industrialization began.

And yet: 92% of US developers now use AI coding tools daily (source: SecondTalent 2026). Replit tripled its valuation from $3 billion to $9 billion in six months (source: TechCrunch). Stripe launched MPP, an open standard for AI agents to transact autonomously — and a New York deli is already accepting sandwich orders placed by AI agents on mainnet. Nobody is slowing down.

The reason is that AI productivity gains are outrunning cost inflation by a wide enough margin to make the price increases irrelevant to the adopters. Google's Sashiko caught 53% of a sample of Linux kernel bugs that had all passed human review (source: sashiko.dev). Karpathy's autoresearch runs autonomous ML experiments at 12 iterations per hour overnight with a 630-line script. A Miro designer with zero engineering background shipped a full RPG game using Claude and Supabase. These are not "15% faster" stories. They are "previously impossible, now routine" stories. When productivity jumps by category rather than degree, a 34% compute price hike barely registers as friction.

That logic only holds for one side of the divide, and the other side is getting crushed. SearchAtlas data shows brand mentions correlate with AI Overview visibility at 0.664, while backlinks score 0.287, a roughly 2.3x gap (source: SearchAtlas 2026 Off-page Signals Report). Small independent US publishers have lost an average of more than 60% of their search traffic over six months (source: Search Engine Land 2026). Google AI Overviews now cover 14% of shopping queries, up from 2.1% four months earlier, a roughly 6.7x increase (source: Visibility Labs, 20.9 million keyword analysis). The entities absorbing rising costs are the ones with brand equity, structured data, and machine-readable infrastructure: Stripe capturing the payment standard, cloud providers collecting pricing power, platforms with first-party data moats. The entities being crushed are brandless small sites, information-arbitrage middlemen, and independent stores whose product data isn't legible to AI agents.

Cost inflation is not squeezing everyone equally. It is accelerating a structural split between organizations that generate enough AI-driven surplus to absorb higher input costs and those that don't generate enough surplus to offset even modest increases. The Chinese cloud pricing data from today makes this concrete: Morgan Stanley projects China's AI cloud market growing at 72% CAGR through 2029 (source: Morgan Stanley, March 16). Providers can raise prices 34% and still expect demand to accelerate, because the customers paying those prices are producing returns that dwarf the increase. The customers who can't produce those returns simply stop being customers.

What makes this moment structurally different from previous technology cost cycles is that the rising costs and the widening productivity gap are being driven by the same cause. AI is simultaneously the thing making infrastructure more expensive (token consumption tripling, chip shortages projected through 2030) and the thing making its adopters productive enough to pay the higher price. The technology is funding its own cost inflation — but only for those who can use it. For everyone else, the bill arrives without the offsetting revenue. That asymmetry, not any single product launch or policy shift, is the defining characteristic of where we are in March 2026.

This report was generated by IntelFlow, an open-source AI intelligence engine.

Zecheng Intel Daily | March 19, 2026 Thursday